MVP Development for Startups: A Complete Guide

You’re probably sitting with a product idea that feels bigger than your current budget, team, and runway.

That’s normal. Founders rarely struggle because they lack ideas. They struggle because the first version keeps expanding. A workflow here, an admin panel there, a dashboard because investors may ask for it, and maybe an AI feature because the market expects one. Soon, the “first release” looks like a year-long program.

That’s where disciplined MVP development for startups changes the conversation. An MVP is not a stripped-down app built to save money at all costs. It’s a business test. It helps you find out whether the problem is real, whether users care enough to change behavior, and whether your team should keep investing.

The modern twist is that AI now belongs across the lifecycle, not just inside the product. Used well, it sharpens discovery, speeds engineering, improves testing, and helps teams learn faster after launch.

Why MVP Development is Your Startup’s Lifeline

A first-time founder often thinks the biggest risk is shipping too little. In practice, the bigger risk is shipping too much, too late, and learning nothing useful.

An MVP protects you from that. It forces one hard question: what is the smallest product that delivers a real outcome for a real user? If you can’t answer that clearly, coding won’t save you.

The case for this approach is strong. Approximately 72% of startups employ an MVP approach, which matters in a market where 90% of startups fail overall, and 34% fail because of poor product-market fit. That isn’t just a process preference. It’s a risk management method.

What founders usually get wrong

Most failed MVPs don’t fail because the team couldn’t code. They fail because the team validated the wrong thing.

Common examples:

- They test features, not demand. A polished interface can still solve a problem nobody urgently wants solved.
- They confuse internal excitement with market validation. A founder’s conviction isn’t customer proof.
- They delay the launch to add reassurance features. Reporting, roles, settings, and edge-case handling often arrive before the core value loop works.

Practical rule: If your roadmap has many features but no sharp hypothesis, you’re building output, not evidence.

Why AI changes the method

In earlier startup cycles, teams treated AI as a future enhancement. That’s outdated. AI can support customer research, synthesize interview notes, highlight usage patterns, speed prototyping, assist developers, and later power the product itself.

That shift matters because an MVP’s primary purpose isn’t to impress. It’s to shorten the distance between assumption and evidence.

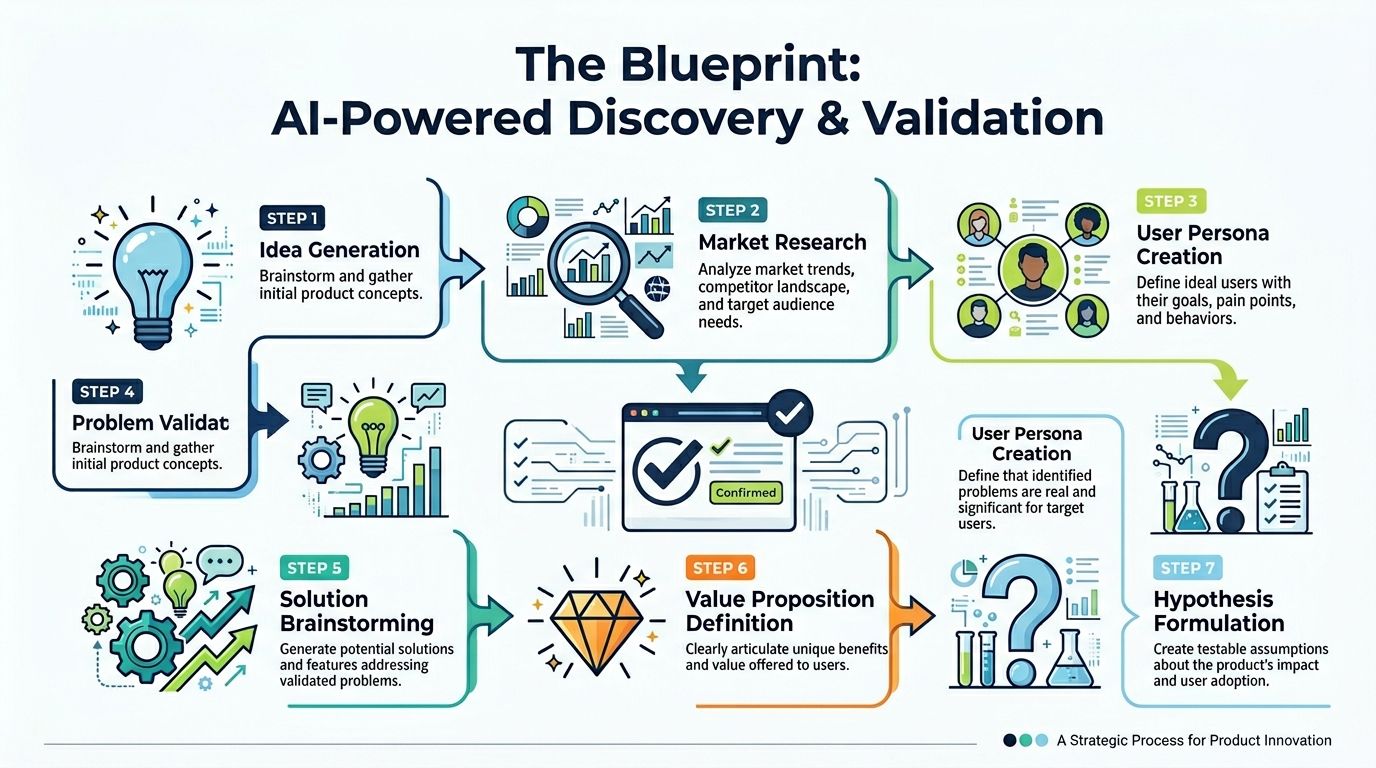

The Blueprint: AI-Powered Discovery and Validation

Before code, there’s a quieter phase that decides whether the build has a chance. Here, good founders separate a promising idea from an expensive distraction.

AI is most useful here when it helps a team think better, not when it pretends to replace judgment. Discovery still needs interviews, market context, and uncomfortable prioritization. What AI adds is speed, synthesis, and better pattern recognition across messy inputs.

Start with the problem, not the feature

A lot of startup ideas are solution-led. “We’ll build an AI assistant for X.” “We’ll use ML to automate Y.” That framing is seductive and weak.

A better starting point is narrower:

- Who is stuck right now?
- What task keeps breaking?
- What workaround do they currently tolerate?
- Why is that workaround no longer acceptable?

If the pain is vague, AI won’t rescue the idea. It will only help you produce vague documents faster.

Run an AI-assisted discovery workshop

A structured workshop creates alignment before anyone debates frameworks or vendors. Teams use it to turn intuition into testable assumptions.

A practical workshop usually covers:

- Market signals, such as recurring complaints, workflow bottlenecks, and buyer urgency
- User roles, because the user, buyer, and approver are often different people
- Current substitutes, including spreadsheets, email chains, WhatsApp groups, internal tools, and manual operations
- Potential AI advantage, where automation, summarization, prediction, or recommendation could create genuine value

This is also the right moment to check organizational readiness. A founder may want AI in the product, but the business may lack usable data, governance, or the right internal process. A structured AI readiness assessment helps identify those gaps before they become architectural or compliance problems.

Use AI to compress the research loop

AI can accelerate research in ways that are practical, not theatrical.

Competitor review

Founders often review competitors manually and stop at the homepage messaging. That misses the important layer. You need to compare positioning, onboarding friction, workflow depth, and what users complain about after signing up.

AI helps by clustering reviews, support themes, feature patterns, and pricing language. It won’t tell you what to build. It will help you see where the market is crowded, shallow, or poorly served.

Interview synthesis

After customer interviews, teams usually sit on notes that never become decisions. AI can transcribe, summarize, and group repeated pain points, objections, and desired outcomes.

That’s useful when you have multiple stakeholders hearing different things. It gives the team a shared evidence base.

Hypothesis drafting

Once patterns emerge, AI can help turn them into simple product hypotheses. For example:

- If operations managers lose time compiling weekly reports, then auto-generated summaries may reduce manual effort.
- If support teams repeat the same answers, then an AI-assisted response layer may improve response quality and consistency.
- If buyers hesitate because the setup feels heavy, then a guided onboarding assistant may reduce early drop-off.

These are not promises. They’re testable assumptions.
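One way to keep hypotheses like these testable is to write each one down with a metric and a pass threshold agreed before launch. The sketch below is illustrative only: the `Hypothesis` class, its field names, the metric name, and the numbers are all assumptions made for the example, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A product assumption paired with the evidence that would support it."""
    statement: str
    metric: str               # what will be measured (hypothetical name)
    threshold: float          # value agreed before launch
    higher_is_better: bool = True

    def is_supported(self, observed: float) -> bool:
        """Return True when the observed value meets the agreed threshold."""
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


# Illustrative numbers only; real thresholds come from your own discovery work.
weekly_reports = Hypothesis(
    statement="Auto-generated summaries reduce manual reporting effort",
    metric="minutes_spent_per_weekly_report",
    threshold=30.0,
    higher_is_better=False,
)

print(weekly_reports.is_supported(22.0))  # True: 22 minutes is under the 30-minute bar
print(weekly_reports.is_supported(45.0))  # False: the manual effort did not drop enough
```

Fixing the threshold in advance is the point of the exercise: it stops the team from redefining success after the data arrives.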

Define a founder-grade validation pack

By the end of discovery, you don’t need a giant requirements file. You need a compact decision set.

A useful validation pack includes:

| Item | What good looks like |
|---|---|
| Problem statement | One painful, specific problem in plain language |
| User persona | Clear primary user with context, constraints, and motivation |
| Current workaround | What they use today and why it falls short |
| Core promise | One outcome the MVP must deliver |
| AI role | Where AI improves speed, quality, or usability |
| Testable hypothesis | A statement the MVP can validate or reject |

The best discovery output is not documentation. It’s shared clarity.

Don’t ignore the funding angle

Good discovery also sharpens your fundraising story. Investors don’t just back code. They back a sharp understanding of the problem, the market, and the path to traction.

If you’re building an AI-first product, it also helps to understand who invests in that category and stage. A curated list of AI early-stage investors can be useful once your hypothesis and target market are tight enough to present credibly.

What validation should produce

A strong discovery phase leaves you with conviction, but not the reckless kind. It should give you:

- A defined problem worth solving
- A specific early user
- A narrow MVP promise
- A plausible AI use case
- A shortlist of assumptions to test first

That’s enough to scope intelligently. Anything beyond that is often comfort work.

From Idea to Actionable Scope: Prioritizing for Impact

The hardest part of MVP development for startups isn’t deciding what could go in. It’s deciding what must stay out.

Founders usually arrive at scoping with a discovery deck, a list of interview insights, and a product vision that already feels larger than the first release should be. Discipline matters most at this point. A good scope protects the learning goal. A bad scope protects everyone’s preferences.

MVP versus MLP

Founders often blend two different ideas.

An MVP proves viability. An MLP aims to create early affection. Both matter, but they serve different moments.

Choose MVP when

- You’re still testing whether the problem is urgent
- The workflow is operational rather than emotional
- You need evidence before committing more budget
- The product depends on behavior change you haven’t observed yet

Choose MLP when

- The category is crowded, and the experience itself is the differentiator
- Trust, brand feel, or adoption friction is central
- Early users have alternatives and low switching pain
- You already know the problem is real, and the product needs a stronger pull

Most first-time founders should start closer to MVP. Not because delight doesn’t matter, but because unvalidated delight is expensive.

A lovable product nobody needs is still a failed product.

Scope around one critical user journey

Scoping gets easier when you stop discussing features in isolation. Start with the smallest end-to-end journey that proves value.

For a B2B reporting tool, that might be:

connect data, generate summary, review output, share report

For a marketplace, it might be:

discover item, request access, confirm transaction

For an internal AI assistant, it could be:

ask a question, retrieve a trusted answer, take the next action

If a feature doesn’t improve that core path, it probably belongs later.

Use three lenses, not one

No single framework is enough. The best teams combine them.

User story mapping

This helps everyone visualize the workflow from the user’s point of view. It exposes hidden complexity fast.

A typical map shows:

- Activities the user is trying to complete
- Tasks beneath each activity
- Release slices that separate the launch scope from later improvements

It’s one of the simplest ways to discover that a “small” feature drags in permissions, notifications, fallback states, and admin handling.

MoSCoW prioritization

This framework works well once the story map exists.

- Must-have items are necessary for the core outcome
- Should-have items improve flow but aren’t required at launch
- Could-have items are worthwhile only after real usage
- Won’t-have-yet items are explicitly deferred

The power of MoSCoW is the last category. Teams need permission to say no in writing.

RICE scoring

RICE adds another layer when the backlog becomes political. It helps compare opportunities through practical trade-offs such as likely reach, expected impact, confidence, and implementation effort.

Use it carefully. It’s a decision aid, not a substitute for product judgment. If the scoring session turns into theater, return to the core user journey.
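As commonly formulated, a RICE score multiplies reach, impact, and confidence, then divides by effort. The sketch below applies that formula to a small backlog. The `BacklogItem` class, the feature names, the units, and every number are hypothetical and exist only to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    name: str
    reach: float        # users affected per period (assumed unit)
    impact: float       # relative impact per user, e.g. 0.25 to 3
    confidence: float   # 0.0 to 1.0
    effort: float       # person-weeks (assumed unit), must be positive


def rice_score(item: BacklogItem) -> float:
    """Compute reach * impact * confidence / effort."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    return item.reach * item.impact * item.confidence / item.effort


# Made-up backlog for illustration.
backlog = [
    BacklogItem("Auto-generated weekly summary", reach=200, impact=2.0, confidence=0.8, effort=4),
    BacklogItem("Role-based permissions", reach=50, impact=1.0, confidence=0.5, effort=5),
    BacklogItem("Shareable report link", reach=150, impact=1.0, confidence=0.9, effort=1),
]

# Highest score first.
ranked = sorted(backlog, key=rice_score, reverse=True)
for item in ranked:
    print(f"{item.name}: {rice_score(item):.1f}")
```

With these inputs the cheap, high-confidence item ranks first and the permissions work ranks last. The ranking is only as trustworthy as the estimates behind it, so treat it as a conversation starter.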

Limit the MVP to the smallest credible feature set

One of the clearest practical guidelines available is this: lean MVPs can cut development costs by 40-60% by focusing on just 3-5 core features, while 68% of MVPs fail due to unvalidated ideas or improper metrics.

That should shape your scoping behavior. A long feature list doesn’t reduce risk. It increases it.

Prototype before you commit engineering time

Founders sometimes treat design as decoration. In early product work, design is a scoping tool.

A rough prototype in Figma is often enough to answer important questions:

- Does the user understand the first action?
- Is onboarding too heavy?
- Does the AI feature need an explanation to feel trustworthy?
- Are users trying to do something the flow doesn’t support?

A clickable prototype is much cheaper to correct than a built interface. This matters most in AI-assisted products, where users need clarity about what the system knows, what it generates, and what still requires human review.

What usually doesn’t belong in version one

This list changes by product, but founders commonly over-prioritize the same things:

- Advanced admin tooling before the main workflow works
- Complex permissions before team usage is proven
- Extensive analytics dashboards before there’s meaningful activity to analyze
- Multi-channel integrations before one channel produces value
- Heavy personalization before the base experience succeeds

A smarter route is to keep the data model and architecture flexible enough to support those later, without forcing them into the launch scope.

A practical cutoff test

When a feature is debated, ask three questions:

| Question | If the answer is no |
|---|---|
| Does it help the user reach the core outcome? | Cut it |
| Does it help us validate a key assumption? | Defer it |
| Would early users refuse the product without it? | Probably not MVP scope |

That test isn’t elegant. It works.
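The three questions can be written as a small decision function. This is a sketch under two assumptions that the test itself does not state: the questions are asked in the order listed, and a feature that passes all three stays in scope. The function name and return strings are illustrative.

```python
def cutoff_verdict(
    helps_core_outcome: bool,
    validates_key_assumption: bool,
    users_refuse_without_it: bool,
) -> str:
    """Apply the three questions in order and return the verdict for the first 'no'."""
    if not helps_core_outcome:
        return "Cut it"
    if not validates_key_assumption:
        return "Defer it"
    if not users_refuse_without_it:
        return "Probably not MVP scope"
    # Assumption: three 'yes' answers mean the feature belongs in the launch scope.
    return "Keep in MVP scope"


# A reporting dashboard that supports the core outcome but tests no assumption.
print(cutoff_verdict(True, False, False))  # Defer it
# The core summary feature that early users would not adopt the product without.
print(cutoff_verdict(True, True, True))    # Keep in MVP scope
```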

Assembling Your Build: Tech Stack, Team, and Architecture

A validated scope still doesn’t guarantee a good MVP. Plenty of startups choose the wrong stack, the wrong team model, or an architecture that slows them down from sprint one.

At this point, product decisions become operational. The question is no longer “what should exist?” It becomes “how do we build this without creating a fragile mess?”

Pick a stack for speed now and flexibility later

Early-stage founders often overcorrect in one of two directions.

One group over-engineers for a scale they don’t have. The other chooses the fastest possible tools without considering future constraints. The right answer is usually in the middle.

For most MVPs, stack selection should reflect:

- Team familiarity
- Delivery speed
- Ease of iteration
- Integration readiness
- Future AI support
- Maintainability under pressure

A practical modern stack often includes a frontend framework such as Next.js, a backend layer that can expose clean APIs, and a database design that won’t collapse when you add analytics, permissions, or AI-generated artifacts later.

Architect for AI, even if AI is light at launch

A lot of founders think AI architecture matters only if the product already includes machine learning. That’s too narrow.

Even if version one only includes a modest AI function, such as summarization, search assistance, or report generation, the architecture should anticipate:

- Prompt orchestration
- Auditability of generated output
- Human review steps
- Storage of input and output artifacts
- Model switching over time
- Usage monitoring and fallback behavior

That doesn’t mean building a giant AI platform upfront. It means avoiding dead ends.

A good rule is to keep AI capabilities behind clear service boundaries so you can update providers, prompts, or validation logic without rewriting the whole product.
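A minimal sketch of such a boundary might look like the following. Everything here is hypothetical: the `SummaryProvider` interface, the stub providers, and the in-memory audit log stand in for whatever model API and storage a real product would use. The point is only that callers depend on `SummaryService`, so the provider can change without touching them.

```python
from dataclasses import dataclass, field
from typing import Protocol


class SummaryProvider(Protocol):
    """Anything that can turn text into a summary (hypothetical interface)."""
    name: str

    def summarize(self, text: str) -> str: ...


class TruncatingProvider:
    """Stand-in provider for this sketch; a real one would call a model API."""
    name = "truncating-stub"

    def summarize(self, text: str) -> str:
        return text[:40]


class FailingProvider:
    """Stub that simulates a provider outage."""
    name = "failing-stub"

    def summarize(self, text: str) -> str:
        raise RuntimeError("provider unavailable")


@dataclass
class SummaryService:
    """The only AI entry point the rest of the app is allowed to call."""
    provider: SummaryProvider
    fallback_text: str = "Summary unavailable. Please review the source document."
    audit_log: list = field(default_factory=list)

    def summarize(self, text: str) -> str:
        try:
            output = self.provider.summarize(text)
            status = "ok"
        except Exception as exc:
            # Fallback behavior: degrade gracefully instead of failing the request.
            output = self.fallback_text
            status = f"fallback: {exc}"
        # Keep input and output so generated content can be audited later.
        self.audit_log.append(
            {"provider": self.provider.name, "input": text, "output": output, "status": status}
        )
        return output


service = SummaryService(provider=TruncatingProvider())
print(service.summarize("Weekly revenue rose while support tickets fell across all regions."))

service.provider = FailingProvider()  # swap providers without changing any caller
print(service.summarize("Another report"))
print(len(service.audit_log))  # 2
```

In a real product the audit log would go to durable storage and the fallback might route to human review, but the shape of the boundary stays the same.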

AI also changes how the team builds

The software delivery process itself has changed. According to Modall’s 2026 MVP development analysis, McKinsey predicts that 72% of organizations will deploy generative AI at scale by 2026, and integrating AI early can create an advantage. The same analysis also notes that AI coding tools can reduce pull request cycle times by 75%.

Those gains are real when the tools are used correctly. They’re dangerous when the tools are used lazily.

Where AI helps engineering teams

- Boilerplate generation for routine patterns
- Test scaffolding that gives QA a stronger starting point
- Documentation support for APIs and internal handoffs
- Code review assistance that flags common issues early

Where human oversight still matters most

- Security-sensitive logic
- Data modeling
- Architecture boundaries
- Performance bottlenecks
- Compliance-sensitive workflows
- Anything customer-facing that can create trust issues

AI can accelerate implementation. It can’t own accountability.

Team model choices that fit startups

The team structure matters as much as the codebase. Founders usually evaluate three models.

In-house team

This gives you the strongest internal ownership. It also takes longer to recruit, onboard, and align.

Best fit when engineering is the core strategic asset from day one, and you already have strong product and technical leadership.

Freelancers

Freelancers work well for sharply bounded tasks, prototypes, or specialist help. Problems start when the product requires shared architecture decisions, continuity, and coordinated delivery across design, backend, QA, and release management.

This route often looks cheaper than it is.

Dedicated development partner

A dedicated team works best when the startup needs coordinated execution, cross-functional discipline, and faster movement than internal hiring allows. It’s especially useful when the product touches AI, cloud architecture, or regulated workflows.

If you’re comparing options, this guide to hiring remote developers is useful because it frames the operational realities, not just the cost discussion.

For founders planning beyond the MVP, it also helps to think in terms of extensibility and ownership, which is where broader experience in custom enterprise software development becomes relevant.

Keep the first architecture boring where possible

Founders sometimes feel disappointed by this advice, but boring architecture ships.

For many MVPs, that means:

| Decision area | Better early choice | Riskier early choice |
|---|---|---|
| Service design | Modular monolith | Premature microservices |
| Data flow | Simple, traceable APIs | Over-layered orchestration |
| Deployment | Standard cloud pipeline | Bespoke infrastructure |
| AI integration | Isolated service wrappers | Hardcoded logic across app |
| Analytics | Event tracking on key flows | Dashboards for everything |

The boring path is easier to test, easier to debug, and easier to hand over when the team grows.

Launch and Learn: QA, Compliance, Rollout, and Metrics

A startup doesn’t earn anything from code sitting in staging. Launch is where the product starts telling the truth.

That truth is often uncomfortable. Users skip onboarding. They misunderstand your AI feature. They love one narrow workflow and ignore the rest. That’s not failure. That’s the whole point of the MVP.

QA for startups has to be lean and intentional

You don’t need a heavyweight testing bureaucracy. You do need a repeatable release discipline.

A practical MVP QA process checks four things before launch:

- Core path stability. The main value loop has to work consistently. If users can’t complete the core task, nothing else matters.
- Known edge cases. Focus on the likely breakpoints: invalid inputs, failed uploads, empty states, payment interruptions, timeout behavior, and permission confusion.
- Device and browser sanity checks. Test the environments your early users actually use. Broad compatibility theater is a waste if your first customers all work in one setup.
- AI output review. If the product generates content, recommendations, or summaries, review for accuracy, tone, and failure handling. Bad AI behavior damages trust fast.

Compliance belongs in MVP thinking, not post-MVP cleanup

Founders in healthcare, finance, and insurance often make the same mistake. They postpone compliance conversations because “this is only the MVP.”

That assumption creates rework. Regulated products should launch lean, but not carelessly.

A practical pre-launch checklist usually includes:

- Data handling review, so you know what user information is collected and why
- Access controls that reflect real roles rather than ad hoc account sharing
- Audit awareness for critical actions and generated outputs
- Vendor review for AI services, hosting, analytics, and communication tools
- Retention and deletion logic appropriate to the product context

If your startup operates in a sensitive domain, this broader view of compliance-first software development is worth factoring in before release decisions harden.

The cheapest moment to think about compliance is before you build the wrong dependency into the product.

Rollout should be phased, not theatrical

A public launch can wait. Early release works better when it’s structured.

Friends-and-family alpha

This phase is good for catching obvious breakage and confusing copy. It is not a reliable source of market validation. People close to you are too generous.

Controlled beta

A narrower external group is where significant signals start. Pick users who match the intended persona, not just people who are easy to recruit.

Useful goals in beta include:

- Confirming the core workflow is understandable
- Observing where users hesitate
- Watching how they interpret the AI layer
- Collecting objections in their own words

Gradual public release

Only expand distribution once the product is stable enough that new users can complete the main journey without human rescue every time.

For AI-enabled products, staged rollout is even more important. You may need tighter monitoring, stronger guardrails, or manual review behind the scenes before scaling access.
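One common way to implement a gradual release is deterministic percentage bucketing: hash each user into a stable bucket and enable the feature for buckets below the current percentage. The sketch below assumes string user IDs and a hypothetical feature name, `ai-summary`. It is one possible approach, not the only one.

```python
import hashlib


def rollout_bucket(user_id: str, feature: str) -> int:
    """Map a user to a stable bucket from 0 to 99 for a given feature."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(user_id: str, feature: str, rollout_percent: int) -> bool:
    """Return True when the user's bucket falls inside the rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return rollout_bucket(user_id, feature) < rollout_percent


# Hypothetical user base for the example.
users = [f"user-{n}" for n in range(1000)]
enabled_at_10 = {u for u in users if is_enabled(u, "ai-summary", 10)}
enabled_at_50 = {u for u in users if is_enabled(u, "ai-summary", 50)}

# Raising the percentage only adds users; nobody who had access loses it.
print(enabled_at_10 <= enabled_at_50)  # True
print(len(enabled_at_10), len(enabled_at_50))
```

Because the bucket depends only on the feature name and user ID, a user sees the same behavior on every visit, which keeps beta feedback consistent while access expands.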

Measure behavior, not vanity

Founders often ask which analytics stack to use before they decide what matters. That order should be reversed.

Your metrics should match the hypothesis you set during discovery.

A useful MVP measurement setup tracks:

- Acquisition source, so you know where early users came from
- Activation events that mark the first value
- Drop-off points in onboarding or setup
- Repeat usage around the core feature
- Support themes, because complaints often reveal mis-scoped assumptions
- AI-specific quality signals, such as acceptance, edit behavior, or override patterns

This doesn’t require a huge data warehouse. Tools such as Mixpanel, Amplitude, product event logs, support tagging, and session replay can give enough signal early on if the event plan is thoughtful.
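To make activation and drop-off concrete, here is a small sketch that counts how many users reach each step of a core journey from a raw event log. The event names, the user IDs, and the four-step funnel are invented for illustration. A product analytics tool would normally do this for you, and this simplified version ignores event timestamps.

```python
from collections import defaultdict

# Hypothetical event log of (user_id, event_name) pairs.
events = [
    ("u1", "signed_up"), ("u1", "connected_data"),
    ("u1", "generated_summary"), ("u1", "shared_report"),
    ("u2", "signed_up"), ("u2", "connected_data"),
    ("u3", "signed_up"),
    ("u4", "signed_up"), ("u4", "connected_data"), ("u4", "generated_summary"),
]

# Assumed core journey for a reporting tool.
FUNNEL = ["signed_up", "connected_data", "generated_summary", "shared_report"]


def funnel_counts(events, steps):
    """Count users who reached each step and every step before it."""
    seen = defaultdict(set)
    for user_id, name in events:
        seen[user_id].add(name)
    counts = []
    for i in range(len(steps)):
        required = set(steps[: i + 1])
        counts.append(sum(1 for names in seen.values() if required <= names))
    return counts


counts = funnel_counts(events, FUNNEL)
for step, count in zip(FUNNEL, counts):
    print(f"{step}: {count}")
# signed_up: 4
# connected_data: 3
# generated_summary: 2
# shared_report: 1
```

The largest drop between adjacent steps is usually the first place to look, and it only shows up if those events were planned before launch.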

Build a learning loop into the release cycle

A healthy post-launch rhythm looks like this:

| Weekly activity | Why it matters |
|---|---|
| Review product events | Shows what users do |
| Read support conversations | Exposes friction in plain language |
| Check AI outputs | Protects trust and identifies patterns |
| Prioritize fixes and refinements | Keeps the roadmap grounded in evidence |
| Revisit assumptions | Stops the team from clinging to outdated beliefs |

If the launch process doesn’t feed decisions back into the backlog, the MVP becomes just another small product release. That misses its real value.

Navigating Realities: Costs, Timelines, and Common Pitfalls

A founder approves six extra features in week two because each one sounds reasonable. By week eight, the team is still debating edge cases, the AI feature has no clear training or prompt strategy, and the runway is thinner than anyone expected. That is how many MVPs drift off course. Not through one big mistake, but through a series of small decisions that break the original purpose of the product.

Cost matters. So do timelines. But the harder problem is discipline.

An MVP only reduces risk when it is built to answer a small number of high-value questions. Bridge has seen this repeatedly across two decades of product delivery. Teams get better outcomes when they treat budget, scope, data readiness, and AI behavior as one operating system, not four separate conversations.

The mistakes that keep repeating

Some startup failures look unique from the outside. Underneath, the pattern is familiar.

The product goes into development before the team agrees on what must be proven. AI gets added because the market expects it, not because the workflow improves. Founders ask for “just one more feature” to protect against user disappointment, then lose the speed that makes an MVP useful in the first place.

The expensive part is rarely writing code alone. It is building the wrong thing, instrumenting too little, or discovering late that the AI workflow needs cleaner data, human review, or tighter controls.

Five pitfalls that deserve blunt attention

Vague success criteria

If the team cannot name the decision this MVP is meant to support, every outcome becomes debatable.

“People liked it” is not enough. “Some users engaged” is not enough either. Define specific signals before the first sprint starts. For AI-backed features, that also means agreeing on acceptable output quality, fallback behavior, and what human intervention looks like when confidence is low.

Scope creep disguised as prudence

This is one of the fastest ways to burn time.

The requests usually sound harmless. Add reporting. Add permissions. Add a second workflow so early users see the bigger vision. Each request has logic behind it. Together, they slow down delivery and blur the test.

Strong MVP teams protect a narrow scope with intent. They do not confuse future roadmap items with launch requirements.

AI used as a badge, not a lever

AI earns its place in an MVP when it improves speed, accuracy, relevance, or decision quality for a defined task.

A useful first version might summarize support tickets, classify documents, rank leads, or assist users within a constrained workflow. A weak first version tries to act like a full product brain from day one. That drives up cost, raises QA effort, and creates trust issues if outputs are inconsistent.

Bridge integrates artificial intelligence at every stage of the software development lifecycle to simplify workflows, predict risks, and improve quality. That starts in discovery, where AI helps analyze user interviews, cluster patterns, and test assumptions faster. It continues in delivery, where teams can use AI for code assistance, test generation, defect prediction, and product analytics. The point is not to add AI everywhere. The point is to use it where it shortens learning cycles or strengthens the product itself.

Weak feedback loops

Useful feedback rarely arrives in one channel.

Sales hears objections. Support hears friction. Product sees drop-off. Founders hear ambition from early adopters that may not match actual usage. Those signals need to be reviewed together, or the team will optimize for the loudest voice instead of the clearest pattern.

This matters even more with AI features, because user trust shows up in behavior. Edits, overrides, retries, abandonment, and escalation requests often tell you more than survey responses.
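These behavioral signals are straightforward to summarize once each AI suggestion is logged with its outcome. The sketch below assumes a hypothetical list of outcome labels and computes the share of each. The label names and the sample data are made up for the example.

```python
from collections import Counter

# Hypothetical outcomes recorded each time a user sees an AI suggestion.
outcomes = [
    "accepted", "edited", "accepted", "overridden", "accepted",
    "edited", "abandoned", "accepted", "edited", "accepted",
]


def signal_rates(outcomes):
    """Return the share of each outcome label, rounded to two decimals."""
    if not outcomes:
        return {}
    total = len(outcomes)
    return {name: round(count / total, 2) for name, count in Counter(outcomes).items()}


rates = signal_rates(outcomes)
print(rates["accepted"])    # 0.5
print(rates["edited"])      # 0.3
print(rates["overridden"])  # 0.1
```

A rising edit or override share over successive weeks is an early warning that output quality is slipping, often before users say so in a survey.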

Underestimating post-launch work

Launch is the start of the learning bill, not the end of the build bill.

After release, teams still need bug fixing, UI cleanup, prompt or model adjustments, analytics corrections, support response handling, and backlog reshaping. AI-based MVPs add another layer. Output quality has to be reviewed, edge cases need containment, and costs may need tuning based on actual usage.

Founders should budget for learning after launch, not just coding before launch.

What a realistic budget range looks like

The right budget depends on product complexity, integration needs, regulatory exposure, and the extent to which AI is integrated into the core experience.

| MVP Complexity | Cost Estimate | Timeline Estimate |
|---|---|---|
| Simple no-code MVP | Lower cost | Shorter timeline |
| Medium custom MVP | Moderate cost | Medium timeline |
| Complex AI or fintech MVP | Higher cost | Longer timeline |

These are direction-of-travel ranges, not quotes. A no-code prototype can validate a workflow quickly, but it may limit control over data, integrations, and AI behavior. A custom MVP costs more, yet it gives the team more ownership over architecture, instrumentation, and future iteration. AI-heavy or fintech products usually sit in a different category because they carry model costs, compliance review, data preparation work, and stricter QA requirements.

How to interpret those ranges

A simple no-code MVP fits early validation of a user journey, internal process, or lightweight marketplace interaction. It is less useful when your product depends on proprietary logic, unusual integrations, or ML features that need tighter control.

A medium custom MVP is often the practical middle ground. It gives startups enough engineering flexibility to build the right foundations without overbuilding for scale too early.

Complex AI or fintech MVPs need more planning because uncertainty exists in two places at once. Product risk is still high, and technical risk is higher. The mistake is not spending more. The mistake is pretending that the extra work around data, observability, compliance, and trust can be skipped.

Timelines should create urgency, not fantasy

A good MVP schedule creates pressure to decide. It does not create pressure to pretend.

If the timeline keeps slipping, look at the cause instead of accepting a vague delay:

- Too many stakeholders are shaping the scope
- Too many features are being treated as launch-critical
- Architecture is being designed for future scale instead of present learning
- Compliance concerns surfaced late, instead of during discovery
- The AI capability was promised before data access, evaluation criteria, or human-review paths were ready

Bridge follows a consultative approach, including discovery and strategy, specific recommendations, AI-driven development, and ongoing support, to keep those issues visible early. That is usually the difference between an MVP that teaches the business something useful and one that only consumes runway.

If you’re weighing what to build first, how much AI belongs in version one, or how to move from idea to a testable product without wasting runway, Bridge Global can help you shape the right MVP path. Their team brings two decades of agile delivery and AI-driven product development to the work of discovery, validation, engineering, and scale.