Your Guide to Healthcare Interoperability Software Development

What if a patient’s health record wasn’t one complete story, but a collection of scattered pages held by different people in different cities? That’s the reality for most patients today. Their story is fragmented: a page with their cardiologist, a chapter with their primary care doctor, and a few scribbled notes from an emergency room visit on vacation.

No one has the full book. This is the fundamental, often dangerous, problem that healthcare interoperability software development is built to solve. It’s not just about tech; it’s about piecing together a person’s complete health narrative so clinicians can make the best possible decisions.

Why Connected Healthcare Can No Longer Wait

Think of it as building the digital bridges that connect isolated islands of data. When these islands are separate, the view of the patient is incomplete, creating a risky environment for care.

The High Cost of Disconnected Data

When systems can’t talk to each other, the consequences ripple through the entire healthcare ecosystem. This isn’t just an inconvenience; it has a very real, very high cost.

- Medical Errors: A doctor without a full patient history is flying blind. They might prescribe a medication that clashes with another or miss a critical allergy note, leading to preventable harm.
- Wasted Resources: How many times has a patient had the same MRI or blood test done simply because their new doctor couldn’t access the old results? This redundancy costs the U.S. healthcare system billions every year.
- Frustrated Patients and Providers: Patients are forced to become manual data carriers, repeating their medical history endlessly. Meanwhile, clinicians burn valuable time on administrative detective work instead of focusing on their patients.

True interoperability is not just about connecting systems; it’s about creating a single, continuous, and comprehensive narrative of a patient’s health journey. It transforms fragmented data points into life-saving insights.

This table highlights the stark contrast between a disconnected system and an interoperable one, showing how these solutions directly address everyday healthcare frustrations.

From Data Silos To Seamless Care

| Healthcare Challenge | Interoperability Solution |
|---|---|
| Fragmented Patient Records | A single, unified view of a patient’s health history, accessible to all authorized providers. |
| Redundant Tests & Procedures | Real-time access to prior lab results, imaging, and clinical notes, reducing waste. |
| Poor Care Coordination | Seamless communication between specialists, primary care, labs, and pharmacies. |
| Patient Data Inaccessibility | Empowered patients with secure, easy access to their own health information via patient portals and apps. |

Ultimately, building these connections moves healthcare from a reactive, fragmented model to a proactive, coordinated, and truly patient-centered one.

A Market at a Tipping Point

The push for connected health is well past the “nice-to-have” stage. It’s now a full-blown market and regulatory imperative. The healthcare interoperability solutions market was valued at around $6.09 billion in 2024 and is expected to surge to $10.49 billion by 2030.

This explosive growth isn’t a fluke. It’s a direct response to the widespread use of electronic health records (EHRs) and new government rules that mandate standardized, open APIs. You can explore more about the market projections to see just how urgent this shift has become.

For any healthcare organization, this is a critical moment. Sticking with siloed, outdated systems is no longer just inefficient; it’s a risk to compliance, financial health, and most importantly, patient safety.

Successfully navigating this change demands a healthtech software development partner who deeply understands both the technical code and the regulatory complexities. This guide is your roadmap to building the connected, intelligent, and patient-first healthcare systems the future requires.

Learning the Language of Health Data

To get different healthcare systems talking to each other, you have to realize they all speak different languages. It’s a bit like a conversation where one person communicates exclusively through structured faxes, another sends formal legal documents, and a third uses modern text messages. To get anything done, you have to become a translator.

Building effective healthcare interoperability software means mastering these data languages, each with its own history, grammar, and purpose.

The Old Guard: HL7v2, CDA, and DICOM

The evolution of these standards mirrors the evolution of technology itself. Each was created to solve a specific problem for its time, and they’re still deeply embedded in hospitals everywhere.

- HL7v2 (Health Level Seven Version 2): For decades, this has been the workhorse of healthcare. Think of it as a highly structured digital fax. It sends packets of text-based information, like lab results or admission notices, in a rigid, pipe-and-hat (| and ^) format. It’s incredibly reliable for what it does, but it’s a pain to work with and wasn’t built for the web.
- CDA (Clinical Document Architecture): This is like a formal, unchangeable PDF of a patient’s clinical summary. It’s perfect for creating a snapshot in time, like a discharge summary or referral note. The downside? It’s a static document, not a source of live, queryable data.
- DICOM (Digital Imaging and Communications in Medicine): As the name suggests, DICOM is the universal language for medical images. It’s the standard that lets an MRI machine talk to a radiologist’s workstation, bundling the image itself with critical patient metadata. It’s indispensable.
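To make the pipe-and-hat format concrete, here is a minimal Python sketch that splits an HL7v2 message into segments, fields (|) and components (^). It assumes the default delimiters and ignores repetitions, subcomponents and escape sequences; the helper names are hypothetical and the sample message is synthetic. Production systems would normally rely on a mature HL7v2 library or integration engine instead.

```python
import re


def parse_hl7v2(message: str) -> dict[str, list[list[list[str]]]]:
    """Split an HL7v2 message into {segment_id: [segment, ...]}.

    Each segment is a list of fields and each field a list of components.
    Assumes the default delimiters ("|" for fields, "^" for components) and
    ignores repetitions ("~"), subcomponents ("&") and escape sequences.
    """
    segments: dict[str, list[list[list[str]]]] = {}
    # HL7v2 ends segments with a carriage return; tolerate \n and \r\n too.
    for raw in re.split(r"\r\n|\r|\n", message.strip()):
        if not raw:
            continue
        fields = raw.split("|")
        segments.setdefault(fields[0], []).append([f.split("^") for f in fields[1:]])
    return segments


def get_field(segments: dict, seg_id: str, field_num: int, occurrence: int = 0) -> list[str]:
    """Return field N (1-based, e.g. PID-5) of a non-MSH segment.

    MSH is excluded because MSH-1 is the "|" separator itself, which shifts
    its numbering by one; this sketch does not correct for that.
    """
    return segments[seg_id][occurrence][field_num - 1]


# Synthetic ORU^R01 result message (no real patient data).
sample = (
    "MSH|^~\\&|LAB|HOSP|EHR|HOSP|202401150830||ORU^R01|MSG0001|P|2.5.1\r"
    "PID|1||12345^^^HOSP^MR||DOE^JANE||19800101|F\r"
    "OBX|1|NM|8480-6^Systolic blood pressure^LN||120|mm[Hg]|||||F\r"
)

parsed = parse_hl7v2(sample)
print(get_field(parsed, "PID", 5))  # ['DOE', 'JANE']
print(get_field(parsed, "OBX", 3))  # ['8480-6', 'Systolic blood pressure', 'LN']
```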

These older standards aren’t going away anytime soon. The real challenge is bridging them with modern applications.

FHIR: A Modern Dialect for a Connected World

This is where FHIR (Fast Healthcare Interoperability Resources) completely changes the game. FHIR was designed from the ground up for the internet.

If HL7v2 is a structured fax, FHIR is a modern REST API. It treats health information not as monolithic documents, but as small, modular blocks of data called “Resources”, like Patient, Observation, or Medication.

This shift to a resource-based model is what makes all the difference. Developers using familiar web technologies can now ask for just the slice of data they need. Instead of parsing a huge, complex document to find a patient’s allergies, you just make an API call for the “AllergyIntolerance” resource.
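A FHIR search is an ordinary HTTP GET, so asking for a patient’s allergies looks like any other REST call. The sketch below assumes a hypothetical server at fhir.example.org and a synthetic response; it builds the search URL and pulls readable labels out of the returned searchset Bundle. A real client would send the request with an Accept: application/fhir+json header and an OAuth 2.0 access token.

```python
from urllib.parse import urlencode

# Hypothetical server base URL; replace with your FHIR endpoint.
FHIR_BASE = "https://fhir.example.org/r4"


def allergy_search_url(patient_id: str) -> str:
    """Build a FHIR search for one patient's AllergyIntolerance resources."""
    return f"{FHIR_BASE}/AllergyIntolerance?{urlencode({'patient': patient_id})}"


def allergy_labels(bundle: dict) -> list[str]:
    """Extract a human-readable label for each allergy in a searchset Bundle."""
    labels = []
    for entry in bundle.get("entry", []):
        resource = entry.get("resource", {})
        # Search results can also carry OperationOutcome entries; skip them.
        if resource.get("resourceType") != "AllergyIntolerance":
            continue
        code = resource.get("code", {})
        codings = code.get("coding") or [{}]
        labels.append(code.get("text") or codings[0].get("display", "unknown"))
    return labels


# Synthetic response body for GET {FHIR_BASE}/AllergyIntolerance?patient=123
example_bundle = {
    "resourceType": "Bundle",
    "type": "searchset",
    "entry": [
        {"resource": {"resourceType": "AllergyIntolerance",
                      "patient": {"reference": "Patient/123"},
                      "code": {"text": "Penicillin"}}},
    ],
}

print(allergy_search_url("123"))
print(allergy_labels(example_bundle))  # ['Penicillin']
```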

This flexibility makes FHIR the ideal foundation for the responsive web and mobile apps that patients and clinicians now expect. As we explored in our guide on the fundamentals of healthcare data engineering, this resource-based approach is key to building modern health applications.

The reality of modern custom healthcare software development isn’t about ripping and replacing old systems. It’s about building smart translation layers on top of them – solutions that can “speak” both HL7v2 and FHIR, connecting legacy infrastructure to the future.

This need is driven by healthcare providers, who are grappling with more patients and a desperate need for better data access. As the primary end-users, they are projected to command between 44.8% and 55% of the interoperability market share by 2026. A huge chunk of this market, 43.7% globally, is focused on the essential work of bridging legacy systems with modern FHIR APIs. This is a direct response to the massive costs, billions annually, caused by disconnected data silos. For a closer look at these market forces, you can read the full analysis on healthcare interoperability solutions.

Ultimately, you have to pick the right tool for the job. For archival documents, CDA is still a solid choice. For medical imaging, DICOM is irreplaceable. But if you’re building the connected, interactive health applications of tomorrow, FHIR is the fluent, flexible language you need to master.

Building an Interoperability Architecture That Lasts

Alright, we’ve talked about the concepts. Now it’s time to roll up our sleeves and talk about the blueprint. When you’re building a healthcare interoperability solution, you’re not just connecting a few systems. You’re designing the central nervous system for your health data – a system that needs to be tough, flexible, and ready for whatever the future throws at it.

This is where your architectural choices become make-or-break. Get it right, and you have a scalable, maintainable system. Get it wrong, and you’re looking at a brittle, expensive mess.

The architecture you land on will define how your entire system scales, how easy it is to maintain, and how secure it can be. The goal is to build something that won’t buckle under the pressure of new standards or ever-increasing data volumes.

Choosing Your Architectural Pattern

In the world of interoperability, two major patterns tend to dominate the conversation: the Enterprise Service Bus (ESB) and the API Gateway. They each have their place, and picking the right one depends entirely on the job you need to do.

An Enterprise Service Bus (ESB) is the heavyweight champion of complex, internal integration. Think of it as a central hub that can handle sophisticated message routing, transformation, and orchestration. It’s especially powerful in legacy environments where you’re dealing with a mix of old and new, like translating HL7v2 messages from a lab system into modern FHIR resources for a new app.

The API Gateway, on the other hand, is a much leaner, more modern approach. It acts as a single, secure front door for all your API requests. It’s brilliant at handling things like authentication, rate limiting, and routing requests to the right backend microservice. If you’re building a cloud-native platform and exposing FHIR APIs to mobile apps or partners, an API Gateway is your best friend.

Here’s a simple way to think about it: Is your main challenge orchestrating complex internal workflows with lots of different formats? You might need an ESB. Is your focus on exposing streamlined, secure data access to the outside world? An API Gateway is probably your answer. In reality, many modern systems end up using a hybrid of both.
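To illustrate the three API Gateway jobs named above (authentication, rate limiting, routing), here is a deliberately small Python sketch. The route table, service names and token check are hypothetical placeholders, and the token-bucket limiter is one common rate-limiting technique among several; a real deployment would use a managed gateway product rather than hand-rolled code.

```python
import time
from typing import Optional


class TokenBucket:
    """Allows `capacity` requests in a burst, refilled at `refill_per_sec`."""

    def __init__(self, capacity: float, refill_per_sec: float):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = capacity
        self.last: Optional[float] = None  # time of the previous request

    def allow(self, now: float) -> bool:
        if self.last is not None:
            elapsed = now - self.last
            self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical route table: public path prefix -> internal backend service.
ROUTES = {
    "/fhir/Patient": "patient-service",
    "/fhir/Observation": "observation-service",
}


class Gateway:
    def __init__(self, capacity: float = 5, refill_per_sec: float = 1.0):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.buckets: dict[str, TokenBucket] = {}

    def handle(self, client_id: str, bearer_token: str, path: str,
               now: Optional[float] = None) -> tuple[int, Optional[str]]:
        """Return (HTTP status, backend service name or None)."""
        now = time.monotonic() if now is None else now
        # Placeholder: a real gateway validates the OAuth 2.0 access token
        # (signature, expiry, audience, scopes) instead of checking presence.
        if not bearer_token:
            return 401, None
        bucket = self.buckets.setdefault(
            client_id, TokenBucket(self.capacity, self.refill_per_sec))
        if not bucket.allow(now):
            return 429, None
        for prefix, service in ROUTES.items():
            if path == prefix or path.startswith(prefix + "/") or path.startswith(prefix + "?"):
                return 200, service
        return 404, None
```

With capacity=2, each client can burst two requests and then has to wait for the bucket to refill before the gateway forwards anything else.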

Ultimately, your architecture has to deliver on the core promise of seamless data flow. To do that, you need to understand not just the technology but the real-world systems you’ll be connecting to. Digging into how major EHRs actually work, for instance by reviewing the documentation on Epic integrations, will give you a crucial dose of reality about what it takes to connect.

Designing APIs That Don’t Break

With your architectural pattern chosen, it’s time to focus on the APIs themselves – the actual workers that move the data. In healthcare, this means building FHIR-compliant APIs that are both secure and built to last.

Here are a few non-negotiable principles I’ve learned over the years:

- Stick to the FHIR Script: Don’t get creative and invent your own data models. Use the standardized FHIR resources like Patient, Observation, and Encounter. This is the lingua franca of modern health data, and using it ensures other systems can understand you right out of the box.
- Lock Down Access: Use modern, robust standards like OAuth 2.0 and OpenID Connect. The SMART on FHIR framework is purpose-built for this, ensuring that only properly authorized users and applications can even get a peek at protected health information (PHI).
- Version Your APIs From Day One: Trust me on this. Standards will change, your product will evolve, and you will need to update your APIs. Plan for it from the beginning with a clear versioning strategy (e.g., api/v1/patient). This prevents you from breaking existing integrations every time you make a change.
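A minimal sketch of URL-path versioning, assuming the api/v1/... convention from the list above and hypothetical handler functions: the old v1 response shape stays available while v2 adopts FHIR’s HumanName structure, so existing integrations keep working after the change.

```python
import re


# Hypothetical handlers for two versions of the same endpoint.
def get_patient_v1(patient_id: str) -> dict:
    return {"id": patient_id, "name": "Jane Doe"}  # v1: flat string name


def get_patient_v2(patient_id: str) -> dict:
    # v2 returns a FHIR-style Patient with a structured HumanName.
    return {"resourceType": "Patient", "id": patient_id,
            "name": [{"family": "Doe", "given": ["Jane"]}]}


HANDLERS = {
    ("v1", "patient"): get_patient_v1,
    ("v2", "patient"): get_patient_v2,
}

# /api/<version>/<resource>/<id>; FHIR ids use letters, digits, "-" and "."
PATH_RE = re.compile(r"^/?api/(v\d+)/([a-z]+)/([A-Za-z0-9\-.]+)$")


def dispatch(path: str) -> tuple[int, dict]:
    match = PATH_RE.match(path)
    if not match:
        return 404, {"error": "unknown path"}
    version, resource, resource_id = match.groups()
    handler = HANDLERS.get((version, resource))
    if handler is None:
        return 404, {"error": f"{resource} is not available in {version}"}
    return 200, handler(resource_id)


print(dispatch("/api/v1/patient/123"))  # (200, {'id': '123', 'name': 'Jane Doe'})
```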

Tackling the Data Translation Headache

Here’s where many projects hit a wall: translating data between different standards. A hospital’s old lab system might be happily spitting out data in HL7v2, but your shiny new patient portal only speaks FHIR. This is where a dedicated data mapping and transformation layer saves the day.

Think of this layer as your system’s universal translator. It has a very specific job:

- Parse the incoming data from its original format (like an HL7v2 pipe-and-hat message).
- Map the fields from the old standard to their correct new home in a FHIR resource.
- Transform the data itself to fit the structure, data types, and rules of the new FHIR standard.
- Validate the final FHIR resource to make sure it’s compliant and ready to be used.
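The four steps can be sketched in Python for a single OBX (observation result) segment. This is an illustrative, partial mapping under stated assumptions: it handles only numeric results, assumes OBX-6 already carries a UCUM unit code, maps only the LOINC coding system and three result-status codes, and its validation covers just two base-spec rules; the function names are hypothetical. A production pipeline would follow a complete mapping specification and run a full FHIR validator.

```python
def parse_segment(segment: str) -> list[list[str]]:
    """Parse: split one (non-MSH) HL7v2 segment into fields and components."""
    return [field.split("^") for field in segment.split("|")]


# Map: HL7v2 coding-system identifiers -> FHIR system URIs (partial).
CODING_SYSTEMS = {"LN": "http://loinc.org"}
# Transform: OBX-11 result status -> Observation.status (partial).
RESULT_STATUS = {"F": "final", "P": "preliminary", "C": "corrected"}
FHIR_OBS_STATUSES = {"registered", "preliminary", "final", "amended",
                     "corrected", "cancelled", "entered-in-error", "unknown"}


def obx_to_observation(obx_segment: str, patient_id: str) -> dict:
    f = parse_segment(obx_segment)  # f[n] is OBX-n because f[0] is the segment ID
    if f[0] != ["OBX"] or len(f) < 12:
        raise ValueError("expected an OBX segment with at least 11 fields")
    code, display, system = (f[3] + ["", "", ""])[:3]
    obs = {
        "resourceType": "Observation",
        "status": RESULT_STATUS.get(f[11][0], "unknown"),
        "code": {"coding": [{"system": CODING_SYSTEMS.get(system),
                             "code": code, "display": display}]},
        "subject": {"reference": f"Patient/{patient_id}"},
    }
    if f[2] == ["NM"]:  # OBX-2 "NM" means numeric -> valueQuantity
        unit = f[6][0]
        obs["valueQuantity"] = {"value": float(f[5][0]), "unit": unit,
                                "system": "http://unitsofmeasure.org", "code": unit}
    return obs


def validate_observation(obs: dict) -> list[str]:
    """Validate: a few base-spec checks only (status and code are required)."""
    errors = []
    if obs.get("status") not in FHIR_OBS_STATUSES:
        errors.append("status missing or not in the Observation status value set")
    codings = obs.get("code", {}).get("coding", [])
    if not codings or not all(c.get("system") and c.get("code") for c in codings):
        errors.append("code.coding needs both system and code")
    return errors


obx = "OBX|1|NM|8480-6^Systolic blood pressure^LN||120|mm[Hg]|||||F"
observation = obx_to_observation(obx, patient_id="123")
print(observation["status"], observation["valueQuantity"]["value"])  # final 120.0
print(validate_observation(observation))  # []
```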

Building this translation engine requires a deep, practical understanding of both the source and target standards. We touch on some of these challenges in our guide on building scalable HealthTech platforms. Without a smart strategy for data governance and translation, your interoperability solution will quickly become a collection of broken pipes and dead ends.

Integrating Security and Compliance from Day One

When we talk about healthcare data, security isn’t just a feature; it’s the foundation on which everything else is built. Exchanging patient information without bulletproof security isn’t just a technical misstep; it’s a disaster waiting to happen. For any team building interoperability software, security can’t be bolted on at the end. It has to be baked in from the very first line of code and influence every single architectural choice.

This philosophy is what we call “security by design.” It transforms compliance from a last-minute scramble into a continuous, deliberate process. Ultimately, you’re not just building software; you’re building trust, which is the most valuable currency when handling Protected Health Information (PHI).

Beyond the Alphabet Soup of Regulations

You’ve heard of HIPAA in the U.S. and GDPR in Europe. It’s easy to see them as bureaucratic headaches, but I encourage you to view them as blueprints for building software people can trust. They tell you what needs protecting and what rights patients have. Your job is to design an architecture that delivers the how.

This means you can’t just check a box and call it compliant. You have to implement technical controls that can withstand actual attacks. Think of these regulations as the absolute minimum – the starting line, not the finish. As we’ve detailed in our guide on developing HIPAA-compliant software, genuine security is a cultural mindset that should infuse the entire development lifecycle.

A crucial part of this mindset is making Secure Code Reviews a non-negotiable part of your workflow. Finding a vulnerability early in the process is a quick fix; finding it in a live environment is a five-alarm fire. Our cyber compliance solutions are designed to embed this rigor from the start.

Core Security Pillars for Interoperability

A secure system is a layered one. You need a defense-in-depth strategy. Here are the absolute must-haves for any interoperability project.

- Robust Access Controls: You need to be able to answer, with 100% certainty, “Who is touching this data, and do they have permission?” This is where standards like SMART on FHIR become invaluable. It uses OAuth 2.0 and OpenID Connect to manage who can log in and what they’re allowed to see, ensuring only verified users with explicit consent can access patient data.
- Encryption in Transit and at Rest: Data has to be unreadable to anyone who shouldn’t see it, period. That means encrypting data in transit (while it’s moving between systems) with protocols like TLS 1.2+ and at rest (while it’s sitting in a database or file server). There’s simply no excuse for leaving PHI exposed at any point.
- Comprehensive Audit Trails: If the worst happens and a breach occurs, you need a black box recorder. Your system must log every single action: every view, every edit, every creation, every deletion of PHI. These audit logs are your first and best tool for forensic analysis and proving compliance after the fact.
- Effective Consent Management: At the end of the day, it’s the patient’s data. Modern interoperability is built on their trust. Your software must give patients clear, straightforward ways to grant and revoke access to their health information for specific providers or purposes. This isn’t just a nice-to-have; it’s a fundamental requirement of both GDPR and patient-centered care.
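For the in-transit half of the encryption requirement, Python’s standard ssl module can enforce a TLS 1.2 floor on outbound connections. This is a minimal client-side sketch, and the open_fhir_url helper is hypothetical; encryption at rest is configured separately (database, disk or key-management settings) and is not shown.

```python
import ssl
import urllib.request


def make_client_tls_context() -> ssl.SSLContext:
    """TLS client context that verifies certificates and refuses < TLS 1.2."""
    # create_default_context() turns on certificate verification and hostname checks.
    context = ssl.create_default_context()
    # Reject TLS 1.0 and 1.1 handshakes outright.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def open_fhir_url(url: str):
    # Hypothetical helper: every outbound call reuses the hardened context.
    return urllib.request.urlopen(url, context=make_client_tls_context(), timeout=10)
```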

Security isn’t a feature; it’s a prerequisite. In healthcare, a system that isn’t secure isn’t interoperable; it’s just a liability. Every API endpoint is a potential doorway into sensitive data, and it must be guarded accordingly.
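Guarding an endpoint usually means two things at once: checking the caller’s authorization and recording the attempt. The sketch below assumes SMART v1-style scope strings (such as patient/Observation.read) and writes an audit entry for every read, whether allowed or denied. The in-memory AUDIT_LOG list, the entry fields and the placeholder fetch are hypothetical stand-ins; a real system would also confirm that a patient/ scope matches the patient in the launch context, and would store audit records somewhere tamper-evident, for example as FHIR AuditEvent resources.

```python
import json
from datetime import datetime, timezone

# Stand-in for append-only, tamper-evident audit storage (hypothetical).
AUDIT_LOG: list[str] = []


def scope_allows(granted_scopes: str, resource_type: str, action: str) -> bool:
    """Check SMART v1-style scopes, e.g. 'patient/Observation.read' or 'user/*.*'."""
    for scope in granted_scopes.split():
        context, _, rest = scope.partition("/")
        if context not in {"patient", "user", "system"} or "." not in rest:
            continue  # ignores non-resource scopes such as 'openid' or 'launch'
        scoped_type, _, scoped_action = rest.rpartition(".")
        if scoped_type in (resource_type, "*") and scoped_action in (action, "*"):
            return True
    return False


def read_resource(user_id: str, granted_scopes: str, resource_type: str, resource_id: str) -> dict:
    allowed = scope_allows(granted_scopes, resource_type, "read")
    # Log every attempt, including denials, before returning anything.
    AUDIT_LOG.append(json.dumps({
        "recorded": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        "action": "read",
        "entity": f"{resource_type}/{resource_id}",
        "outcome": "success" if allowed else "denied",
    }))
    if not allowed:
        raise PermissionError(f"granted scopes do not permit reading {resource_type}")
    return {"resourceType": resource_type, "id": resource_id}  # placeholder for a real fetch
```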

The Value of Certifications

While strong internal practices are essential, external validation is what builds confidence with partners and customers. Pursuing certifications like SOC 2 or HITRUST isn’t about collecting badges; it’s about putting your security posture to the test against rigorous, universally respected standards.

These certifications force you to prove that your controls, processes, and infrastructure have been audited and verified by an objective third party. Committing to these standards from the project’s inception significantly de-risks your investment and sends a powerful message to the market: we take security seriously.

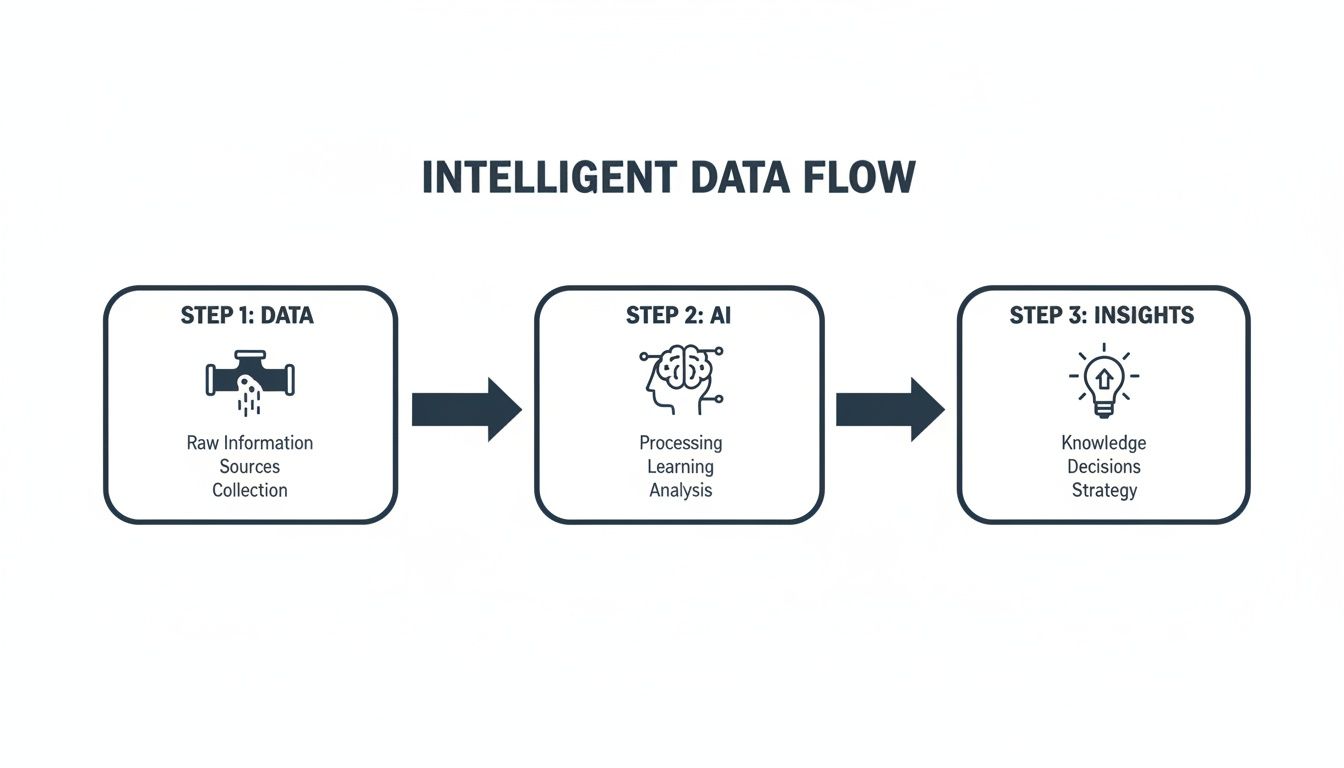

Making Connected Data Intelligent with AI

Once you’ve done the hard work of getting your data to flow freely between systems, you can finally ask the most important question: “What’s next?” The real goal of healthcare interoperability software development isn’t just to connect the pipes; it’s to make the information flowing through them genuinely useful. This is where artificial intelligence comes in, turning your connected data from a passive archive into an active, intelligent asset.

By layering AI onto these newly integrated data streams, you start to see things you couldn’t before: subtle patterns, future risks, and opportunities for automation. You shift from just collecting data to actually understanding it.

Putting Your Connected Data to Work

Think of it this way: interoperability builds the superhighway for data. AI provides the intelligence to manage the traffic, predict congestion, and guide every vehicle to the right destination, faster and safer.

- Translating Clinical Narratives: A massive amount of critical patient information is buried in unstructured text, like a physician’s clinical notes or a discharge summary. AI-powered Natural Language Processing (NLP) acts as a universal translator, reading human language and converting it into structured, analyzable data. For instance, an NLP model can scan thousands of notes, identify every mention of “shortness of breath,” and automatically map it to the correct SNOMED CT code.
- Forecasting Risk to Prevent Crises: When you can pull together data from EHRs, lab reports, and even patient wearables, you have a powerful foundation for predictive analytics. Machine learning models can analyze this comprehensive data to spot patients at high risk for sepsis, a potential hospital readmission, or the onset of diabetes. This gives care teams a crucial head start, allowing them to step in long before a situation becomes critical.
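Real clinical NLP uses trained models, but the shape of its output is easy to show with a simpler, rule-based stand-in: a dictionary matcher that finds phrases in a note, attaches a SNOMED CT code, and applies a crude negation check. The lexicon, the 20-character negation window and the code value are assumptions for illustration only; this is not a substitute for a validated NLP model.

```python
import re

# Hypothetical mini-lexicon: surface phrase -> (SNOMED CT concept ID, preferred term).
# 267036007 is assumed to be "Dyspnea (finding)"; verify every code against
# your licensed SNOMED CT release.
LEXICON = {
    "shortness of breath": ("267036007", "Dyspnea"),
    "dyspnea": ("267036007", "Dyspnea"),
}
# Very naive negation cues, checked in a short window before each match.
NEGATION_CUES = ("no ", "denies ", "without ")


def extract_concepts(note: str) -> list[dict]:
    """Find lexicon phrases in free text and return coded findings."""
    findings = []
    lowered = note.lower()  # assumes ASCII text so offsets line up with `note`
    for phrase, (code, term) in LEXICON.items():
        for m in re.finditer(r"\b" + re.escape(phrase) + r"\b", lowered):
            window = lowered[max(0, m.start() - 20):m.start()]
            findings.append({
                "text": note[m.start():m.end()],
                "system": "http://snomed.info/sct",
                "code": code,
                "display": term,
                "negated": any(cue in window for cue in NEGATION_CUES),
            })
    return findings


note = "Pt reports shortness of breath on exertion. Denies dyspnea at rest."
for finding in extract_concepts(note):
    print(finding["text"], finding["code"], "negated" if finding["negated"] else "present")
```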

The real value of interoperability isn’t just having a complete patient record. It’s using that complete record to predict what will happen next and changing the outcome for the better.

Building these predictive tools is a specialized skill. Partnering with expert AI development services is often the key to turning your historical data into a reliable crystal ball for future patient care.

How AI Makes Interoperability Itself Smarter

AI doesn’t just sit on top of your interoperability solution; it can be woven directly into the framework to make the whole system more efficient and robust.

Automating Data Mapping and Quality Control

One of the most persistent headaches in data integration is semantic mapping – ensuring “systolic BP” from System A is understood as the exact same concept as “Systolic Blood Pressure” from System B. Traditional rule-based engines can handle this, but they are notoriously brittle and a nightmare to maintain.

This is a perfect job for machine learning. An AI model can learn the complex relationships between different medical terminologies and coding systems on its own. It can then suggest or even perform data mapping with remarkable accuracy. This dramatically cuts down on the manual labor needed to onboard a new data source and helps keep the data flowing through your pipes clean and trustworthy.
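The mapping workflow can be prototyped before any model is trained. The sketch below is a plain string-similarity baseline, not machine learning: it expands a few abbreviations, then scores a source label against target LOINC display names with Python’s difflib and suggests the best match above a threshold. The abbreviation table, threshold and two-entry target list are hypothetical; a learned model would replace the scoring function while keeping the same suggest-then-review workflow.

```python
from difflib import SequenceMatcher
from typing import Optional, Tuple

# Hypothetical target vocabulary: LOINC codes and display names.
TARGETS = {
    "8480-6": "Systolic blood pressure",
    "8462-4": "Diastolic blood pressure",
}
# Hypothetical abbreviation table; in practice this is curated or learned.
ABBREVIATIONS = {"bp": "blood pressure", "sys": "systolic", "dia": "diastolic"}


def normalize(label: str) -> str:
    """Lower-case, split on whitespace/underscores and expand known abbreviations."""
    words = label.lower().replace("_", " ").split()
    return " ".join(ABBREVIATIONS.get(w, w) for w in words)


def suggest_mapping(source_label: str, threshold: float = 0.8) -> Optional[Tuple[str, str, float]]:
    """Return (code, display, score) for the closest target, or None if nothing is close."""
    source = normalize(source_label)
    scored = [
        (code, display, SequenceMatcher(None, source, normalize(display)).ratio())
        for code, display in TARGETS.items()
    ]
    best = max(scored, key=lambda item: item[2])
    return best if best[2] >= threshold else None


for label in ["systolic BP", "Dia BP", "heart rate"]:
    print(label, "->", suggest_mapping(label))
```

Suggestions below the threshold come back as None, which is the cue to route that label to a human reviewer instead of guessing.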

A Second Set of Eyes for Clinicians

Once your data is connected and clean, AI can become an invaluable partner for your clinical staff. Imagine an AI algorithm sifting through thousands of DICOM images from your PACS, flagging a handful of scans with subtle anomalies that might indicate a high-risk condition. This doesn’t replace the radiologist; it helps them prioritize their work, focusing their expertise on the most urgent cases first.
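Prioritization of this kind is essentially a priority queue. In this Python sketch, anomaly scores are assumed to come from some upstream image model (not shown), the study identifiers are placeholders, and ties fall back to arrival order. No study is dropped, only reordered, which matches the point that the model supports the radiologist rather than replacing them.

```python
import heapq
import itertools
from typing import Optional


class ReadingWorklist:
    """Radiology worklist ordered by an anomaly score from an upstream model.

    Highest score is read first; equal scores keep arrival order.
    Every study stays on the list: triage changes order, not coverage.
    """

    def __init__(self) -> None:
        self._heap: list[tuple[float, int, str]] = []
        self._arrival = itertools.count()

    def add(self, study_uid: str, anomaly_score: float) -> None:
        # heapq is a min-heap, so negate the score to pop the highest first.
        heapq.heappush(self._heap, (-anomaly_score, next(self._arrival), study_uid))

    def next_study(self) -> Optional[str]:
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


worklist = ReadingWorklist()
for uid, score in [("study-A", 0.12), ("study-B", 0.91), ("study-C", 0.91), ("study-D", 0.40)]:
    worklist.add(uid, score)
print([worklist.next_study() for _ in range(len(worklist))])
```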

This powerful combination of connected data and intelligent analysis is exactly what leaders are exploring when they look into what AI for your business can do. The point isn’t to replace human expertise, but to augment it with timely, data-driven insights delivered right at the point of care. As we outline in our AI transformation framework, integrating AI is a central goal for any modern healthtech project.

Your Development Roadmap from Concept to Launch

So, you have an idea for a new healthcare interoperability solution. Now what? Taking that concept and turning it into a real, working product is a marathon, not a sprint. This roadmap lays out the essential phases, guiding your healthcare interoperability software development from the back-of-a-napkin sketch to a successful launch. When you’re dealing with something as sensitive and complex as health data, a disciplined approach isn’t just nice to have; it’s everything.

The journey starts with discovery, flows into development, and ends with a system that can be deployed and scaled. The key is to stay flexible. Adopting agile principles allows your team to tackle challenges as they arise and show real progress along the way, which is vital in a field where technology and regulations never stand still.

The Phased Approach to Building Interoperability

Every project has its own quirks, but successful builds almost always follow a logical path. Think of these stages as a reliable framework for navigating the inherent complexity and risk.

Phase 1: Discovery and Strategy

Before you write a single line of code, you need to understand the “why.” This phase is all about deep-diving with stakeholders (clinicians, administrators, and IT staff) to map out their current workflows and pinpoint their biggest data-sharing headaches. The goal is to walk away with a rock-solid project scope, a proposed technology stack, and a high-level architectural plan. This is often where expert guidance from digital transformation consulting can make a huge difference.

Phase 2: Design and Prototyping

With your strategy set, it’s time to draw the blueprints. On one side, UX/UI designers will create wireframes and mockups to ensure the system is intuitive for its users. On the other, your architects will design the technical backbone, defining API contracts and data models. This is where you’ll make critical decisions, like whether an API Gateway or a more traditional Enterprise Service Bus (ESB) makes more sense for your integration needs.

Phase 3: Agile Development

This is where the real build work happens, broken down into manageable, iterative sprints. For an interoperability project, a typical sprint might involve building a translation layer to convert HL7v2 messages into FHIR resources, developing secure API endpoints, or coding the core business logic. Having a dedicated development team that lives and breathes healthtech isn’t just helpful; it can be a massive accelerator.

The Unique Challenges of Testing and Validation

Testing an interoperability solution is a whole different ballgame compared to standard software QA. You aren’t just looking for bugs in your own code. You’re trying to prove that your system can reliably and securely “talk” to a whole universe of other systems you don’t control.

The real test of interoperability is not whether your software works, but whether it works with everything else. Validation against industry standards isn’t optional; it’s the core measure of success.

This requires specialized tools and environments; you can’t just wing it, and testing sandboxes are non-negotiable. For example, the Inferno Framework tests a FHIR implementation for conformance with specific profiles and implementation guides, such as US Core, using test data instead of real patient records. Only after this rigorous process can you be confident your solution is ready for the real world.
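Alongside tools like Inferno, a cheap first check is to compare what a sandbox server declares in its CapabilityStatement (returned by GET [base]/metadata) with what your integration actually needs. The statement below is synthetic and the REQUIRED table is a hypothetical set of requirements; a gap report like this complements, rather than replaces, full conformance testing.

```python
# Synthetic CapabilityStatement, shaped like a response from GET [base]/metadata.
capability = {
    "resourceType": "CapabilityStatement",
    "fhirVersion": "4.0.1",
    "rest": [{
        "mode": "server",
        "resource": [
            {"type": "Patient", "interaction": [{"code": "read"}, {"code": "search-type"}]},
            {"type": "Observation", "interaction": [{"code": "read"}]},
        ],
    }],
}

# Hypothetical requirements for this integration: resource type -> interactions.
REQUIRED = {
    "Patient": {"read", "search-type"},
    "Observation": {"read", "search-type"},
}


def missing_interactions(cap: dict, required: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return {resource_type: interactions the server does not declare}."""
    supported: dict[str, set[str]] = {}
    for rest in cap.get("rest", []):
        if rest.get("mode") != "server":
            continue
        for res in rest.get("resource", []):
            codes = {i["code"] for i in res.get("interaction", [])}
            supported.setdefault(res["type"], set()).update(codes)
    gaps = {}
    for rtype, needed in required.items():
        missing = needed - supported.get(rtype, set())
        if missing:
            gaps[rtype] = missing
    return gaps


print(capability["fhirVersion"], missing_interactions(capability, REQUIRED))
```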

Deployment, Scaling, and Intelligent Operation

Once your solution is built and validated, it needs a place to live. A cloud-native deployment on a platform like AWS, Azure, or Google Cloud is often the best choice, giving you the scalability and resilience to handle unpredictable data volumes. That said, some healthcare organizations will have strict data sovereignty or security policies that demand an on-premise deployment, so it’s crucial to plan for that possibility.

As your system gets up and running, the goal shifts from just connecting data to making it smart. This is where the real value emerges.

Feeding clean, interoperable data into AI and machine learning models turns raw, connected data into a strategic asset, surfacing clinical and operational insights that were impossible to see before. Pairing solid product engineering services with this kind of forward-thinking roadmap ensures your product not only gets to market but is built to last.

Frequently Asked Questions (FAQ)

When you start digging into a healthcare interoperability project, a lot of questions bubble up for everyone, from the C-suite to the development team. Let’s tackle some of the most common ones I hear in the field to give you some clarity.

What is the biggest challenge in healthcare interoperability software development?

Honestly, the biggest hurdle isn’t technical; it’s semantic. Getting two systems to talk to each other with an API is the easy part. The real challenge is making sure they understand each other perfectly.

For example, when one EHR sends a “blood pressure” reading, does the receiving system interpret it in the exact same way, with the same units and context? This is where mastering complex standards like FHIR and enforcing strict data governance becomes non-negotiable. It’s about creating a shared language, not just building a bridge.
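Units are a concrete example of this semantic problem. The sketch below assumes FHIR valueQuantity elements coded in UCUM and converts kPa to mm[Hg] (1 kPa is about 7.50062 mmHg); the function name and the two-unit table are hypothetical. Refusing to guess when a unit is missing or unrecognized is the important design choice.

```python
UCUM = "http://unitsofmeasure.org"
# Conversion factors into mm[Hg]; 1 kPa is approximately 7.50062 mmHg.
TO_MM_HG = {"mm[Hg]": 1.0, "kPa": 7.50062}


def normalize_bp(quantity: dict) -> dict:
    """Return a blood-pressure Quantity expressed in mm[Hg], or raise ValueError."""
    code = quantity.get("code")
    # Only trust coded UCUM units; free-text units like "mm hg" are rejected.
    if quantity.get("system") != UCUM or code not in TO_MM_HG:
        raise ValueError(f"cannot interpret unit {code!r}; refusing to guess")
    value = round(quantity["value"] * TO_MM_HG[code], 1)
    return {"value": value, "unit": "mmHg", "system": UCUM, "code": "mm[Hg]"}


print(normalize_bp({"value": 16, "unit": "kPa", "system": UCUM, "code": "kPa"}))
```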

Should we build our own interoperability solution or buy a pre-built one?

This is the classic “build vs. buy” dilemma, and there’s no single right answer. It really hinges on what your organization needs. Off-the-shelf platforms can get you up and running quickly, but you might find yourself hitting a wall when you need to connect to a legacy system or support a unique clinical workflow.

On the other hand, going the custom healthcare software development route gives you a solution that fits like a glove. The trade-off is that it demands deep expertise in healthtech standards and compliance. We often see a hybrid approach work best: use a solid platform as your foundation and then bring in a skilled partner to build out the custom pieces you need.

The choice isn’t just about build vs. buy; it’s about control vs. speed. A custom build gives you total control over the roadmap and intellectual property, which can be a major long-term advantage.

How much does building an interoperability solution cost?

The cost can swing dramatically depending on what you’re trying to achieve. A straightforward project to connect two internal systems might start in the tens of thousands.

If you’re aiming for an enterprise-grade platform that has to shake hands with multiple external partners and includes advanced features from our AI development services, you’re likely looking at a budget in the hundreds of thousands or even more. The most important step is a thorough discovery phase at the very beginning. Nailing down the scope upfront is the best way to prevent budget surprises later on.

How does FHIR improve on older standards like HL7v2?

Think of HL7v2 as an old, cryptic fax. It’s a rigid, text-based message that’s a nightmare to parse and almost impossible to modify without breaking something. It worked for its time, but it wasn’t built for the modern web.

FHIR (Fast Healthcare Interoperability Resources) is the complete opposite. It’s a modern, web-native standard built on RESTful APIs. It cleverly defines data as modular “resources,” such as Patient, Observation, or Medication. This structure makes FHIR incredibly flexible and a dream for developers to work with. It’s why FHIR is the engine behind so many modern mobile and web health apps that need instant access to data, and it’s a cornerstone of the custom software development projects we’re building today, as seen in many of our client cases.

Ready to build the future of connected healthcare? The path to effective interoperability requires a partner who understands the technology, the regulations, and your unique business goals. Bridge Global is a dedicated healthtech software development partner with a proven track record of delivering secure, scalable, and intelligent solutions.