Secure Healthcare Software Architecture: An Essential Guide

Healthcare software is one of the few domains where a bad architectural decision can trigger a security incident, a compliance failure, operational disruption, and patient distrust at the same time. That is why secure healthcare software architecture has to be treated as a board-level design concern, not a late-stage engineering task.

The teams that get this right do not start with a checklist. They start with system boundaries, data flows, identity rules, and operational controls. In practice, that means deciding early how Protected Health Information moves, where it can appear, who can request it, how every access is verified, and which parts of the platform are allowed to fail without exposing data.

The High Stakes of Healthcare Software Security

Healthcare has remained the most breached industry globally for over a decade, and its average cost per breach is consistently among the highest of any sector. A 2023 industry report also found that 75% of healthcare organizations experienced a cyberattack (techexactly.com). Those figures change the conversation immediately. Security is not an enhancement layer. It is part of business viability.

Leaders often frame the problem too narrowly. They ask whether their app is encrypted or whether access is role-based. Those controls matter, but breaches usually expose broader architectural weaknesses. Data appears in places nobody intended. Privileges expand over time. Logs become more sensitive than the database they were meant to protect.

That is why experienced teams bring in a healthtech software development partner early, before product, platform, and compliance decisions drift apart. Strong architecture protects more than records. It protects uptime, procurement readiness, audit readiness, and trust with clinicians, patients, and enterprise buyers.

For teams looking to ground strategy in practical operational habits, essential data security in healthcare is useful because it reinforces a point many programs miss: healthcare security depends on day-to-day execution just as much as policy.

Key takeaway: In healthcare, insecure architecture is not just a technical debt item. It becomes financial exposure, delivery friction, and reputational risk.

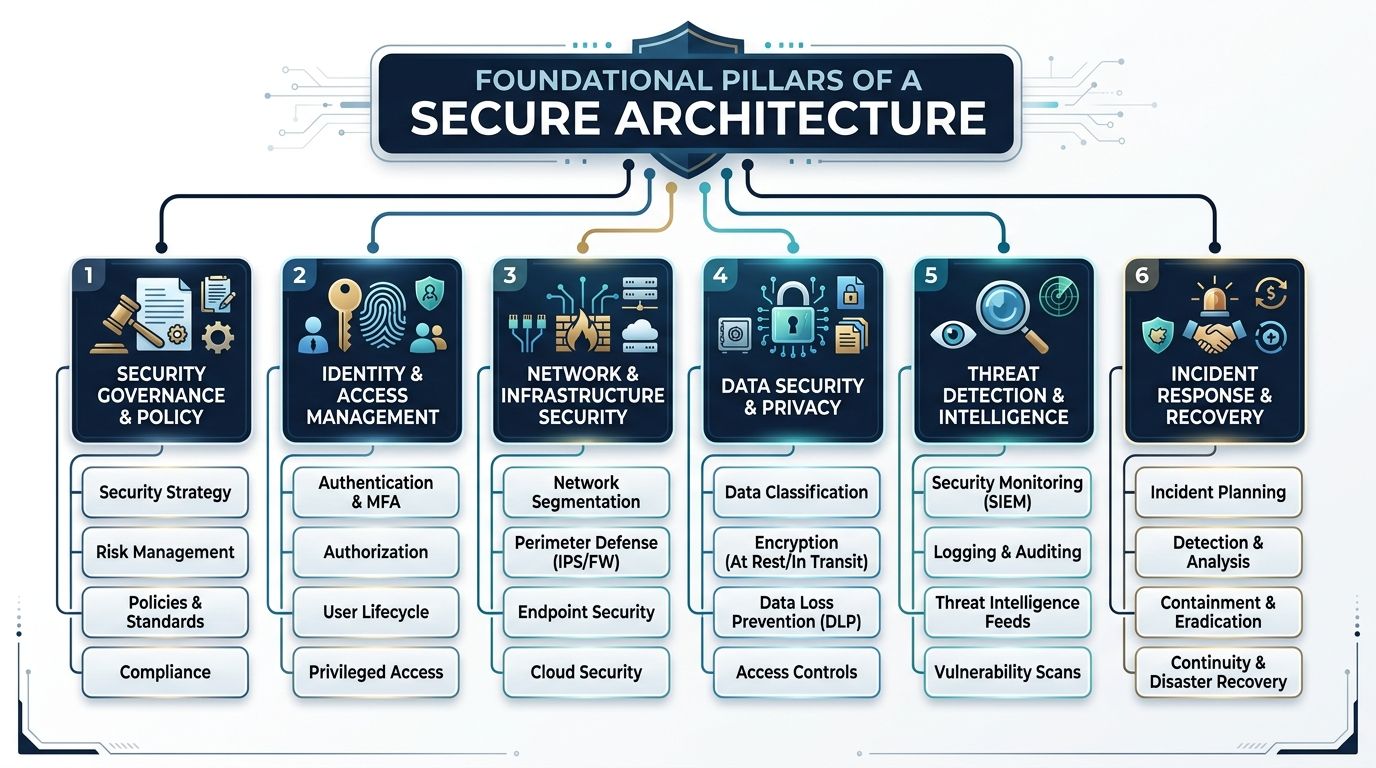

Foundational Pillars of a Secure Architecture

Good security programs in healthcare usually become readable once you reduce them to three architectural positions: Zero Trust, Defense in Depth, and Security by Design. If one of these is missing, the system usually compensates with manual controls, exceptions, and fragile workarounds.

Successful custom healthcare software development starts from these principles, not from frameworks pasted into a backlog after the platform is already taking shape.

Zero Trust means every request earns access

A Zero Trust security model enforces per-request authorization using contextual signals, and retrofitting Zero Trust after deployment increases implementation costs by 300-400% while creating compliance gaps (accountablehq.com).

That matters because healthcare environments rarely stay simple. A user may be legitimate, but on an unmanaged device. A clinician may have the right role but be requesting data outside the expected workflow. A service account may be valid but behave abnormally.

Zero Trust changes the default answer from “allowed unless blocked” to “blocked unless proven safe in context.”
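A minimal sketch of that default can make the idea concrete. The signal names, the anomaly threshold, and the `authorize` function below are all illustrative assumptions, not a reference to any particular product or policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    """Signals gathered for one request. All field names are hypothetical."""
    user_authenticated: bool
    device_managed: bool
    in_expected_workflow: bool
    anomaly_score: float  # 0.0 (normal) to 1.0 (highly unusual)


def authorize(ctx: RequestContext, max_anomaly: float = 0.5) -> tuple[bool, str]:
    """Deny by default: access is granted only if every check passes."""
    checks = [
        (ctx.user_authenticated, "user is not authenticated"),
        (ctx.device_managed, "device is unmanaged"),
        (ctx.in_expected_workflow, "request is outside the expected workflow"),
        (ctx.anomaly_score <= max_anomaly, "behavior looks abnormal"),
    ]
    for passed, reason in checks:
        if not passed:
            return False, reason  # first failed signal blocks the request
    return True, "all contextual checks passed"
```

The point of the shape is that there is no "allow" branch that can be reached by skipping a check. A real deployment would likely evaluate these signals in a gateway or policy service rather than in application code.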

Defense in Depth prevents single-point failure

Think of this like concentric protection rings around PHI. One failed control should not expose the whole environment.

A practical layered model usually includes:

- Network controls: Segmented environments, private subnets, tightly scoped ingress, and service-to-service restrictions.
- Application controls: Input validation, secure session handling, API authorization, rate limits, and strong error handling.
- Data controls: Encryption, tokenization where appropriate, masking, retention rules, and isolated backups.
- Operational controls: Monitoring, alerting, incident runbooks, dependency review, and infrastructure change governance.

A common failure pattern is relying too heavily on one layer, usually perimeter filtering or database encryption. Attackers do not care which control you invested in. They care about which control you forgot.

Security by Design removes expensive rework

Security by Design means architecture reviews happen before implementation choices harden into dependencies. Teams classify data before creating analytics pipelines. They define trust boundaries before exposing APIs. They decide which systems may process PHI before vendors are embedded in the workflow.

What does not work is the opposite approach:

| Late security pattern | Real consequence |
|---|---|
| Add access rules after feature delivery | Privilege sprawl and inconsistent enforcement |
| Encrypt storage only | PHI still leaks through logs, exports, and tooling |
| Review vendors after integration | Contract, telemetry, and data handling surprises |
| Treat audit logging as a reporting task | Missing forensic evidence during incidents |

Architect’s rule: If a control is expensive to add later, it probably belongs in architecture, not in remediation.

Architecting the Complete Secure Data Lifecycle

Many healthcare teams still equate data security with encrypted databases. That is not enough. PHI rarely sits still. It moves through forms, APIs, background jobs, event buses, search indexes, support tools, analytics dashboards, and incident telemetry. Every hop is part of the attack surface.

A critical vulnerability appears when PHI traverses application logs, error payloads, and crash reporting tools. This kind of secondary system leakage represents 40-60% of preventable breaches in enterprise environments.

That one fact explains why many otherwise mature systems still fail audits and incident reviews. The primary database may be well protected, while observability tooling accumulates sensitive data in plain sight.

Follow one patient record across the stack

Take a simple patient intake event.

A patient submits symptoms through a web or mobile form. The application validates input and sends it to an API. The API stores structured fields, triggers downstream workflows, writes audit events, and may call external services for notifications, care routing, or analytics.

The security question is not “is the database encrypted?” The core question is “where else did that same PHI appear?”

Common exposure points include:

- Application logs: Request bodies, debug traces, stack traces, and serialized objects
- Error payloads: Validation failures that echo submitted content back into monitoring systems
- Crash reporters: Session and event context attached automatically by SDKs
- Analytics tools: User property tracking, event labels, and screen recordings
- Search and cache layers: Replicated payloads copied for speed, not governance
- Support tooling: Admin consoles, ticket exports, and screenshots shared during troubleshooting

Effective design patterns

The most reliable answer is to build data path control into the architecture itself.

Use a combination of these patterns:

- Classify PHI at ingress: Label sensitive fields when data enters the system, not after storage. That allows downstream services to apply redaction and policy consistently.
- Redact before observability: Logging libraries, APM agents, and error handlers should remove or mask protected fields before data leaves the application process.
- Separate PHI-bearing services: Services that process protected data should not share unrestricted telemetry pipelines with non-sensitive systems.
- Constrain third-party SDKs: Session replay, product analytics, and customer support widgets need explicit review. If they are not architected for PHI-safe use, keep them outside protected workflows.
- Treat exports as first-class architecture: CSV generation, reporting pipelines, and scheduled extracts often bypass the same controls applied to user-facing apps.
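As one way to picture "redact before observability", the sketch below uses Python's standard `logging` module. The `PHI_FIELDS` set stands in for whatever classification was applied at ingress, and the field names are assumptions for illustration only.

```python
import logging

# Hypothetical set of field names classified as PHI at ingress.
PHI_FIELDS = {"name", "dob", "ssn", "symptoms"}


def redact(payload: dict) -> dict:
    """Return a copy with PHI-classified fields masked, recursing into nested dicts."""
    clean = {}
    for key, value in payload.items():
        if key in PHI_FIELDS:
            clean[key] = "[REDACTED]"
        elif isinstance(value, dict):
            clean[key] = redact(value)
        else:
            clean[key] = value  # lists and other containers are not inspected here
    return clean


class RedactingFilter(logging.Filter):
    """Masks dict-style log arguments before the record is formatted."""

    def filter(self, record: logging.LogRecord) -> bool:
        if isinstance(record.args, dict):
            record.args = redact(record.args)
        return True  # always keep the record; only its contents change
```

Attaching the filter to a handler (for example `handler.addFilter(RedactingFilter())`) means a call like `logger.info("intake: %s", payload)` is masked before it is written. This only covers dictionaries passed as log arguments; strings that already contain PHI would need separate handling.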

Database encryption is necessary, but incomplete

Teams often implement strong storage security and still remain exposed because developers, DevOps engineers, or vendors can see the same sensitive data in tools surrounding the core product.

That is why mature cyber compliance solutions focus on the full path, not just the repository. The same principle applies to modern custom software development. Security has to govern data creation, transformation, observation, retention, and deletion.

Practical test: Pick one PHI field, trace it from user input to deletion, and list every system that touches it. Most hidden risks appear in the systems people forget to include.

A short checklist for architecture reviews

Before approving a healthcare release, ask:

- Which services can receive raw PHI
- Which logs can capture request or response bodies
- Which vendors receive diagnostics or user behavior data
- Which caches, queues, and replicas store sensitive payloads
- Which teams can access production support data
- Which deletion process removes PHI from secondary systems

If those answers are incomplete, the architecture is incomplete.
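Some teams find it useful to keep these answers as data rather than in a document, so gaps can be flagged automatically. The following is a rough sketch under that assumption; the `SystemEntry` fields and the two rules are hypothetical and cover only part of the checklist.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemEntry:
    """One row of a hypothetical PHI data-flow inventory."""
    name: str
    receives_raw_phi: bool
    logs_bodies: bool
    has_deletion_process: bool


def review_gaps(inventory: list[SystemEntry]) -> list[str]:
    """Flag systems whose answers suggest PHI can linger or leak."""
    findings = []
    for system in inventory:
        if system.receives_raw_phi and not system.has_deletion_process:
            findings.append(f"{system.name}: holds PHI but has no deletion process")
        if system.receives_raw_phi and system.logs_bodies:
            findings.append(f"{system.name}: logs bodies that may contain PHI")
    return findings
```

An empty result does not prove the architecture is complete. It only shows that the systems someone remembered to list have answers on record.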

Identity Management and Secure Cloud Patterns

Identity is where most secure healthcare software architecture becomes either resilient or brittle. Perimeter-based assumptions break quickly in healthcare because the environment is mixed by design. Clinical systems remain on-premises. Patient-facing products often run in the cloud. Enterprise identity may sit in one provider while vendors, contractors, and devices enter through different paths.

The result is not just complexity. It is inconsistency. And inconsistency is what attackers exploit.

A 2024 Gartner report indicates that 45% of healthcare apps fail to scale due to poor architecture, and cloud complexity makes the problem worse because misconfigurations and inconsistent identity policies create major attack surfaces.

RBAC is the start, not the finish

Role-Based Access Control is still useful. It keeps entitlements understandable. It maps well to clinical and administrative job functions. It satisfies basic separation-of-duty needs.

But RBAC alone struggles with modern healthcare realities:

- A clinician may need temporary access to a patient outside their usual panel.
- A contractor may have the right role, but only from managed devices.
- An internal admin may need powerful access that should never work from public networks.
- A machine identity may be valid only for one service, one environment, and one narrow action.

That is why strong platforms layer contextual policies on top of roles. Access decisions should consider identity, device posture, network context, environment, resource sensitivity, and current risk signals.
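The layering can be sketched as a role check followed by contextual conditions, including a time-bound grant for out-of-panel access. The role names, permissions, and parameters here are illustrative assumptions rather than a recommended policy.

```python
from datetime import datetime, timezone
from typing import Optional

# Hypothetical role-to-permission mapping (the RBAC baseline).
ROLE_PERMISSIONS = {
    "clinician": {"read_chart"},
    "admin": {"read_chart", "export_data"},
}


def is_allowed(
    role: str,
    action: str,
    *,
    patient_in_panel: bool,
    device_managed: bool,
    temp_grant_expires: Optional[datetime] = None,
    now: Optional[datetime] = None,
) -> bool:
    """Roles decide what is possible; context decides whether it is allowed now."""
    current = now or datetime.now(timezone.utc)
    if action not in ROLE_PERMISSIONS.get(role, set()):
        return False  # the role never permits this action
    if not device_managed:
        return False  # right role, wrong device
    if patient_in_panel:
        return True
    # Outside the usual panel: require an unexpired temporary grant.
    return temp_grant_expires is not None and current < temp_grant_expires
```

Keeping the temporary grant as an expiry timestamp, rather than a permanent role change, is one way to avoid the privilege sprawl described earlier.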

On-prem, cloud, and hybrid each fail differently

| Deployment model | Strength | Typical security weakness |
|---|---|---|
| On-prem | Strong local control over legacy clinical systems | Flat internal trust and older authentication patterns |
| Public cloud | Better automation, policy-as-code, and elastic scale | Misconfigured IAM, storage, networking, and secrets handling |
| Hybrid | Practical for real healthcare environments | Identity drift between old and new systems |

Hybrid is often the answer in healthcare, but it only works if identity policy is centralized while enforcement remains local to each trust boundary.

Secure patterns worth standardizing

Teams should normalize a few controls across every environment:

- SSO as the default entry point: Reduce password sprawl and centralize identity lifecycle.
- MFA for privileged and sensitive workflows: Especially for administrative paths, remote access, and patient data exports.
- Short-lived credentials for services: Avoid static secrets where token-based or workload identity options exist.
- Policy enforcement at multiple layers: Gateway, application, and resource level.
- Environment isolation: Development, staging, and production should not share broad trust by convenience.
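To illustrate what "short-lived" means for service credentials, here is a toy token built from Python's standard library. It is a sketch of the expiry idea only; the token format and function names are assumptions, and a production system would more likely rely on an established standard or a cloud provider's workload identity.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def issue_token(secret: bytes, service: str, ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Issue a signed token that names one service and expires after ttl_seconds."""
    issued = time.time() if now is None else now
    claims = {"sub": service, "exp": issued + ttl_seconds}
    body = base64.urlsafe_b64encode(json.dumps(claims).encode())
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return f"{body.decode()}.{signature}"


def verify_token(secret: bytes, token: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the signature is valid and the token is unexpired."""
    current = time.time() if now is None else now
    body, _, signature = token.rpartition(".")
    expected = hmac.new(secret, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return None  # tampered, or signed with a different secret
    claims = json.loads(base64.urlsafe_b64decode(body))
    return claims if current < claims["exp"] else None
```

With a five-minute lifetime, a leaked token stops working quickly without anyone having to rotate it, which is the main advantage over a static secret.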

For organizations dealing with multi-cloud or hybrid rollout decisions, this guide on a cloud strategy consultant is useful because cloud security architecture usually fails at the strategy layer before it fails in code.

What works in practice

The most effective identity model is boring by design. It minimizes exceptions, removes local user stores where possible, and makes privileged actions visible.

A dedicated development team can help operationalize that consistency across cloud services, internal admin tooling, APIs, and deployment environments. The hard part is rarely implementing login. The hard part is maintaining one coherent authorization model as the platform grows.

Rule of thumb: If two environments answer the same access request differently for no deliberate reason, the architecture will eventually drift into a security problem.

Proactive Defense Through Threat Modeling and Secure AI

Reactive security is expensive because it starts after the architecture has already made risky assumptions. Threat modeling is cheaper because it challenges those assumptions while change is still easy.

In healthcare systems, threat modeling should happen before major workflow decisions solidify. Not after release planning. Not after pen testing. At design time.

Threat modeling makes hidden risk visible

A useful workshop does not need to be academic. It needs to answer plain questions:

- What assets matter most
- Where trust boundaries exist
- Which actors can abuse the system
- Which controls fail open
- Which third parties inherit risk

Methods like STRIDE are valuable because they force teams to consider spoofing, tampering, repudiation, information disclosure, denial of service, and elevation of privilege in a structured way. These methods expose product decisions that create security debt before a single line of implementation cements them.

A common example is an internal admin console. Teams often model it as “staff-only” and move on. Threat modeling usually reveals the essential questions: Which staff, from what devices, through which network paths, with what audit trail, and with what emergency override process?

AI systems add a second security plane

Healthcare AI introduces risks beyond ordinary web application concerns. The model, the prompt path, the retrieval path, and the inference logs all become part of the protected architecture.

Secure AI design usually requires controls such as:

- Trusted data pipelines: Training and inference data should be curated, access-controlled, and reviewed for PHI handling.
- Inference boundary controls: Prompt content, retrieved records, and generated output all need policy checks.
- Model access governance: Not every internal user or service should call every model endpoint.
- Output review points: Clinical workflows cannot treat model output as safe or complete.
- Auditability: Teams need traceability for prompts, retrieval logic, user actions, and downstream decisions.
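A small wrapper can show how several of these controls meet at the inference boundary. Everything named below is hypothetical: the `model` callable stands in for any model client, the single SSN-style pattern is only one example of a policy check, and the allowed role set is an assumption.

```python
import re
from typing import Callable

# One illustrative pattern (US SSN-style numbers); real policies would cover far more.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def guarded_inference(
    prompt: str,
    caller_role: str,
    model: Callable[[str], str],
    allowed_roles: frozenset = frozenset({"clinician"}),
) -> dict:
    """Apply access, input, and output checks around a single model call."""
    if caller_role not in allowed_roles:
        raise PermissionError(f"role {caller_role!r} may not call this model")
    safe_prompt = SSN_PATTERN.sub("[SSN]", prompt)  # policy check on input
    raw_output = model(safe_prompt)
    return {
        "prompt_sent": safe_prompt,  # what an audit trail would record
        "output": SSN_PATTERN.sub("[SSN]", raw_output),  # policy check on output
        "needs_human_review": True,  # output is never treated as final
    }
```

The wrapper records what was actually sent to the model, not what the user typed, so the audit trail does not reintroduce the data the policy check removed.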

As explored in our guide to healthcare AI, the safest healthcare AI architectures behave like controlled clinical systems, not generic chatbot wrappers.

What does not work

The weakest pattern is bolting AI onto an existing product with no new trust model. That usually creates hidden PHI exposure in prompts, vendor routing, or inference telemetry.

The stronger pattern is to treat AI as a governed subsystem with explicit data contracts, scoped access, and human review where decisions affect care or sensitive operations.

Organizations investing in AI development services should insist on that level of discipline. The same standard applies when planning AI for your business. If the AI layer is powerful enough to touch sensitive workflows, it is powerful enough to introduce new failure modes.

Practical stance: Do not ask only whether the model is accurate. Ask where it gets data, where prompts are stored, who can invoke it, and how unsafe output is contained.

Operationalizing Security with DevSecOps

Security fails in healthcare when it depends on heroics. One careful architect, one diligent reviewer, or one security champion cannot protect a platform that ships continuously. The control plane has to live inside delivery.

That is what DevSecOps means in practice. Security checks run in the same pipelines that compile, test, package, and deploy software.

A practical pipeline shape

A workable healthcare pipeline usually combines several control types instead of relying on a single scanner.

- SAST for code flaws: Catch insecure patterns in application logic before runtime.
- SCA for dependencies: Surface risk from open-source libraries and transitive packages.
- Container and IaC scanning: Review images, Kubernetes manifests, and Terraform or similar configuration for insecure defaults.
- DAST against running builds: Test application behavior, routing, and exposure in deployed environments.
- Secret detection: Prevent tokens, keys, and credentials from entering source control or artifacts.
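Of these controls, secret detection is the easiest to picture in a few lines. The sketch below checks text for two well-known credential shapes. It is illustrative only; the pattern list is deliberately tiny, and a real pipeline would normally use a dedicated scanner rather than hand-written rules.

```python
import re

# Two illustrative patterns; real scanners ship with many more.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

A pipeline step could fail the build whenever `find_secrets` returns a non-empty list for a changed file, which keeps the check in the delivery path instead of in a later review.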

This approach fits naturally into product engineering services because it treats security as an engineering system, not a separate approval queue.

API security is where many programs stall

APIs are often the weakest link in healthcare software, yet there is limited guidance on the operational cost or risk quantification needed to secure them, and much of the advice assumes mature IAM already exists.

That gap is real. Many teams know they need API gateways, authentication, and request validation. Fewer teams have decided:

- who owns API inventory,
- who reviews schema changes,
- who approves third-party integrations,
- who monitors token misuse,
- and who blocks unsafe endpoints before release.

The culture piece matters

Tools alone do not create DevSecOps. Teams need working agreements.

A healthy model often includes:

| Team role | Security responsibility |
|---|---|
| Product owner | Defines sensitive workflows and data exposure boundaries |
| Engineers | Build secure defaults and remediate findings in sprint flow |
| Platform team | Maintains CI/CD controls, secrets handling, and environment policy |
| Security team | Sets standards, reviews exceptions, and validates critical risk areas |

Bridge Global can support this through AI-assisted delivery workflows that include risk detection during build and release, alongside broader cyber compliance solutions for healthcare environments.

The important point is simpler than the tooling stack. If security findings appear only after release candidates are frozen, the architecture is already working against the team.

Ensuring Compliance and Safe Interoperability

Compliance in healthcare is often treated as a documentation problem. It is really an architectural outcome. If a platform handles identity well, constrains data flow, logs the right events, and isolates sensitive services, many compliance requirements become demonstrable rather than aspirational.

Compliance should map to engineering controls

HIPAA, GDPR, and enterprise procurement reviews all push teams toward the same core patterns:

- Access controls that enforce least privilege
- Audit trails that support investigation and accountability
- Encryption and data protection across storage and transit
- Risk analysis and reviewability for changing systems
- Vendor governance where third parties touch protected workflows

The engineering question is not “are we compliant?” It is “which architectural controls prove we are operating safely?”

As we explored in our guide to HIPAA-compliant software development, teams usually struggle most when governance and architecture evolve separately.

Interoperability expands both value and exposure

FHIR-based integration is now central to many healthcare platforms because it improves structured exchange across systems. But interoperability also widens the threat surface.

Secure patterns for interoperability generally include:

- OAuth 2.0 and SMART on FHIR aligned authorization
- Scoped tokens with narrow data permissions
- Rate limiting and monitoring at integration boundaries
- Strict validation of inbound and outbound resource handling
- Thorough audit logging around API-mediated data access
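Scoped tokens are easier to reason about with a concrete check. The sketch below assumes SMART on FHIR v1-style scope strings such as `patient/Observation.read`; the `scope_allows` helper is hypothetical, and the newer v2 scope syntax is not handled.

```python
def scope_allows(granted_scopes: str, context: str,
                 resource_type: str, action: str) -> bool:
    """Check a space-separated scope string in SMART v1 style.

    Example scope: 'patient/Observation.read'. Wildcards ('*') are accepted
    for the resource type and for the action.
    """
    for scope in granted_scopes.split():
        if "/" not in scope or "." not in scope:
            continue  # skip non-resource scopes such as 'openid' or 'launch/patient'
        scope_context, rest = scope.split("/", 1)
        scope_resource, scope_action = rest.rsplit(".", 1)
        if (
            scope_context == context
            and scope_resource in (resource_type, "*")
            and scope_action in (action, "*")
        ):
            return True
    return False  # nothing granted this resource and action
```

For example, a token carrying only `patient/Observation.read` would pass a read of an Observation and fail a write, or a read of any other resource type. Narrow scopes like this limit what a leaked or misused token can reach.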

A useful reminder comes from infrastructure thinking. Work on designing the N3 NHS Network highlights how foundational network design shapes later resilience. The same principle applies to healthcare interoperability. If connectivity is added without trust boundaries, secure exchange becomes difficult to enforce.

Logs must be useful and protected

Healthcare teams need logs for forensics, compliance evidence, operational troubleshooting, and abnormal access detection. But logs can become their own liability if they capture too much sensitive context or if access to them is too broad.

A safer model is to log events, identifiers, decisions, and metadata needed for audit, while keeping raw PHI out of observability systems unless there is a tightly controlled reason.
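One way to enforce that model is an allowlist: the audit event accepts only named metadata fields and rejects anything else. The field names and the `audit_event` function below are assumptions for illustration.

```python
import json
from datetime import datetime, timezone
from typing import Optional

# Hypothetical allowlist: identifiers, decisions, and metadata only.
ALLOWED_FIELDS = ("actor_id", "action", "resource_id", "decision")


def audit_event(now: Optional[datetime] = None, **fields: str) -> str:
    """Build a JSON audit event, refusing any field outside the allowlist."""
    unexpected = sorted(set(fields) - set(ALLOWED_FIELDS))
    if unexpected:
        # Fail loudly so raw PHI cannot slip into logs through a new field.
        raise ValueError(f"fields not allowed in audit events: {unexpected}")
    timestamp = now or datetime.now(timezone.utc)
    event = {"timestamp": timestamp.isoformat(), **fields}
    return json.dumps(event, sort_keys=True)
```

Rejecting unknown fields is stricter than silently dropping them, but it surfaces the mistake during development instead of during an incident review.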

This is also where digital transformation consulting and an AI transformation framework should stay grounded in operational realities. Transformation does not reduce compliance pressure. It multiplies the need for disciplined system design.

Conclusion: Your Architectural Path Forward

Secure healthcare software architecture is not something teams finish. It is something they maintain through design discipline, validation, and controlled change.

The essential requirements are clear. Build on Zero Trust, apply Defense in Depth, and practice Security by Design before delivery starts. Protect the full PHI lifecycle, not just the database. Make identity contextual. Treat AI as a governed subsystem. Embed security into CI/CD so controls scale with release velocity.

Organizations that want a practical route forward should compare architecture decisions against real delivery constraints, internal capability, and compliance exposure, then review proven client cases before choosing an implementation path.

Frequently Asked Questions

What is the biggest mistake in secure healthcare software architecture?

The biggest mistake is protecting storage but ignoring the full data path. Many teams encrypt the database and feel finished, while PHI still appears in logs, error traces, analytics tools, support exports, or third-party SDKs. In practice, those secondary systems create some of the most avoidable exposure.

Is RBAC enough for healthcare applications?

No. RBAC is necessary, but it is usually not sufficient on its own. Healthcare environments need contextual access decisions based on device, workflow, location, sensitivity, and current risk posture. Roles answer who a user is supposed to be. Context helps answer whether a specific request should be allowed now.

When should threat modeling happen?

Threat modeling should happen before the implementation of major workflows, integrations, and admin capabilities. It is most effective during discovery, solution design, and architecture review. If teams wait until testing, they often find design flaws that require expensive rework.

How should teams think about cloud security in healthcare?

They should think in terms of shared responsibility and policy consistency. Cloud providers can supply secure building blocks, but application teams still own identity rules, data handling, secrets management, logging design, and environment isolation. Hybrid environments add another challenge because legacy and cloud systems often enforce trust differently.

What makes healthcare API security difficult?

The difficult part is not only authentication. The primary challenge is operating API security across multiple vendors, legacy systems, internal services, changing schemas, and uneven IAM maturity. Teams need governance for inventory, versioning, review, monitoring, and deprecation, not just an API gateway.

How do AI features change healthcare architecture?

AI features add new data flows, new logging risks, new vendor questions, and new review requirements. Prompts, retrieval pipelines, model endpoints, and outputs all become part of the secure architecture. Teams should treat AI components as controlled subsystems with explicit data boundaries and auditability.

What should a healthcare architecture review include?

A useful review should cover data classification, trust boundaries, identity and authorization patterns, third-party integrations, logging design, API exposure, environment segregation, incident response readiness, and deletion or retention paths for PHI. It should also test whether the documented design matches how the platform runs.

If your organization is planning a new healthcare platform, modernizing a legacy product, or adding AI to a sensitive clinical workflow, Bridge Global can help assess the architecture, identify weak points in the data lifecycle, and align engineering decisions with security, scalability, and compliance requirements.