Mastering Healthcare Cloud Platform Development

A familiar healthcare cloud project starts like this. The leadership team wants faster releases, better analytics, remote care support, and lower infrastructure drag. Legal wants tighter controls. Security wants proof, not promises. Clinical stakeholders want workflows that won’t break when the EHR sends malformed data at 2 a.m.

Then the hard part shows up. The cloud platform isn’t just an application stack. It’s a compliance boundary, an interoperability layer, an operations model, and a funding decision all at once.

That pressure isn’t temporary. The global healthcare cloud computing market was valued at approximately $51 billion in 2024 and is projected to reach $156.4 billion by 2033 or $190.4 billion by 2035, depending on the forecast, which reflects how strongly healthcare organizations are moving toward scalable and secure platforms (IMARC Group). For teams evaluating platform options, especially beyond the largest enterprise vendors, a practical read on mid-market healthcare data platforms can help frame what “fit for purpose” looks like.

The mistake I see most often is treating healthcare cloud platform development like a standard SaaS build with extra paperwork. It isn’t. If you don’t define data ownership, integration boundaries, audit expectations, and operating controls up front, the backlog fills with rework before the first production release.

Introduction to Healthcare Cloud Platform Development

Healthcare cloud platform development is the disciplined work of building systems that can store, process, exchange, and govern sensitive health data without slowing down product delivery. That sounds straightforward until you put real constraints on it.

A startup usually needs speed, proof of value, and a path to enterprise readiness. A hospital group needs resilience, vendor alignment, and safer change management. Both need the same thing at the core. They need architecture that respects patient data and operational reality from day one.

What makes healthcare cloud projects different

Most software teams can tolerate some ambiguity early on. Healthcare teams can’t tolerate much of it around data, identity, and workflow boundaries.

Three realities shape the work:

-

Protected data changes design choices: You don’t casually centralize logs, reuse shared datasets, or allow broad developer access when PHI may flow through the stack.

-

Clinical systems weren’t built for your roadmap: EHRs, payer systems, lab feeds, imaging stores, and care management tools all impose their own formats, timing, and failure modes.

-

Regulation affects delivery mechanics: Release approvals, audit evidence, retention rules, encryption choices, and access reviews shape how teams ship.

Healthcare cloud platform development succeeds when compliance becomes an engineering input, not a post-build review step.

Where teams usually get stuck

The visible problem is often “we need a cloud platform.” The actual problem is usually one of these:

-

An aging integration layer: HL7 feeds are brittle, undocumented, or tied to one site-specific implementation.

-

A data platform with no governance: Teams can ingest data, but they can’t prove lineage, access rationale, or retention behavior.

-

An AI roadmap without operational controls: Product wants intelligent features before the platform can support validation, monitoring, and rollback.

That’s why early decisions about your healthtech software development partner and foundational cloud services matter. In healthcare, the team model and delivery discipline are part of the system design.

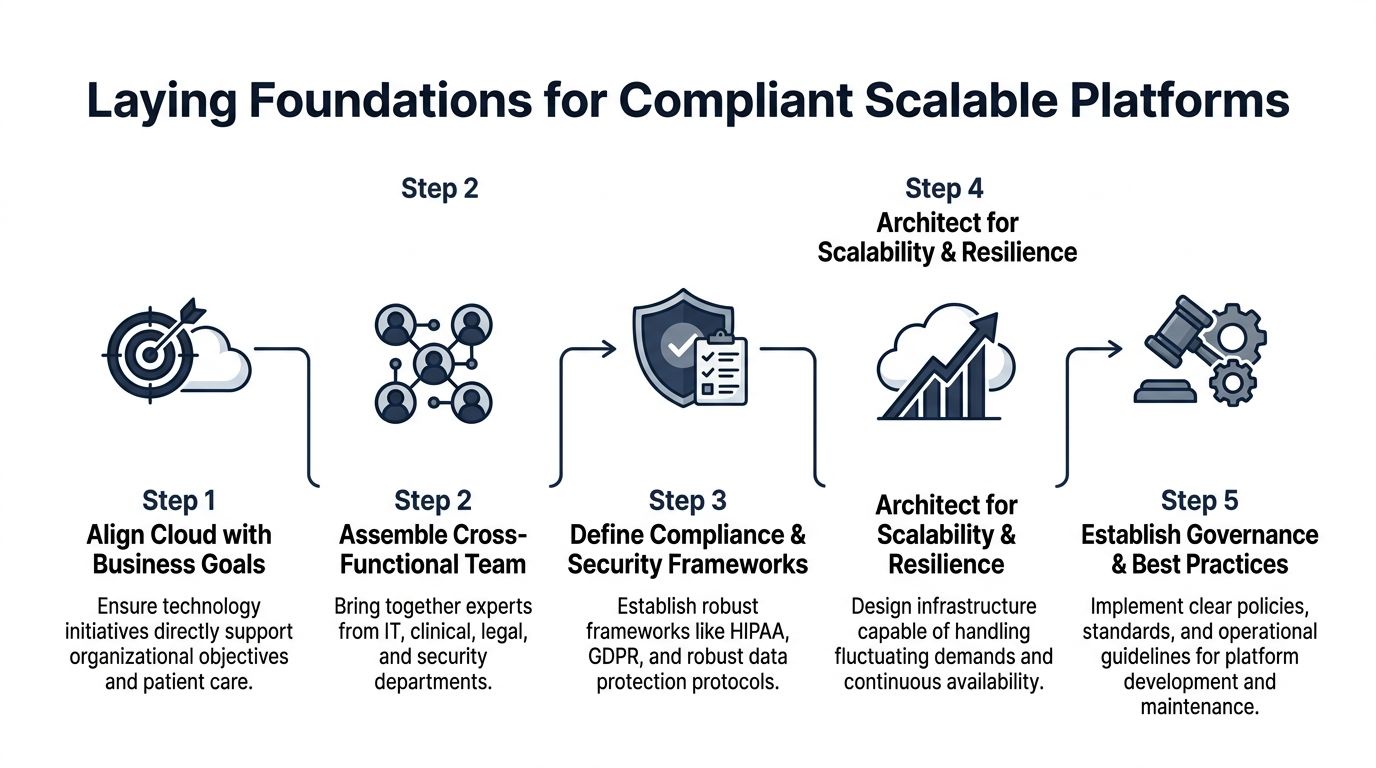

Laying Foundations for Compliant Scalable Platforms

Most failed healthcare cloud initiatives don’t fail because Kubernetes was the wrong choice or because one cloud service underperformed. They fail earlier, when nobody clearly defines who owns risk, which controls are mandatory, and what “done” means for a compliant release.

Healthcare organizations have already moved well beyond experimentation. More than 83% of healthcare organizations use cloud services, according to market research summarizing recent HIMSS survey findings (Precedence Research). The difference now is execution quality.

Start with operating intent, not architecture diagrams

Before the first sprint, pin down the business and operational intent of the platform.

Use a short working brief that answers:

-

Who is the regulated entity

-

What data types will enter the platform

-

Which workflows need write-back into systems of record

-

What uptime, auditability, and incident-response expectations exist

-

Which teams approve production changes

This sounds administrative. It isn’t. These answers determine tenant boundaries, deployment environments, key management, test data handling, and even whether a managed service is acceptable.

Build a cross-functional decision group

Healthcare cloud work gets dangerous when engineering makes compliance assumptions, and compliance makes architecture assumptions.

The core group should include:

-

Platform engineering: Owns infrastructure patterns, CI/CD, observability, and runtime controls.

-

Security and privacy: Defines guardrails, evidence requirements, and incident workflows.

-

Clinical or operational stakeholders: Validate what the platform can and can’t disrupt.

-

Integration owners: Interpret EHR, payer, lab, or device-side constraints.

-

Legal and procurement: Review BAAs, vendor terms, and cross-border implications.

For some organizations, that group exists internally. Others need a dedicated development team to extend in-house capability without fragmenting ownership.

Practical rule: If a platform decision affects PHI flow, data retention, third-party access, or write-back into a care workflow, it needs named approval ownership before implementation.

Scope compliance as a build activity

Compliance in healthcare cloud platform development isn’t a final audit milestone. It’s a series of design constraints expressed in technical form.

Map requirements into concrete controls:

| Area | Questions to answer early | Typical build impact |

|---|---|---|

| Access control | Who can see PHI, metadata, logs, and support artifacts | RBAC, least privilege, break-glass workflows |

| Data protection | Where is sensitive data stored, encrypted, and replicated | KMS strategy, tokenization, storage classes |

| Vendor accountability | Which providers handle regulated data | BAAs, DPA review, subprocessors inventory |

| Auditability | What must be provable after release | Immutable logs, change records, approval trails |

Teams that also sell into healthcare often need broader assurance beyond HIPAA. A practical primer on SOC 2 for Healthcare Companies is useful when the platform must satisfy both healthcare buyers and security-conscious enterprise customers.

If you need a more implementation-focused reference, Bridge Global's guide to HIPAA-compliant software development covers the kinds of engineering decisions that create or reduce downstream audit friction.

Two checklists that actually help

Enterprise context

-

Inventory the systems of record: EHR, imaging, payer, CRM, and warehouse platforms.

-

Define control inheritance: Know which cloud-native controls you can inherit and which you must implement.

-

Separate build and run ownership: The team that ships the platform isn't always the team that supports it.

-

Agree evidence collection early: Auditors and customers will ask for proof of process, not just policy PDFs.

Startup context

-

Limit scope hard: One care workflow and one integration path beats broad but shallow coverage.

-

Avoid premature multi-cloud: Portability matters, but operating complexity can outpace team maturity.

-

Use synthetic data aggressively in early environments: It reduces access friction and review overhead.

-

Treat compliance debt like product debt: Track it openly. Don't bury it in ops.

Organizations that get this foundation right move faster later because architecture, contracts, controls, and delivery habits stop fighting each other.

Designing Secure Cloud Architecture for Healthcare

Secure architecture in healthcare isn't about picking the most restrictive pattern. It's about placing controls where data moves, where identities fail, and where integrations break.

The technical design should follow the workflow, not vendor marketing.

Choose isolation based on risk, not habit

Single-tenant and shared infrastructure both have a place in healthcare cloud platform development. The right answer depends on data sensitivity, customer expectations, support model, and release cadence.

A practical comparison looks like this:

| Pattern | Works best when | Trade-off |

|---|---|---|

| Single-tenant deployment | Large enterprise customers require strict environment separation | Higher operational overhead and more release coordination |

| Shared control plane with isolated data plane | You need scale without exposing tenant data paths | More careful access design and stronger runtime policy enforcement |

| Hybrid architecture | Legacy systems or local processing must remain close to source systems | More integration complexity and more operational runbooks |

As we explored in our guide on healthcare security architecture, the wrong tenancy choice creates long-term friction. Teams either overbuild isolation that they can't afford to operate, or they underbuild it and end up redesigning after the first enterprise security review.

Put controls in the path of failure

A secure architecture needs controls at the points where healthcare workloads commonly go wrong.

Focus on these layers:

-

Identity and access: Short-lived credentials, strict role boundaries, and separate operational roles for support, engineering, and automation.

-

Network boundaries: Segment workloads by trust zone. Don't let internal convenience flatten the network.

-

Encryption and key management: Use managed key services where possible, but define ownership and rotation procedures before production.

-

Data lifecycle controls: Retention, archival, deletion requests, and dataset replication need explicit policy treatment.

-

Auditability: Every privileged action, deployment, and data-access path should leave usable evidence.

For teams building regulated products through custom software development, the most important architectural decision often isn't the compute layer. It's whether the platform can prove who accessed what, when, and under which approved workflow.

Why integration complexity drives architectural choices

The most expensive surprise in healthcare cloud platform development is usually EHR integration. Projects often underestimate that work by budgeting only 2 to 4 weeks and $15K, then run into Epic FHIR API limitations, HL7v2 site-specific variation, 3-month certification cycles, and sandbox-to-production mismatches that make write-back much harder than read-only integration (The Momentum).

That has direct architectural consequences.

If your platform talks to Epic, Cerner, or older HL7-based systems, design for these realities:

-

Read and write paths are different systems: Read-only APIs may appear stable while write-back requires separate review, validation, and operational fallback.

-

A middleware layer isn't optional in many environments: Tools such as Mirth Connect help normalize HL7 and FHIR differences before they spread into application code.

-

Sandboxes lie by omission: Test environments often don't expose the concurrency, auth edge cases, or payload oddities you'll hit in production.

-

Integration failures need first-class observability: You need structured logs, retry policies, dead-letter handling, and support dashboards.

Most architecture reviews spend too much time on compute topology and not enough time on integration failure behavior.

Architecture patterns that hold up under scrutiny

When teams ask what works, the answer is usually a blend rather than a pure pattern.

Microservices for bounded healthcare domains

Microservices work well when domains are separate. Scheduling, consent, provider directory, document exchange, and patient engagement often benefit from independent services.

They fail when teams split too early and move business logic into a maze of APIs nobody owns.

Serverless for narrow event-driven tasks

Serverless is strong for ingestion triggers, document processing, notifications, and controlled transformation jobs. It becomes harder to manage when long-running orchestration, complex state, or vendor callback variability dominate the workflow.

Managed services where evidence is acceptable

Managed databases, secret stores, queues, and monitoring services usually reduces operational risk if your compliance team accepts the control model and evidence trail. That's often a better trade than self-managing critical components just for perceived control.

The best healthcare platforms aren't architected to look modern. They're architected, so support teams can explain failures, security teams can validate controls, and product teams can keep shipping safely through cloud services.

Building Interoperability with FHIR and Legacy Systems

Interoperability is where otherwise solid healthcare platforms start leaking time, money, and trust. The issue isn't just standards adoption. It's the gap between the standard on paper and the implementation you find in each environment.

In multi-vendor healthcare cloud platform development, interoperability projects can fail at rates of 70% to 80% because of mismatched APIs and standards across legacy EHRs and cloud systems (Torry Harris).

FHIR works best when you narrow the contract

FHIR is valuable. It isn't magic.

Teams get traction when they define a constrained implementation profile for their use case instead of assuming broad compatibility. For example, a referral workflow may only need patient, practitioner, encounter, allergy, and observation resources. That's a much safer starting point than opening the full resource surface area.

A practical workflow usually looks like this:

-

Define the minimum resource set

-

Choose profiles that your target systems support

-

Separate read APIs from write-back operations

-

Create validation rules for every inbound and outbound payload

-

Log all transformation exceptions with payload context

The importance of that last step is often underestimated. If support can't see why a transformation failed, engineers end up reproducing clinical edge cases manually.

Legacy adaptation needs its own layer

HL7v2, older custom APIs, flat-file exports, and site-specific mappings don't belong inside your product domain logic.

Use a dedicated normalization layer for:

-

Message translation: Convert HL7v2 or custom payloads into stable internal models.

-

Terminology alignment: Resolve local code variations before downstream services consume data.

-

Retry handling: Keep transport and transformation retries out of product services.

-

Operational visibility: Expose queues, failed messages, and reconciliation status to support teams.

Mirth Connect is often the pragmatic choice when teams need flexible translation across HL7 and FHIR patterns. The main design principle is more important than the tool. Normalize once. Reuse everywhere.

If every application service contains its own EHR mapping rules, interoperability debt spreads faster than feature delivery.

SMART on FHIR is only part of the answer

SMART on FHIR helps with delegated access and app launch context. It doesn't remove the need for environment-specific testing, consent-aware data handling, or provider workflow validation.

Watch for these recurring issues:

-

Token behavior differs across environments

-

Scopes that work for read access don't guarantee write-back

-

Launch context assumptions break when embedded inside provider workflows

-

Resource completeness varies more than teams expect

That variability is why a staged rollout matters. Start with read-only use cases, validate resource quality, then move to transaction paths that can affect care operations.

A practical implementation sequence

| Stage | Focus | What to prove |

|---|---|---|

| Stage 1 | Read-only patient context | Auth flow, resource retrieval, payload consistency |

| Stage 2 | Normalization and mapping | Stable internal model across source systems |

| Stage 3 | Workflow transactions | Error handling, approval paths, rollback behavior |

| Stage 4 | Production hardening | Monitoring, reconciliation, operational support |

For teams designing APIs, adapters, and long-lived integration layers, product engineering services matter because interoperability is as much a product discipline as interface coding. A useful companion read is this guide on healthcare interoperability best practices, especially when you're planning how standards and legacy constraints meet in one platform.

Integrating AI, ML, DevOps, Testing, and Monitoring

Adding AI to a healthcare platform doesn't start with a model. It starts with data contracts, release discipline, and operational safeguards.

That's why the strongest AI-enabled healthcare cloud platform development programs treat ML as part of the software supply chain. Data ingestion, feature generation, model training, validation, deployment, and monitoring all need the same rigor as the application code around them.

Build the pipeline around traceability

A workable architecture usually has these layers:

-

Ingestion layer: Pulls from EHR, claims, device, scheduling, or patient engagement systems.

-

Curated data layer: Produces governed datasets with stable schemas and access controls.

-

Feature preparation layer: Creates reusable signals for risk scoring, prioritization, or anomaly detection.

-

Model pipeline: Handles training, evaluation, approval, packaging, and deployment.

-

Serving and monitoring layer: Delivers predictions, logs inputs and outputs, and tracks model behavior over time.

The common failure is skipping the curated middle. Teams feed inconsistent source data directly into experiments, get a promising prototype, and then can't reproduce or govern it.

What DevOps changes in a regulated AI context

Standard CI/CD isn't enough when models influence care operations, scheduling decisions, utilization workflows, or patient communications.

You need release gates that cover more than code quality:

Application delivery controls

-

Environment separation: Keep dev, staging, and production credentials, data access, and deployment rights strictly separated.

-

Policy checks in pipelines: Validate infrastructure policy, secret usage, and dependency hygiene before deployment.

-

Rollback readiness: Every release should support quick reversal without corrupting downstream state.

ML-specific controls

-

Version everything: Training data snapshot, feature logic, model artifact, prompt or inference configuration, and evaluation record.

-

Test beyond accuracy: Check for missing-field behavior, drift sensitivity, and failure handling when upstream data changes.

-

Review business impact: Clinical, compliance, and product owners should all understand where the model influences decisions.

A good healthcare AI workflow isn't flashy. It's inspectable.

A concrete delivery pattern that works

Suppose a care management team wants to prioritize patients for follow-up. The wrong move is to build a model straight from raw exports and expose scores in production dashboards.

A safer pattern is:

-

Ingest scheduling, visit, and observation data into a governed lake.

-

Create validated feature sets with named ownership.

-

Train a model in a controlled environment.

-

Publish scores to an internal review queue first.

-

Monitor false positives, missing context, and operational usability.

-

Promote into workflow only after support and governance teams are ready.

That same pattern applies to document triage, anomaly detection, and conversational assistance.

For teams exploring patient-facing or staff-facing assistants, this article on AI chatbot use in healthcare is useful because conversational systems introduce many of the same governance and monitoring concerns.

Monitoring needs two lenses

Traditional platform monitoring and AI monitoring serve different purposes. You need both.

| Monitoring type | Questions it answers | Typical signals |

|---|---|---|

| Platform monitoring | Is the system available and responsive | Latency, queue depth, error rate, service health |

| Data monitoring | Is source data arriving correctly and completely | Schema changes, null spikes, delayed feeds |

| Model monitoring | Is the model behaving as expected | Drift, confidence patterns, output distribution |

| Operational monitoring | Are users acting on outputs safely | Escalations, overrides, support tickets |

A model can be statistically stable and still fail operationally if staff don't trust the output or can't trace how it was produced.

Team structure matters more than tool choice

I've seen teams spend months comparing feature stores, orchestrators, and vector stacks while leaving ownership unclear. In practice, AI-enabled healthcare platforms move faster when four roles are explicit:

-

Data engineering owns dataset reliability

-

ML engineering owns training and deployment mechanics

-

Application engineering owns workflow integration

-

Governance stakeholders own approval boundaries

When those lines blur, incidents turn into meetings. When they're clear, teams can use AI development services, an AI transformation framework, and broader digital transformation consulting in a focused way, tied to actual operating constraints instead of innovation theater.

The same goes for broader AI for your business. In healthcare, the question isn't whether AI can be added. It's whether the platform can support it responsibly.

Managing Migration Cost Optimization and Governance

Most healthcare migrations don't fail because the destination cloud is a poor fit. They fail because the migration plan ignores governance, dependency sequencing, and runtime accountability.

In healthcare platforms, cloud migration fails 35% to 50% of the time without strategic planning and governance. Weak roadmaps also lead to 20% to 40% budget overruns, and compliance breaches occur in 25% of cases in the same context (Accountable HQ).

That isn't a reason to delay migration. It's a reason to stop treating migration as a technical move alone.

The right migration goal is control, not lift and shift

A clean migration plan starts by classifying workloads and deciding what each one deserves. Some should move quickly. Some should be refactored. Some should stay put until the surrounding controls are ready.

Three decisions shape cost and risk more than anything else:

-

What moves first

-

Which workloads keep their current shape

-

Where governance is enforced automatically

If you migrate your most entangled transactional workload first, you increase project risk immediately. If you start with analytics, batch reporting, or contained services, the team learns the operating model with less blast radius.

A practical governance playbook

Landing zone before migration wave

Build the cloud landing zone first. That includes identity boundaries, network segmentation, key management, tagging rules, budget visibility, logging strategy, and incident routing.

Without that baseline, each migrated workload invents its own control surface.

FinOps needs engineering input

Cost optimization in healthcare cloud platform development doesn't come from one dashboard review at month-end. It comes from the architectural discipline:

-

Choose storage classes deliberately

-

Shut down idle non-production environments when policy allows

-

Right-size compute after real workload observation

-

Separate bursty jobs from always-on services

-

Tag everything that carries operational or compliance significance

Policy as code beats manual review

Manual checks fail under delivery pressure. Policy as code doesn't solve everything, but it consistently catches mistakes before production.

Good candidates include:

-

Encryption requirements

-

Public exposure restrictions

-

Approved regions

-

Logging and retention rules

-

Mandatory tagging

-

Identity and role constraints

A strong cyber compliance solutions capability can help turn those controls into repeatable build-time and deploy-time checks instead of one-off review comments.

Governance should reduce decision fatigue. If engineers have to re-interpret core security and compliance rules every sprint, the platform isn't governed yet.

Enterprise and startup migration strategies differ

Enterprise organizations

Enterprises usually need phased migration with parallel runs, tighter approval workflows, and broader dependency mapping. The cost of disruption is high, but they often have the scale to invest in stronger platform guardrails from the start.

A dedicated development team can help when internal platform, integration, and security teams are overloaded, but governance still needs central control.

Startups and scale-ups

Startups need to avoid over-architecting. Their biggest risk is building a migration target that's too expensive to operate before product-market fit or enterprise traction is clear.

Good startup migration discipline usually means:

-

One cloud provider first

-

Minimal but real compliance controls

-

Sharp environment boundaries

-

Measured refactoring only where it enables delivery or trust

Cost Savings by Migration Strategy

| Strategy | Cost Reduction | Migration Time |

|---|---|---|

| Rehost | Qualitative savings possible, but often limited without follow-up optimization | Typically faster than deeper redesign |

| Replatform | Better balance of operational improvement and migration speed | Moderate |

| Refactor | Can unlock the strongest long-term efficiency and governance benefits | Slower upfront |

| Hybrid retention | Useful when some systems shouldn’t move yet | Depends on dependency complexity |

That table is deliberately qualitative because real outcomes depend on workload shape, vendor contracts, data movement patterns, and compliance obligations. Teams get into trouble when they assume the migration strategy itself guarantees savings.

A good companion read on control expectations is Bridge Global’s guide to SOC 2 compliance requirements. Even when your primary driver is HIPAA or GDPR, broader control discipline often improves migration quality.

Conclusion and Next Steps with Bridge Global

Healthcare cloud platform development goes off course in predictable ways. Teams under-scope compliance. They over-trust standards compatibility. They delay governance until after the first release. They treat AI as a feature layer instead of an operational system.

The teams that do well make a different set of choices. They define regulated boundaries early. They isolate integration complexity instead of letting it spread. They design architecture around supportability and evidence. They treat migration, monitoring, and model operations as part of one platform discipline.

That approach works for both enterprises and startups. The difference is scope, not principle.

If you’re evaluating your next move, start with three questions:

-

Which workflows justify a cloud platform now

-

Where will compliance and interoperability create delivery drag

-

What operating model can your team realistically support over time

Those answers usually reveal whether you need an MVP path, a modernization roadmap, or a phased platform rebuild.

FAQ

What is healthcare cloud platform development?

Healthcare cloud platform development is the design and implementation of cloud-based systems that handle healthcare data, workflows, integrations, and analytics under strict security and compliance requirements. It usually includes identity controls, auditability, interoperability, monitoring, and operational governance.

Why do healthcare cloud projects get delayed?

The most common source of delay is integration. EHR connectivity, certification cycles, sandbox-to-production differences, and write-back complexity often take more time than teams expect.

Should healthcare organizations choose single-tenant or multi-tenant architecture?

It depends on risk profile, customer requirements, support model, and cost tolerance. Single-tenant environments can simplify certain enterprise conversations, while shared platforms with strong isolation can offer better scalability and operational efficiency.

Is FHIR enough to guarantee interoperability?

No. FHIR helps standardize exchange, but implementation differences, profile mismatches, data quality issues, and legacy dependencies still create significant integration work.

How should startups approach healthcare cloud platform development?

Startups should keep scope narrow, define a clear compliance boundary, avoid unnecessary platform complexity, and prove one workflow end-to-end before expanding.

Where does AI fit in a healthcare cloud platform?

AI fits best after data governance, validation, deployment controls, and monitoring are in place. Without those foundations, models are hard to trust, support, and audit.

Conclusion and Next Steps with Bridge Global

If your team is planning a healthcare platform build, modernization effort, or regulated AI rollout, the fastest path usually isn’t more tooling. It’s better framing, tighter architecture decisions, and delivery discipline that account for healthcare’s real constraints. A strong healthtech software development partner can help you move from uncertainty to an executable roadmap, especially when cloud, interoperability, AI, and compliance all need to advance together. Bridge Global also offers AI for your business for teams shaping practical AI use cases, and its client cases show how technology programs translate into real delivery outcomes across industries. If you need support across regulated product delivery, custom healthcare software development, broader product engineering services, or end-to-end platform execution, we can help turn strategy into a working system.

Bridge Global helps healthcare organizations, product teams, and founders build secure, scalable digital platforms with practical delivery discipline. If you’re looking for a partner to shape architecture, accelerate compliant engineering, and operationalize AI without adding unnecessary complexity, explore Bridge Global.