AI Solutions in Insurance: A 2026 Explainer Guide

The insurance industry doesn’t need another abstract conversation about AI. It needs execution.

The urgency is clear. The global AI in insurance market is valued at $18.64 billion in 2025 and is projected to reach $303.31 billion by 2035, a 32.3% CAGR from 2026 to 2035, according to InsightAce Analytic’s AI in insurance market report. That scale of investment signals a change in operating model, not a passing technology cycle.

For mid-market insurers, the question isn’t whether AI matters. It’s whether your organization can move from scattered pilots to reliable, governed, production-grade systems that improve underwriting, claims, fraud controls, and customer experience without creating new compliance risk.

That’s where many programs stall. Use cases are easy to list. Building AI into day-to-day insurance operations is harder. This guide focuses on that harder part, with a practical view of what works, what usually fails, and how an AI solutions partner can help insurers move from legacy workflows to scalable intelligent operations.

The AI Imperative in Modern Insurance

Only a small share of insurers have scaled AI across the enterprise, while investment intent keeps rising. That gap is the main challenge for mid-market carriers. The opportunity is no longer proving that AI can work. It is building the operating discipline to make it work inside underwriting, claims, service, and fraud programs.

AI is now part of core insurance execution. Underwriters need better risk signals from fragmented data. Claims leaders need faster triage and more consistent decisions. Service teams need to handle higher volumes without matching every increase with new headcount. Customers, meanwhile, compare your response times with digital experiences they get from banks and retailers, not with the insurer down the street.

Why timing matters now

The pressure is coming from two sides at once. Risk is changing faster, and operating costs are not getting easier to absorb. Carriers that still rely on manual handoffs, static rules, and disconnected systems will find it harder to protect margins.

As noted earlier, industry research shows a wide gap between AI interest and enterprise-scale deployment in insurance. Insurance executives generally understand where AI can help, but production adoption stalls when teams hit data quality problems, legacy system constraints, model governance requirements, and unclear ownership between business and IT.

I see the same pattern in mid-market programs. The issue is rarely a shortage of use cases. The issue is getting one priority workflow into production with measurable value, then creating the MLOps, controls, and delivery model to repeat that success across the policy lifecycle.

What separates progress from pilot theater

The insurers that get value from AI solutions usually make a few disciplined choices early.

They start with a workflow that already has economic friction. Examples include submission intake, document classification, first notice of loss, reserve support, and fraud scoring. A focused starting point makes ROI easier to prove.

They fit AI into the current estate instead of waiting for a full platform overhaul. Policy administration, claims, billing, and CRM systems remain the system of record. AI has to connect to them cleanly through APIs, rules layers, and governed data pipelines.

They treat MLOps as part of delivery, not as cleanup later. That includes versioning, monitoring drift, setting confidence thresholds, logging model decisions, and defining where human review is required.

They choose vendors with insurance operations in mind. Model accuracy matters, but so do audit trails, security controls, implementation effort, and the vendor’s ability to support integration with existing workflows. In fraud programs, for example, teams often review approaches such as AI fraud detection for insurance alongside their internal SIU processes and claims systems before selecting a path.

A practical rule applies here. If an AI initiative cannot stand up to policy system constraints, claims workflow exceptions, and compliance review, it is still an experiment.

Mid-market insurers have less room for long transformation cycles. They need early wins, but they also need a foundation that will hold up under audit and scale. That is why implementation sequencing matters as much as model choice. At Bridge Global, we typically advise clients to map the full lifecycle first, identify one or two high-friction processes, and build from there with clear governance, integration boundaries, and success metrics.

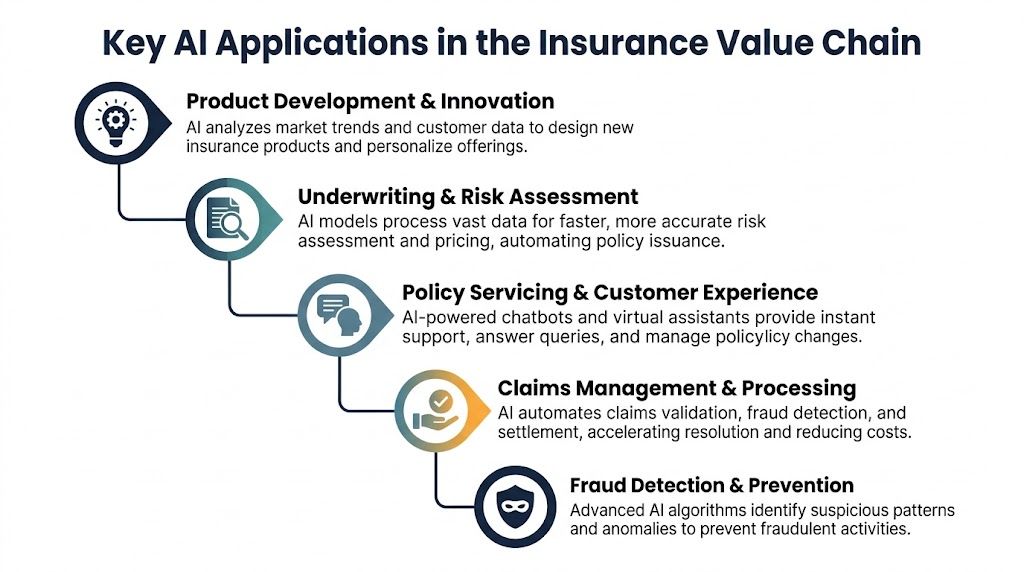

Key AI Applications in the Insurance Value Chain

Insurers that apply AI well usually start with one expensive constraint. Quote turnaround is too slow. Claims handling varies by adjuster. Fraud review arrives too late to prevent leakage. The strongest programs target those pressure points first, then extend across the value chain with clear workflow ownership, model oversight, and integration into core systems.

The value chain view matters because AI affects more than one function at a time. A better submission intake process changes underwriting capacity. Better claims triage changes indemnity control and service levels. Better fraud detection improves SIU productivity and lowers unnecessary manual review.

Underwriting and risk assessment

Underwriting is often the best starting point for mid-market insurers because the impact shows up in both speed and loss performance.

AI-based underwriting tools can process broker submissions, supporting documents, prior claims history, IoT data, and other external signals faster than a manual intake team. According to the Databricks analysis of AI in insurance, insurers using AI in underwriting have reduced processing times by 70%. The same analysis points to 36% efficiency gains and loss ratio reductions of 3 percentage points in workflows that improve submission interpretation, eligibility assessment, and capacity optimization.

In practice, the useful applications are specific:

Submission intake and extraction from emails, PDFs, ACORD forms, and attachments

Risk triage that prioritizes straightforward business for straight-through processing and sends borderline cases to underwriters

Decision support for pricing and eligibility when rules, historical outcomes, and confidence thresholds are well defined

The trade-off is clear. If source data is inconsistent or referral logic is poorly documented, model output becomes hard to trust. That is why experienced insurers automate the first pass, not the entire judgment process.
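As a rough illustration of automating the first pass only, the sketch below routes a submission by extraction confidence and data completeness. Everything in it is an assumption made for illustration: the `Submission` fields, the route names, and the two thresholds are hypothetical, and real values would come from pilot data and documented referral rules.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    STRAIGHT_THROUGH = "straight_through"
    UNDERWRITER_REVIEW = "underwriter_review"
    MANUAL_INTAKE = "manual_intake"


@dataclass
class Submission:
    submission_id: str
    extraction_confidence: float  # 0.0 to 1.0, from a hypothetical extraction model
    missing_fields: list[str]     # required fields the extraction could not fill
    within_appetite: bool         # result of documented eligibility rules


# Hypothetical thresholds, chosen only for illustration.
STP_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.70


def route_submission(sub: Submission) -> Route:
    """First-pass triage only: this never prices or declines a risk."""
    # Low-confidence extraction goes back to manual intake.
    if sub.extraction_confidence < REVIEW_THRESHOLD:
        return Route.MANUAL_INTAKE
    # Incomplete or borderline business always goes to an underwriter.
    if sub.missing_fields or not sub.within_appetite:
        return Route.UNDERWRITER_REVIEW
    # Only clean, high-confidence submissions are eligible for straight-through processing.
    if sub.extraction_confidence >= STP_THRESHOLD:
        return Route.STRAIGHT_THROUGH
    return Route.UNDERWRITER_REVIEW


if __name__ == "__main__":
    print(route_submission(Submission("S-001", 0.98, [], True)).value)
```

The design choice worth noting is that the default path is underwriter review. Straight-through processing has to be earned by passing every check, which keeps judgment with people when the data is uncertain.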

Policy servicing and customer experience

Service automation pays off when it removes avoidable work from contact centers and service teams while still giving policyholders a clear answer.

According to Vonage’s overview of AI in insurance, 81% of insurance companies identified customer satisfaction and retention as their top goal for AI adoption. That aligns with what I see in insurance programs that succeed. Faster service matters, but clarity matters just as much.

Useful service applications include policy detail lookups, billing explanations, document retrieval, address changes, FNOL status updates, and renewal reminders shaped by churn risk signals. The strongest implementations connect conversational interfaces to actual system actions. They do not stop at answering questions.

It also helps to compare AI-driven service models with adjacent operating approaches such as BPO in Insurance. That comparison is practical for executives deciding which tasks should be automated, which should be agent-assisted, and which should stay with licensed or experienced staff because of compliance, complexity, or customer sensitivity.

Claims automation and fraud detection

Claims is usually where insurers see operational friction most clearly. Cycle time stretches. Notes are inconsistent. Supporting documents arrive in mixed formats. High-value adjuster time gets consumed by administrative work.

AI can improve claims handling by classifying incoming documents, summarizing file history, detecting missing information, routing claims by complexity, and recommending next steps for low-risk cases. Those capabilities work best in a governed workflow where exceptions are explicit and human escalation is built in.

Fraud detection requires a separate operating model. The objective is not to flag everything unusual. It is to improve investigator yield and reduce false positives. For teams going deeper into fraud-specific patterns and implementation considerations, this overview of AI fraud detection for insurance is a useful complementary read.

Strong fraud programs connect model signals to SIU workflows, claim history, network analysis, and investigator feedback. Without that loop, detection quality degrades and trust falls quickly.

Product design and embedded insurance

Product and distribution teams also benefit from AI, especially when the goal is to respond faster to niche demand or support embedded offerings through partners.

Insurers use analytics to identify under-served segments, refine pricing assumptions, test coverage combinations, and support offer decisions at the point of sale. For mid-market carriers, this can open new distribution paths without requiring a full core replacement. It does, however, require disciplined execution.

Off-the-shelf tools rarely fit product logic, workflow rules, and legacy integration needs on their own. In many cases, insurers need custom software to connect rating logic, partner APIs, policy administration rules, and internal approval workflows before new products can launch reliably. That is typically where implementation partners such as Bridge Global add value: not by selling a generic model, but by helping insurers connect use cases across the full lifecycle and put them into production in a way operations teams can actually run.

The Tangible Business Benefits and ROI of AI

McKinsey has estimated that AI could generate substantial annual value across the global insurance sector. For a mid-market insurer, that value shows up less as a headline number and more in a set of measurable operating improvements across underwriting, claims, service, and fraud control.

Insurance executives usually ask the right question first. Where does the return come from?

It comes from better throughput, better decisions, and better retention. The strongest business cases are tied to one workflow at a time, with a clear baseline for cost, cycle time, leakage, or conversion before any model is deployed.

ROI starts with unit economics, not AI ambition

The insurers that get results do not approve AI because it sounds strategic. They approve it because a specific process is expensive, slow, inconsistent, or hard to scale.

A claims intake team handling thousands of emailed documents each month can reduce manual sorting and indexing. An underwriting operation can shorten turnaround time by extracting submission data before an underwriter reviews the file. A service team can handle more policyholder requests without adding the same percentage of headcount. Each of those improvements affects a line item that finance teams already track.

That is why ROI discussions should start with unit economics. Cost per claim handled. Time to quote. Referral rate. Rework volume. Leakage identified before payment. Retention at renewal.

Where benefits usually appear first

Early returns tend to come from workflows with high volume, repeatable patterns, and clear handoffs between systems and people.

| Benefit area | How AI creates value | Typical business effect |
|---|---|---|
| Operations | Classifies documents, extracts fields, summarizes files, routes work | Lower handling cost and shorter cycle times |
| Underwriting quality | Highlights missing data, flags anomalies, supports triage | Faster decisions and better underwriter productivity |
| Claims control | Prioritizes files, detects irregular patterns, supports adjuster review | Lower leakage and more consistent outcomes |
| Service capacity | Automates common requests and drafts responses for review | Better response times and improved retention |
| Governance | Standardizes decision support and records model outputs | Cleaner audit trails and fewer process exceptions |

Some returns are visible within a quarter. Document ingestion, contact center assistance, and claims triage often produce early savings because they remove repetitive manual effort.

Others take longer and matter more. Better loss ratio performance, stronger renewal retention, and improved broker responsiveness depend on adoption, model monitoring, and process redesign. That is the part many articles skip. A pilot can prove technical feasibility. Sustained ROI depends on MLOps, exception handling, retraining discipline, and business ownership.

Financial impact is only real if operations can absorb it

I have seen insurers overstate returns by counting every automated task as labor savings. That is rarely how the economics work. If the business cannot redeploy staff, reduce outsourced volume, increase throughput, or improve conversion, the savings stay theoretical.

A more credible model separates value into four buckets:

Hard savings from reduced manual handling, third-party spend, or avoidable leakage

Capacity gains from handling more submissions, claims, or service requests with the same team

Risk improvement from better triage, pricing discipline, or fraud identification

Revenue protection from improved retention, broker service, and customer experience
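One way to keep those four buckets honest is to model them separately and discount capacity gains that the business cannot yet absorb. The sketch below is a minimal example under stated assumptions: the `ValueCase` class, its field names, and the `realized_capacity_share` haircut are hypothetical, and the figures in the usage line are made-up inputs, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class ValueCase:
    """Annual value estimate for one workflow, split into the four buckets."""
    hard_savings: float            # reduced manual handling, third-party spend, leakage
    capacity_gains: float          # value of extra throughput with the same team
    risk_improvement: float        # better triage, pricing discipline, fraud identification
    revenue_protection: float      # retention, broker service, customer experience
    annual_run_cost: float         # licences, infrastructure, monitoring, support
    realized_capacity_share: float = 1.0  # share of capacity gains operations can absorb

    def gross_value(self) -> float:
        # Capacity gains only count to the extent staff are redeployed or volume grows.
        realized_capacity = self.capacity_gains * self.realized_capacity_share
        return (
            self.hard_savings
            + realized_capacity
            + self.risk_improvement
            + self.revenue_protection
        )

    def net_value(self) -> float:
        return self.gross_value() - self.annual_run_cost


if __name__ == "__main__":
    # Illustrative inputs only.
    case = ValueCase(200_000, 150_000, 100_000, 50_000, 180_000, realized_capacity_share=0.5)
    print(f"gross={case.gross_value():,.0f} net={case.net_value():,.0f}")
```

Setting `realized_capacity_share` below 1.0 makes the gap between theoretical and absorbed savings explicit in the business case instead of hiding it in a single labor-savings line.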

This is also where AI and operating model choices intersect. For insurers reviewing service transformation options, adjacent delivery models such as BPO in Insurance help clarify which tasks should remain human-led, which should be AI-assisted, and which can be automated with strong controls.

ROI depends on implementation discipline

Buying a model does not create returns. Production deployment does.

For mid-market insurers, the practical trade-offs are usually clear. A faster time to value may come from a vendor platform, but that can limit flexibility if workflows are highly specialized. A custom build can fit underwriting rules, claims logic, and integration needs better, but it takes stronger product ownership and MLOps maturity to maintain. The right decision depends on process complexity, data quality, regulatory exposure, and the cost of being locked into a black-box workflow.

Three rules consistently hold up:

Start with a workflow that already has economic pain. High volume and measurable friction make value easier to prove.

Design for human review from day one. Referral queues, confidence thresholds, and override paths protect service quality and compliance.

Measure end-to-end outcomes. A model that speeds one step but creates downstream rework has not improved the business.

The insurers that see durable returns usually combine process redesign, governance, and technology integration in one program. That is why AI investments tend to perform better when they are treated as part of a broader modernization effort rather than as isolated experiments.

Your Implementation Roadmap From Discovery to Deployment

Most failed insurance AI projects don’t fail because the model is weak. They fail because the organization skips design discipline.

A workable roadmap has to move from business problem to production system in stages. That’s especially important for mid-market insurers, where budgets, data maturity, and internal AI capacity are often uneven across teams.

Phase one: discovery and use case selection

The first phase is about choosing the right problem, not choosing the most advanced model.

A solid discovery process should answer four questions:

Where is the operational bottleneck?

What data exists, and is it usable?

What decision can the system support or automate safely?

How will success be measured in business terms?

For insurers, the strongest early use cases often include underwriting intake, claims document classification, fraud triage, and service request automation.

This is also the point where architecture reality needs to come into the room. If your data is trapped in policy administration systems, shared drives, email inboxes, and claims platforms with inconsistent identifiers, the program is really a data-and-workflow project before it becomes an AI project.

Field note: If stakeholders can’t agree on what “good underwriting turnaround” or “claims ready for review” means, don’t start training models yet.

Phase two: pilot and proof of value

A pilot should be narrow, instrumented, and designed to answer operational questions.

That means choosing one workflow, one user group, and a limited set of inputs. For example, a pilot might focus on extracting broker submission data from incoming documents and routing cases by confidence level to underwriters.

A credible pilot includes:

Baseline mapping of current handling time, error points, and manual touchpoints

Controlled deployment with selected users rather than enterprise-wide release

Review loops so underwriters, adjusters, or service agents can flag model errors quickly

Governance checks for data handling, access control, and explainability

Teams should also decide whether they need classic machine learning, generative AI, rule-based orchestration, or a combination.

Phase three: production integration

Moving from pilot to production is usually where complexity spikes.

The work shifts from model behavior to system behavior. Can the AI service authenticate into the right systems? Can it write back structured outputs? Can it trigger workflow actions safely? Can supervisors audit decisions and corrections?

A production rollout usually requires:

API integration into policy, claims, CRM, and document systems

Identity and access controls aligned with internal security standards

Exception routing for low-confidence outputs or regulatory edge cases

Audit trails that show source inputs, prompts or rules, model outputs, and human overrides
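To make the audit trail requirement concrete, here is a minimal sketch of a decision record that captures source inputs, the prompt or rule used, the model output, and any human override. The `DecisionAuditRecord` class and all of its field names are hypothetical. A real implementation would follow the insurer's own retention, storage, and security standards.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionAuditRecord:
    claim_id: str
    source_document_ids: list[str]   # inputs the model saw
    model_version: str               # which model produced the output
    prompt_or_rule_id: str           # versioned prompt or rule reference
    model_output: dict               # structured output written back to the workflow
    confidence: float
    human_override: Optional[dict] = None  # who changed what, if anyone did
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys keep serialization stable so checksums are reproducible.
        return json.dumps(asdict(self), sort_keys=True)

    def checksum(self) -> str:
        # SHA-256 of the serialized record, useful for detecting later edits.
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


if __name__ == "__main__":
    record = DecisionAuditRecord(
        claim_id="C-1001",
        source_document_ids=["DOC-1", "DOC-2"],
        model_version="triage-model-v3",
        prompt_or_rule_id="routing-rule-7",
        model_output={"route": "fast_track"},
        confidence=0.91,
    )
    print(record.to_json())
```

Storing the checksum alongside the record gives supervisors a simple way to confirm that a logged decision has not been altered after the fact.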

Many insurers need external implementation support. One option is using AI development services to build the integration layer, model operations, and workflow logic around the use case. Bridge Global, for example, provides AI Discovery Workshops and implementation support that connect data engineering, product design, and full-stack delivery for organizations that need a structured path from pilot to production.

Phase four: MLOps and continuous improvement

Insurance AI isn’t a launch-and-leave system.

Models drift. Claims patterns shift. Fraud behavior changes. Underwriting guidelines evolve. A useful production setup needs MLOps disciplines so the system remains accurate, governed, and operationally trusted.

Here’s what mature MLOps looks like in insurance:

| MLOps area | What to manage | Why it matters |
|---|---|---|
| Monitoring | Track prediction quality, exception rates, and workflow outcomes | Detects degradation before users lose trust |
| Versioning | Control model, prompt, and feature changes | Supports rollback and auditability |
| Feedback loops | Capture corrections from underwriters, adjusters, and analysts | Improves relevance in production |
| Governance | Review bias, explainability, and policy compliance | Reduces regulatory and operational risk |
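For the monitoring row, one common way to detect drift is the Population Stability Index (PSI), which compares the distribution of a score or feature in production against a baseline. The sketch below is one possible implementation, not a prescribed method. The function name, equal-width binning, and the `eps` floor for empty bins are assumptions made for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a production sample.

    Bins are equal-width over the baseline range; empty bins are floored
    at eps so the logarithm stays defined.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread")
    width = (hi - lo) / bins

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e_shares = bin_shares(expected)
    a_shares = bin_shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    production = [min(x + 0.3, 0.99) for x in baseline]
    print(round(population_stability_index(baseline, production), 3))
```

Practitioners often treat PSI values above roughly 0.1 as worth watching and above roughly 0.25 as a significant shift, but those are rules of thumb. Alert thresholds should be calibrated per model and paired with the exception-rate and outcome tracking described in the table.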

As we explored in our guide to AI for your business, the highest-value AI programs are the ones built into operating workflows, not the ones left as stand-alone experiments.

Measuring Success with the Right AI KPIs

Insurers that see real returns from AI do not measure model quality alone. They track whether AI improves underwriting decisions, speeds claims handling, lowers operating cost, and holds up under real production conditions.

For mid-market insurers, that discipline matters even more. Budget is tighter, teams are leaner, and a pilot that looks promising in a demo can still fail to produce value at scale. The KPI framework has to cover the full operating lifecycle, from user adoption and workflow stability to financial impact and governance.

Leading indicators to watch early

Early metrics show whether the implementation is becoming usable and dependable in day-to-day operations. These are the signals I watch first during rollout:

Adoption by user group. Are underwriters, adjusters, or service teams using the system consistently in live work?

Exception rate. How often does AI output require escalation, override, or manual correction?

Cycle-time movement. Is work reaching the next decision point faster than the old process allowed?

Data completeness. Are incoming forms, documents, and records structured well enough to support reliable automation?

Weak leading indicators usually point to a delivery issue, not a model issue alone. The workflow may be poorly integrated, confidence thresholds may be set incorrectly, or upstream data may still be too inconsistent for production use.
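Three of these leading indicators can be computed directly from work-item logs. The sketch below assumes a hypothetical `WorkItem` record and a baseline median cycle time measured before rollout. Data completeness is left out because it depends on how each insurer defines a usable record.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class WorkItem:
    user_group: str      # e.g. "underwriting", "claims", "service"
    ai_used: bool        # the AI output was used in live work
    overridden: bool     # escalated, overridden, or manually corrected
    cycle_hours: float   # time to reach the next decision point


def leading_indicators(
    items: list[WorkItem], baseline_median_hours: float
) -> dict[str, float]:
    if not items:
        raise ValueError("no work items to measure")
    ai_items = [i for i in items if i.ai_used]
    adoption_rate = len(ai_items) / len(items)
    # Exception rate is measured only over items where AI output was used.
    exception_rate = (
        sum(1 for i in ai_items if i.overridden) / len(ai_items) if ai_items else 0.0
    )
    current_median = median(i.cycle_hours for i in items)
    # Negative values mean work is moving faster than the baseline.
    cycle_time_change = (current_median - baseline_median_hours) / baseline_median_hours
    return {
        "adoption_rate": adoption_rate,
        "exception_rate": exception_rate,
        "cycle_time_change": cycle_time_change,
    }


if __name__ == "__main__":
    sample = [
        WorkItem("claims", True, False, 10.0),
        WorkItem("claims", True, True, 20.0),
        WorkItem("claims", True, False, 30.0),
        WorkItem("claims", False, False, 40.0),
    ]
    print(leading_indicators(sample, baseline_median_hours=50.0))
```

Filtering `items` by `user_group` before calling the function gives the per-team view, which is usually where adoption problems first become visible.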

Lagging indicators that matter to executives

Executive teams need a narrower set of outcome metrics tied to margin, service, and risk.

The right lagging indicators depend on the use case, but they often include:

Loss ratio movement for underwriting and risk selection programs

Claims leakage reduction where fraud detection or review quality improves

Retention and service quality where customer-facing automation reduces friction

Cost-to-serve changes across policy servicing, claims operations, and contact center work

These measures take longer to mature, which is why they should be paired with leading indicators from the start. Otherwise, teams end up celebrating a technically accurate model that never improves the operating result.

Good KPI design keeps attention on business performance, not just model performance.

Key performance indicators for AI in insurance

| KPI category | Example KPIs | Business impact |
|---|---|---|
| Operational | Claims processing time, underwriting turnaround time, exception rate, straight-through processing rate | Shows whether AI is reducing manual delay and process friction |
| Financial | Loss ratio improvement, claims leakage reduction, cost per claim, cost to serve | Connects AI to profitability and operating efficiency |
| Customer | Retention trend, service response quality, complaint volume, policyholder satisfaction signals | Measures whether AI improves the customer relationship |

One practical point often gets missed. KPI design should include control, audit, and security signals alongside business metrics, especially when AI touches claims files, payment workflows, or sensitive policyholder data. Teams already managing regulated data environments can apply similar thinking from a PCI DSS compliance checklist for secure operational controls when defining access, logging, and oversight requirements around AI-enabled processes.

The strongest KPI frameworks do three jobs at once. They tell leadership whether AI is paying off, they show delivery teams where the workflow is breaking down, and they create a fact base for deciding what to expand, retrain, or stop. That is how insurers turn AI from an isolated experiment into a managed business capability.

Navigating Common Pitfalls and Compliance Hurdles

Many insurance AI initiatives stall for reasons that have little to do with model quality. The problems usually show up in data handling, workflow design, controls, and operating ownership.

Mid-market insurers feel that pressure early. They are often upgrading core systems while trying to introduce AI into underwriting, claims, servicing, or fraud operations. That creates delivery risk fast because the same teams are being asked to clean data, integrate legacy platforms, define governance, and support business adoption at the same time.

The pitfalls that show up early

The first set of problems is usually operational, not theoretical.

Weak source data. Policy, claims, billing, and document systems often hold conflicting records, missing fields, and inconsistent identifiers.

Poor process definition. Teams try to automate work that still depends on individual judgment, undocumented exceptions, or local workarounds.

No exception strategy. Low-confidence outputs, disputed records, and unusual cases get pushed back and forth between teams with no clear escalation path.

Misaligned ownership. IT owns the platform. Operations owns the workflow. Compliance owns the risk. Nobody owns the full outcome.

These issues drive adoption problems. If adjusters, underwriters, or service teams cannot see why the system produced an output, or they have no safe way to override it, they stop using it or work around it.

I have seen projects with a sound model and a weak operating design fail in pilot. I have also seen simpler models deliver value because the insurer defined handoffs, approval rules, and exception queues before rollout.

Compliance has to be built into delivery

Insurance AI needs governance from day one.

That includes privacy controls, role-based access, explainability for regulated decisions, retention rules, audit logs, model monitoring, and clear records of human review. Compliance teams should not be asked to approve a finished system after design and development are already complete. They need visibility into training data, feature selection, workflow impacts, override rules, and output presentation while the solution is being built.

Explainability deserves special attention. If AI influences pricing, eligibility, claims triage, fraud scoring, or customer communications, the insurer needs a defensible record of how the output was generated, what data was used, what confidence thresholds were applied, and when a person stepped in.

Compliance-ready AI is usually the result of disciplined data controls, traceable decisioning, and explicit accountability.

For teams defining those controls, a PCI DSS compliance checklist for secure access, logging, and oversight is a useful reference point. The regulatory context is different, but the operating discipline is similar.

What to look for in a technology partner

Partner selection should be based on delivery capability across the full lifecycle, not just model-building credentials.

A suitable partner should be able to assess data readiness, integrate with policy and claims platforms, design approval and exception workflows, set up MLOps for monitoring and retraining, and document governance in a way that stands up to internal audit and compliance review. That matters more for mid-market insurers than a polished demo, because most of the work starts after the pilot.

Use practical selection criteria:

Legacy integration experience with policy administration, claims, billing, and document management systems

Governance design capability for audit trails, model versioning, access control, and human-in-the-loop review

MLOps maturity for monitoring drift, handling retraining, and managing production change without disrupting operations

Business process understanding so the solution fits underwriting and claims reality, not just a technical architecture

Change support to help business teams adopt the workflow and trust the outputs

The trade-off is straightforward. A specialist vendor may offer a strong point solution but struggle with integration and operational ownership. A broader delivery partner can usually reduce that risk, but only if they can prove they have handled regulated workflows, production support, and model governance in live insurance environments.

Your Path to an AI-Driven Insurance Future

AI can improve underwriting, claims, fraud detection, customer servicing, and product design. But value doesn’t come from the use case list. It comes from disciplined implementation.

The insurers getting traction are choosing focused workflows, integrating with core systems, building governance into the design, and treating MLOps as part of production readiness. That’s especially important for mid-market carriers that need measurable progress without taking on uncontrolled delivery risk.

The practical path is straightforward. Select the right business problem. Validate it with a pilot. Integrate it into live operations. Monitor and improve it continuously.

That’s where an experienced AI solutions partner can make the difference between another pilot and a production system your teams will use.

Frequently Asked Questions

How should mid-market insurers start with AI if budgets and compliance pressure are both high?

Start with one workflow where the cost of delay is visible and the controls are manageable. Underwriting intake, claims document classification, and service request triage are usually better starting points than fully automated decisioning because they produce measurable efficiency gains without creating unnecessary model risk on day one.

For mid-market insurers, the early constraint is rarely the model itself. It is integration effort, review overhead, and the need to show how outputs were produced. A practical starting scope includes human review, clear audit logs, exception handling, and a rollback path if model performance drops. That approach gives executives a cleaner business case and gives compliance teams fewer reasons to slow the project down.

What’s the difference between machine learning and generative AI in insurance?

Machine learning fits prediction problems. It helps with risk scoring, fraud detection, propensity modeling, and claims triage where the goal is to classify, rank, or forecast an outcome from historical patterns.

Generative AI fits language-heavy work. It can summarize adjuster notes, extract information from submissions, draft responses for service teams, and help staff search policy and claims content faster.

In production, many insurers need both. A claims workflow might use generative AI to read incoming documents and machine learning to score severity or fraud risk. That combination is often more useful than treating generative AI as a standalone strategy.

How long does an insurance AI project usually take?

Timelines depend on three things more than anything else: data readiness, integration with core systems, and the speed of internal approval.

A focused pilot can move in weeks if the use case is narrow and the data is already accessible. Production deployment takes longer because security, identity, monitoring, user acceptance testing, and compliance review have to be built into the delivery plan. In my experience, insurers that set a 90-day target for pilot validation and a phased production plan after that make better decisions than teams that promise enterprise-wide rollout too early.

What should insurers look for in an AI vendor or implementation partner?

Use four filters: insurance domain knowledge, integration capability, MLOps discipline, and governance maturity.

A credible partner should be able to explain how models will be monitored, retrained, versioned, and audited after go-live, not just how the demo works. They should also be clear about build-versus-buy trade-offs, where off-the-shelf tools fit, and where custom development is justified. For mid-market insurers, this is important because long-term operating cost often outweighs the cost of the initial proof of concept.

If you’re evaluating AI initiatives in insurance, Bridge Global can help you assess use cases, validate architecture choices, and build a production-ready roadmap that balances business value with compliance and operational reality.