Choosing a Regulated Healthcare Software Development Partner

You’re likely in a familiar spot. Product pressure is rising, legal is asking hard questions, the board wants speed, and your internal team knows that shipping healthcare software without the right controls is how technical debt turns into regulatory debt.

That’s why choosing a regulated healthcare software development partner isn’t a procurement exercise. It’s a risk decision with direct impact on launch timing, audit readiness, security exposure, and long-term product economics. A vendor can write code. A partner helps you avoid rework, failed integrations, weak documentation, and ugly surprises during validation or due diligence.

The High Stakes of Choosing Your Healthtech Partner

The market is telling you exactly where this is going. According to AssureSoft’s healthcare software partner analysis, the healthcare compliance software market is projected to reach USD 6,503.3 million by 2030, growing at a CAGR of 11.6%. The same analysis ties that growth to the need to reduce risks such as data breaches, which cost healthcare organizations an average of $10.93 million per incident.

That number matters, but the operational cost matters more. A weak partner doesn’t just expose you to security events. They slow down every important decision. Architecture gets revisited. Documentation gets rebuilt. Validation gets delayed. Integration work balloons because the team treated FHIR, audit trails, access control, and evidence collection as cleanup work instead of design inputs.

Vendor thinking creates downstream risk

A typical software vendor optimizes for delivery velocity inside a statement of work. That’s not enough in healthtech.

A real healthtech software development partner works backward from product risk. They ask different questions:

- What’s the likely regulatory path for this product?
- Where does protected data move, and who can access it?
- Which controls need evidence, not just implementation?
- What breaks if AI is introduced later, even if phase one is rules-based?
- How will this hold up under partner security review, procurement review, or investor diligence?

If a firm jumps straight to sprint plans and velocity charts, you’ve learned something useful. They’re thinking like builders, not accountable partners.

The business case is simple

You need a partner that reduces uncertainty. That means clear documentation, disciplined change control, stronger security architecture, and a delivery model that supports your broader digital transformation consulting agenda instead of creating another isolated system.

Practical rule: If a partner can’t explain how they manage regulatory risk from discovery through support, don’t trust them with a regulated roadmap.

The right partner shortens the path to launch because they remove avoidable mistakes early. In regulated healthcare, that’s where the value is.

Decoding the Regulatory Alphabet Soup: HIPAA, GDPR, and ISO

Compliance language gets abused in vendor conversations. Everyone says they “understand HIPAA.” That means nothing. You need to know what these frameworks require in practical software terms and what evidence a partner should be able to show.

What HIPAA and GDPR mean in delivery terms

HIPAA is not a badge. It’s a set of operational obligations around safeguarding electronic protected health information. GDPR is broader, but in software delivery, it also forces discipline around data handling, access, retention, and accountability.

A capable partner should be able to map those requirements into concrete controls. According to TWS’s healthcare regulations overview, a compliant partner can reduce breach risks by 75% by implementing AES-256 encryption, Role-Based Access Control, and continuous audit logging in line with HIPAA’s Security Rule and GDPR Article 32.

That should change how you interview vendors. Don’t ask, “Are you HIPAA compliant?” Ask this instead:

- How do you implement access control across admin, clinician, patient, and support roles?
- What gets encrypted at rest and in transit?
- How are audit logs structured, retained, and reviewed?
- How do you handle least-privilege access in cloud environments?
- What evidence can you provide from penetration testing or security review?
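To make the first and third questions concrete, here is a minimal sketch of what a credible answer might look like in code: deny-by-default role checks where every decision, including a denial, produces a structured audit entry. The role names, permission strings, and the `authorize` and `AuditLog` helpers are all hypothetical and chosen for illustration. A real system would back this with a proper identity provider and an append-only log store.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real product would derive this
# from its own access policy.
ROLE_PERMISSIONS = {
    "admin": {"manage_users", "read_audit_log"},
    "clinician": {"read_record", "write_record"},
    "patient": {"read_own_record"},
    "support": {"read_ticket"},  # deliberately no access to clinical data
}


@dataclass
class AuditLog:
    """In-memory stand-in for an append-only audit store."""
    entries: list = field(default_factory=list)

    def record(self, user_id, role, action, resource, allowed):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "role": role,
            "action": action,
            "resource": resource,
            "allowed": allowed,
        }
        # One JSON object per entry keeps the log machine-reviewable.
        self.entries.append(json.dumps(entry, sort_keys=True))


def authorize(user_id, role, action, resource, log):
    """Deny by default, and log every decision, including denials."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user_id, role, action, resource, allowed)
    return allowed


log = AuditLog()
clinician_ok = authorize("u-17", "clinician", "read_record", "patient/42", log)
support_ok = authorize("u-99", "support", "read_record", "patient/42", log)
```

The point to probe with a vendor is the shape, not the syntax: unknown roles get nothing, support staff cannot read clinical records by accident, and the denied attempt is just as visible in the log as the permitted one.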

ISO matters because process matters

In healthcare, good intentions don’t pass audits. Repeatable process does.

ISO frameworks matter because they force a partner to operationalize quality and information security. That shows up in design reviews, validation records, defect handling, release evidence, and post-release controls. If a partner treats compliance as a final QA phase, you’re already late.

For teams evaluating regulated delivery maturity, this digital health speed compliance whitepaper is a useful reference point because it frames compliance as part of product execution, not a separate workstream.

A partner that can’t connect requirements, code, tests, risks, and approvals into a traceable chain will create friction everywhere else.

Ask for proof in context

You also need contextual expertise. Compliance in a telehealth platform, a clinician workflow tool, and a device-adjacent product doesn’t look identical. A partner should explain how they apply controls in your product category, not recite generic standards.

For example, teams migrating collaboration or document workflows into regulated environments should understand how platform choices affect governance. This resource on SharePoint compliance for healthcare is a useful example of how compliance considerations become concrete when systems, permissions, and protected data intersect.

If a partner can’t speak at that level of specificity, move on.

Evaluating a Partner’s Quality and Security DNA

A certificate on a slide deck is not a delivery capability. What matters is whether the partner’s operating model produces audit-ready output week after week.

What mature quality systems look like

When a partner has real quality discipline, you’ll see it in artifacts, not slogans. Requirements are versioned. Risks are tied to controls. Test evidence maps to acceptance criteria. Design changes aren’t buried in chat threads. Validation isn’t left to the end.

According to HTD Health’s medical device software partner guide, partners with ISO 13485 certification can reduce regulatory submission rework by 40-60% because their Quality Management System aligns with FDA and EU MDR expectations for design controls, verification, and validation.

That benefit is strategic. Rework in regulated projects isn’t just expensive. It drains leadership attention and forces engineering teams to rewrite evidence after the fact.

What to inspect during due diligence

Ask to review anonymized examples of the partner’s delivery artifacts. If they hesitate, that’s a signal.

Look for these indicators:

- Traceability discipline: Requirements should map clearly to design inputs, implementation, test cases, and approvals.
- Design history rigor: The team should maintain a coherent record of decisions, changes, and validation evidence.
- Risk integration: Risk management should influence backlog priority, testing depth, and release criteria.
- Defect governance: The partner should classify defects consistently and show how remediation is documented.
- Independent quality checks: QA must have enough authority to block weak releases.
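Traceability discipline is the easiest of these to check mechanically. The sketch below assumes a simplified model in which each requirement lists its linked test cases and a recorded approver. The `Requirement` fields, the IDs, and the `find_traceability_gaps` helper are hypothetical and are not taken from any specific QMS tool.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    description: str
    test_case_ids: list = field(default_factory=list)
    approved_by: str = ""  # empty string means no recorded approval


def find_traceability_gaps(requirements, passed_test_ids):
    """Return {req_id: [reasons]} for requirements lacking release evidence."""
    gaps = {}
    for req in requirements:
        reasons = []
        if not req.test_case_ids:
            reasons.append("no linked test case")
        failing = [t for t in req.test_case_ids if t not in passed_test_ids]
        if failing:
            reasons.append("tests without a passing result: " + ", ".join(failing))
        if not req.approved_by:
            reasons.append("no recorded approval")
        if reasons:
            gaps[req.req_id] = reasons
    return gaps


requirements = [
    Requirement("REQ-001", "Clinician login requires MFA", ["TC-101"], "qa.lead"),
    Requirement("REQ-002", "Record access is audit logged", ["TC-102", "TC-103"], ""),
    Requirement("REQ-003", "Sessions expire after inactivity"),
]
passed = {"TC-101", "TC-102"}
gaps = find_traceability_gaps(requirements, passed)
```

In this example only `REQ-001` is release-ready. A mature partner should be able to produce the equivalent report from their own tooling on request, without assembling it by hand.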

A separate review of software quality assurance capabilities is worth insisting on, especially if your product has clinical, diagnostic, or patient-facing implications.

Security should be boring and rigorous

Security maturity isn’t about a dramatic demo. It’s about whether secure practices are built into daily delivery.

Use this quick comparison during evaluation:

| Area | Weak partner behavior | Strong partner behavior |
|---|---|---|
| Access control | Shared credentials, broad admin access | Role-based access with clear ownership |
| Testing | Security review near launch | Security testing throughout delivery |
| Logging | Minimal logs kept “just in case” | Structured logging with review purpose |
| Documentation | Security decisions live in email | Decisions are documented and traceable |
| Incident handling | Vague escalation path | Defined ownership and response workflow |

Don't reward presentation polish. Reward process evidence.

If a partner can't show how quality and security are embedded in their SDLC, they're asking you to trust individual heroics. That's not a controlled way to build regulated software.

Assessing Future-Ready Capabilities: AI, Cloud, and Interoperability

Your team ships phase one with a vendor that passed the compliance review. Six months later, significant problems show up. The EHR integration is brittle, the cloud setup cannot satisfy enterprise due diligence, and the AI feature in your roadmap has no validation plan. That is a partner selection failure, not a delivery hiccup.

Future-ready capability should be assessed as risk control. In regulated healthcare, AI, cloud, and interoperability choices affect sales cycles, audit readiness, patient safety, and the cost of scaling.

Interoperability determines whether the product works in the real market

A partner should be fluent in HL7 and FHIR at the implementation level. Sales familiarity means nothing. You need a team that can map messy data, handle workflow exceptions, design authentication correctly, and work through the challenges of provider systems that were never built to cooperate cleanly.

This matters early.

If your roadmap includes referrals, scheduling, records exchange, claims workflows, remote monitoring, or care coordination, interoperability gaps will slow revenue and inflate support costs. A polished UI will not rescue a product that cannot exchange data reliably with the systems buyers already use.
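Implementation-level fluency shows up in how a team handles incomplete data. The sketch below parses a hand-written, fictional FHIR R4 Patient resource and extracts a few fields defensively, because elements such as `name` and `birthDate` are optional and real provider data often omits them. The `summarize_patient` helper and the `urn:example:mrn` identifier system are hypothetical. A production integration would more likely use a maintained FHIR client library and validate against the profiles its trading partners require.

```python
import json

# A hand-written, minimal FHIR R4 Patient resource with fictional data.
RAW_PATIENT = """
{
  "resourceType": "Patient",
  "id": "example-1",
  "identifier": [{"system": "urn:example:mrn", "value": "12345"}],
  "name": [{"family": "Rivera", "given": ["Ana", "Maria"]}],
  "birthDate": "1984-03-09"
}
"""


def summarize_patient(resource):
    """Extract a few fields defensively; optional elements may be absent."""
    if resource.get("resourceType") != "Patient":
        raise ValueError("expected a Patient resource")
    names = resource.get("name") or [{}]
    first = names[0]
    given = " ".join(first.get("given", []))
    family = first.get("family", "")
    display = " ".join(part for part in (given, family) if part) or "(name missing)"
    identifiers = {
        ident["system"]: ident["value"]
        for ident in resource.get("identifier", [])
        if "system" in ident and "value" in ident
    }
    return {
        "id": resource.get("id"),
        "display_name": display,
        "birth_date": resource.get("birthDate"),  # None when absent
        "identifiers": identifiers,
    }


summary = summarize_patient(json.loads(RAW_PATIENT))
sparse = summarize_patient({"resourceType": "Patient", "id": "example-2"})
```

The second call is the one that matters in an evaluation. Ask a partner what their mapping layer does with a record like `sparse`, and whether that behavior is documented and tested.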

Cloud architecture should hold up under scrutiny

Plenty of vendors can deploy on AWS, Azure, or GCP. The better question is whether the partner can configure cloud services to support regulated workloads and evidence collection.

Ask how they handle:

- Environment segregation
- Access governance
- Logging and monitoring
- Backup and recovery
- Release controls
- Third-party service review

Weak cloud decisions create downstream commercial risk. Enterprise prospects will ask for architecture answers. Procurement will ask for documented controls. Legal will ask where the risk sits. If your partner cannot produce clear evidence, your team absorbs the delay.

AI maturity separates credible partners from risky ones

Many vendor evaluations break down at this stage. A firm may show a demo, wire up an LLM, or add a chatbot to a workflow. That does not prove they can build AI features that belong inside a regulated product.

For healthcare, assess AI capability through governance. Ask whether the partner can define intended use, validate model behavior before release, document data assumptions, constrain unsafe outputs, monitor drift, and decide which decisions must stay outside automated logic. If they cannot answer those questions in operational terms, they are selling experimentation while you are buying product risk.

A 2025 survey cited by CitrusBits found that healthcare executives are prioritizing AI while many still face integration failures tied to unproven vendor expertise. That pattern matches what CTOs see in selection cycles. AI ambition is high. Delivery discipline is uneven.

Use direct questions:

- How do you validate model behavior before release?
- How do you document training data assumptions and limitations?
- How do you support explainability or decision traceability?
- How do you monitor drift, false confidence, and unsafe edge cases?
- How do you decide whether an AI feature belongs inside or outside a regulated workflow?
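A good answer to the drift question names a measurable signal. As one illustration, the sketch below computes the Population Stability Index (PSI), a common statistic for comparing a baseline distribution of model scores against recent production scores. PSI is used here as an example, not as the method any particular partner applies. The function name, the equal-width binning, and the assumption that scores lie in [0, 1] and both samples are non-empty are all choices made for this sketch.

```python
import math


def population_stability_index(expected, actual, bins=5, eps=1e-6):
    """PSI between a baseline sample and a recent sample of model scores.

    Assumes scores lie in [0, 1] and both samples are non-empty.
    """
    def bin_fractions(values):
        counts = [0] * bins
        for v in values:
            index = min(int(v * bins), bins - 1)  # clamp 1.0 into the last bin
            counts[index] += 1
        total = len(values)
        # eps avoids log(0) when a bin is empty.
        return [max(c / total, eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95]
same = list(baseline)
shifted = [0.7, 0.75, 0.8, 0.85, 0.9, 0.9, 0.95, 0.95, 0.99, 1.0]

psi_same = population_stability_index(baseline, same)        # identical samples give 0.0
psi_shifted = population_stability_index(baseline, shifted)  # larger value signals a shift
```

The statistic itself is the easy part. What you are evaluating is whether the partner has decided in advance which threshold triggers review, who owns that review, and how the outcome is documented.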

One practical screen is to review partners with proven AI development services and a defined AI transformation framework, especially if you plan to operationalize AI for your business beyond a single pilot.

Treat AI as a governed system with validation, monitoring, and documented limits. Any partner that treats it like a bolt-on feature is asking you to accept unmanaged risk.

Structuring the Partnership for Success and Risk Mitigation

Even a technically capable partner can become a bad choice if the engagement model is wrong. Regulated work needs room for discovery, disciplined changes, and documented decisions. Your contract and operating model should reflect that.

Choose the model that matches uncertainty

Here's the blunt view.

| Engagement model | Where it fits | Main risk |
|---|---|---|
| Fixed price | Narrow scope, stable requirements, contained updates | Encourages shortcuts when requirements evolve |
| Time and materials | Discovery-heavy work, evolving architecture, integration uncertainty | Can drift without strong governance |
| Dedicated development team | Long-term product builds with ongoing roadmap ownership | Requires active product leadership from your side |

For most regulated healthcare products, fixed price only works for sharply bounded work: contained modules, remediation efforts, or well-defined migration tasks. It’s usually the wrong model for early product development, AI-enabled workflows, or anything with uncertain integration complexity.

A dedicated model often works better because it preserves context. The same engineers stay close to your architecture, validation logic, and release history. That matters.

Put risk controls into the contract

The statement of work should do more than define deliverables. It should define control points.

Include explicit expectations for:

- Risk management ownership, and how risks are documented, reviewed, and escalated
- Change control for scope, requirements, architecture, and regulated features
- Validation planning across installation, operation, and performance, where relevant
- Release evidence, including testing, approvals, and traceability
- Support obligations after go-live, especially for incidents and compliance-impacting defects

If those items live outside the contract, they’ll become negotiation points later. That’s exactly when you don’t want ambiguity.

Align the delivery model to product economics

Your partnership model should also support how the product evolves. If you’re building a platform, not a one-off application, think in terms of operating continuity. That’s where product engineering services and a broader custom software development approach make more sense than one-time project delivery.

The right structure reduces friction in year two, not just quarter one.

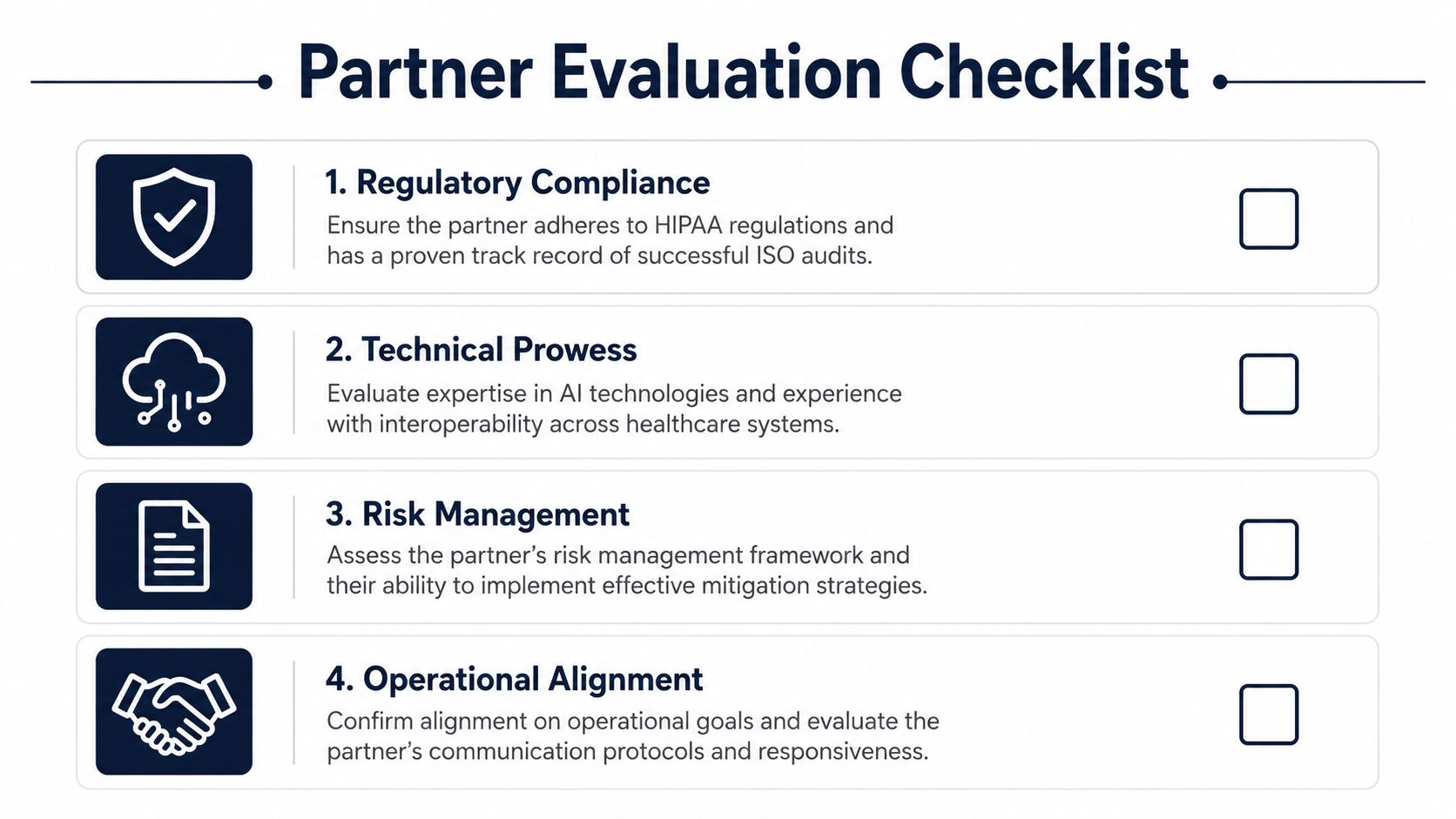

Your Partner Evaluation Checklist and RFP Questions

Most RFPs are weak because they ask about skills, rates, and timelines. That doesn’t tell you whether a partner can survive regulated complexity.

Use sharper questions.

Regulatory and compliance questions

- Which regulations and standards have you worked under that are relevant to our product type? Ask for examples, not general claims.
- How do you translate regulatory requirements into backlog items, architecture decisions, and test evidence?
- Can you provide sample deliverables such as traceability records, validation documents, or audit-ready artifacts with sensitive details removed?
- How do you handle Business Associate Agreements and data processing responsibilities?

Quality and security questions

- Describe your QMS in practical terms. Who owns quality? How are changes reviewed? What evidence is mandatory before release?
- How do you embed security-by-design into delivery?
- What penetration testing, code review, and secure coding practices do you use?
- How do you manage access to production data, lower environments, and support tooling?

Ask every finalist the same questions. Vendors often look similar until you force comparability.

Technical and AI questions

- What is your direct experience with our required integration standards, such as HL7 or FHIR?
- How do you approach cloud architecture for regulated workloads?
- If AI is in scope now or later, how do you validate models, document assumptions, and monitor performance safely?
- How do you decide whether a feature should be deterministic, AI-assisted, or fully automated?

A partner doing custom healthcare software development should be able to answer these without hand-waving.

Partnership and operating model questions

- Who exactly will work on our account, and what regulated experience do they have?
- How do you structure communication, governance, and escalation?
- What happens after launch? Ask about maintenance, incident handling, and continuity of team knowledge.
- Where can we review relevant client cases that resemble our delivery context?

If you want one litmus test, use this: ask the partner to walk through a recent project decision that balanced delivery speed against compliance risk. Their answer will tell you whether they think like operators or just implementers.

Bridge Global in Action: Real-World Regulated Solutions

Strong regulated delivery usually starts the same way. The team doesn’t begin with features. They begin with constraints, workflows, data movement, risk categories, and the likely compliance burden those choices create.

That’s the logic behind a consultative approach. Discovery should identify where the product sits in the clinical or operational workflow, what integrations are unavoidable, what evidence the client will need later, and where AI might create new review requirements. From there, architecture and delivery planning become more grounded.

What capable execution looks like

In practice, regulated healthcare work often falls into a few patterns:

- Telehealth and remote care platforms that need secure identity, session handling, auditability, and integration with existing provider workflows
- Data exchange solutions that rely on structured interoperability and careful handling of permissions, consent, and traceability
- Clinical support features with AI components, where validation and explainability need attention early, not after a prototype succeeds
- Modernization initiatives where legacy systems must be upgraded without breaking operational controls

A useful way to assess a partner is to see whether they can connect discovery decisions to later delivery evidence. That’s where many firms fall apart.

How to validate fit beyond the sales process

Review healthcare-specific work, not just polished general portfolios. The most useful examples explain the problem context, the constraints, the integration complexity, and how the delivery team handled governance, testing, and support after launch.

Bridge Global’s healthcare-specific case portfolio is one place to inspect that kind of work in context. If you’re comparing firms, ask each one for the same level of detail.

As we explored in our guide to evaluating AI-enabled delivery capability, the strongest partner is usually the one that can explain trade-offs clearly, not the one that promises the fastest start date.

Making Your Final Decision

Your final decision should come down to three things.

First, does the partner have real compliance depth, not just familiarity with healthcare terminology? Second, do they run a disciplined quality and security operation that produces evidence you can use? Third, can they support the roadmap you’ll need next year, especially around interoperability, cloud, and AI?

A regulated healthcare software development partner should lower uncertainty across the entire product lifecycle. That’s the benchmark.

If you’re evaluating options now, be ruthless about proof. Ask for artifacts. Ask for the process. Ask how they handle trade-offs when speed collides with control. The right partner won’t dodge those questions. They’ll welcome them.

Frequently Asked Questions

Do all healthcare software projects need an ISO 13485-certified partner?

No. It depends on the product and its regulatory path. If you’re building software with medical device implications or you expect submission-related scrutiny, ISO 13485 becomes far more important. For other healthcare platforms, it’s still a strong signal of process maturity, but the key issue is whether the partner can demonstrate disciplined quality management in practice.

How do I verify that a partner actually understands HIPAA?

Don’t ask for a yes or no answer. Ask how they handle protected data in architecture, access control, logging, testing, support, and contracts. Ask whether they’re comfortable with BAAs and what operational controls they typically implement. The more specific your questions, the faster weak answers show up.

Should I separate AI work from core regulated product development?

Usually not, if AI is part of the intended product experience or decision flow. Separating the work often creates documentation gaps, validation gaps, and architecture mismatches. You’re better off with one partner or one tightly governed delivery model that treats AI as part of the same controlled system.

What’s the biggest mistake buyers make during vendor selection?

They overweight demos and underweight operating discipline. A polished prototype is easy to fake. Traceability, risk ownership, validation readiness, and secure delivery habits are harder to fake. Those are the things that matter once the project becomes real.

How much post-launch support should I expect?

More than most vendors propose. Healthcare software needs ongoing maintenance, security updates, change assessment, and support for evolving compliance expectations. If the partner has no credible post-launch model, assume the handoff risk is yours.

When should I involve legal, compliance, and security stakeholders?

Early. Bring them in before architecture hardens and before contracts are finalized. Good partners don’t see that as friction. They treat it as part of building a stable delivery environment.

If you’re choosing a Bridge Global engagement for a regulated healthcare product, start with a working session focused on risk, architecture, interoperability, and AI scope. That will tell you quickly whether the delivery model fits your compliance burden, your roadmap, and your margin for error.