Healthcare Software Compliance Engineering: A 2026 Guide

Compliance failures in healthtech rarely start in legal. They start in product decisions, architecture shortcuts, weak access controls, and release pipelines that move faster than the evidence needed to defend them.

That is the context for healthcare software compliance engineering in 2026. Teams are shipping cloud-native platforms, mobile apps, third-party integrations, analytics pipelines, and AI-assisted features across multiple regions. Each new service, model update, and vendor connection can change how regulated data is collected, processed, retained, and exposed. For a CTO, compliance is part of system design and production operations.

The old approach treated compliance as a checkpoint near launch. Legal reviewed policies, security reviewed controls, QA checked test cases, and engineering filled in the gaps later. That approach breaks once teams deploy continuously, rely on global contributors, and introduce AI components that can change behavior without a visible UI change. Static checklists do not keep pace with that delivery model.

The better approach is to build compliance into the AI-enabled SDLC itself. Put requirements into architecture reviews. Turn control expectations into CI/CD gates. Validate model behavior and data use before release. Capture evidence automatically from infrastructure, tickets, tests, and production telemetry. Then keep checking in production, because compliant design at release time does not guarantee compliant operation a month later.

This changes how mature teams make trade-offs. Faster shipping matters, but so does proving who approved a model change, which environments touched PHI, whether audit logs stayed intact, and how a vendor integration was risk-assessed. Good compliance engineering reduces rework because it makes those answers available during delivery, not weeks before an audit.

If you’re building internally or evaluating a long-term healthtech software development partner, use that standard. Ask whether the team can automate evidence collection, monitor control drift in production, and support multiple regulatory regimes without slowing every release to a manual review.

The High Stakes of Healthtech Compliance in 2026

In 2024, healthcare breaches in the US exposed 133 million patient records, up 22% year over year, and led to USD 28.3 million in penalties. IBM’s 2024 analysis also put the average cost of a healthcare breach at USD 9.8 million. Those numbers matter because they change how a CTO should allocate engineering time. Compliance work belongs in architecture, delivery controls, and production monitoring, not in a pre-audit scramble.

By 2026, the harder problem is not reading the rulebook. It is proving, every day, that the product still operates within it.

Healthtech systems now span cloud services, mobile clients, APIs, analytics pipelines, third-party processors, and AI-supported features that can change outputs without a visible UI release. For global teams, that creates a wider failure surface. A model prompt change in one region can affect clinical guidance, logging obligations, retention, or cross-border data handling in another. The legal exposure is real, but the engineering failure usually starts earlier, with poor system boundaries, weak evidence capture, or release processes that treat regulated changes like ordinary feature work.

I have seen the same pattern repeatedly. Teams document controls well enough to pass procurement, then lose confidence once release frequency increases. They cannot answer basic operating questions quickly. Which service touched PHI last week? Which model version generated this output? Was access approved, revoked, and logged correctly across every environment? If those answers take days to assemble, the product is already carrying compliance debt.

That debt gets expensive in ways finance teams notice. Enterprise buyers ask harder questions. Security reviews stall. Incident response takes longer because logs are incomplete or fragmented. Regional expansion slows because nobody trusts the current data map. The 2026 privacy law reckoning will make that gap more visible for companies shipping across jurisdictions with shared platforms and distributed engineering teams.

A practical rule helps here. If a team can ship to production without automatically capturing what changed, what data was affected, who approved it, and whether the control set still passed, the team does not yet have compliance engineering discipline.

The upside is operational, not just legal. Teams that build compliance into the AI-enabled SDLC spend less time reconstructing evidence, less time debating ownership during incidents, and less time retrofitting controls for large customers. They release with clearer guardrails, detect control drift in production, and give leadership a current view of risk instead of a quarterly snapshot.

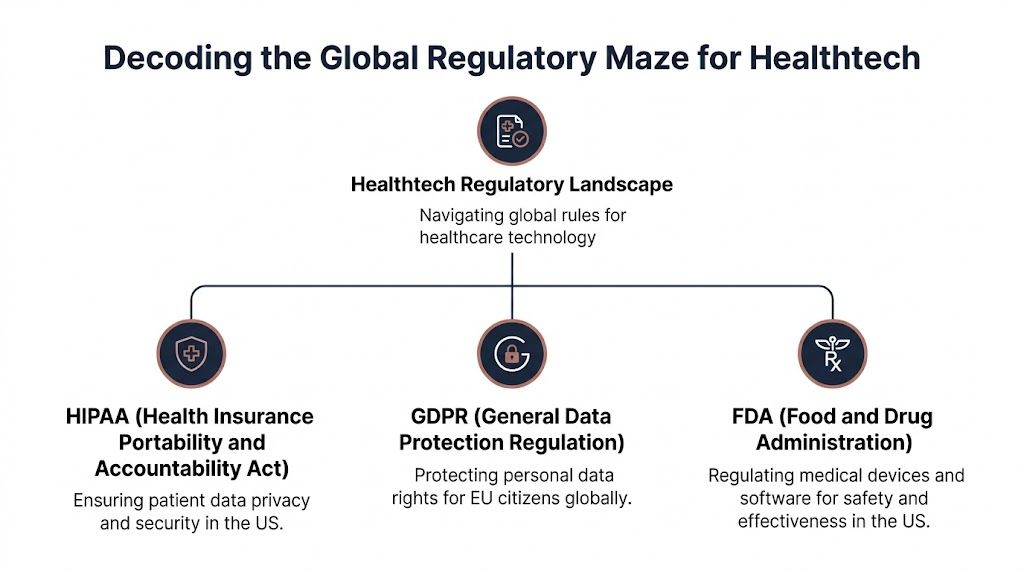

Decoding the Global Regulatory Maze for Healthtech

Regulation feels chaotic when teams read it as a legal stack instead of an engineering stack. Most frameworks ask versions of the same questions. What data are you handling? What can go wrong? What controls exist? What evidence proves those controls work? What happens after release?

For software teams, the key is to convert legal language into build decisions. That applies whether you’re delivering provider-facing tools, patient apps, operational platforms, or custom healthcare software development for regulated buyers.

What each framework means in practice

HIPAA matters when your system handles protected health information in the US healthcare ecosystem. Engineers should read it as an operational requirement for data access, auditability, incident response, vendor accountability, and technical safeguards around ePHI.

GDPR reaches into product architecture faster than many teams expect. If you process personal data tied to EU residents, you need to think about lawful basis, data minimization, retention, transparency, and user rights in the application design itself. Deletion workflows, consent records, export mechanisms, and processor controls can’t be afterthoughts.

FDA oversight for software matters when software functions cross into regulated medical use. At that point, your engineering system needs stronger validation logic, traceability, change control, and evidence around intended use and performance. Product claims become compliance inputs.

MDR and related EU medical device expectations raise similar implementation demands, especially around risk management, documentation, usability, and post-market control.

HITRUST is different. It isn’t a law, but buyers often use it as a trust and control benchmark. In practice, it can shape how enterprise sales cycles evaluate your security and compliance maturity.

Healthtech Regulatory Frameworks at a Glance

| Regulation | Primary Focus | Geographic Scope | Key Software Implications |
|---|---|---|---|
| HIPAA | Privacy and security of health information | United States | Access controls, audit logs, breach response, business associate governance |
| GDPR | Personal data rights and processing controls | European Union, with extraterritorial reach | Consent and lawful basis handling, deletion and export workflows, minimization, processor management |
| FDA | Safety and effectiveness of regulated software and devices | United States | Validation evidence, traceability, change control, documentation tied to intended use |
| MDR | Safety, risk, and lifecycle controls for medical products | European Union | Risk documentation, lifecycle evidence, usability and post-market discipline |
| HITRUST | Certifiable control framework used by buyers | Common in US healthcare procurement | Mapped controls, policy discipline, evidence collection, operational maturity |

The overlap that trips teams up

The mistake isn’t misunderstanding one framework. It’s assuming each framework needs a separate implementation. That creates duplicated controls, fragmented evidence, and conflicting processes.

A better approach is to build a shared control system:

- Identity and access controls that support least privilege across regions and roles

- Logging and audit design that captures both security events and compliance-relevant user activity

- Data lifecycle controls for collection, retention, export, deletion, and vendor transfers

- Validation and release evidence for features that affect care, decisions, or regulated outputs

- Incident workflows that can support different reporting and notification obligations

Teams usually fail here by mapping regulations to documents only. Auditors review documents, but incidents happen inside systems.

A second source of confusion is timing. Regulatory expectations are shifting around AI, privacy, and cross-border processing. For leaders tracking that broader direction, 2026 privacy law reckoning is a useful read because it captures how privacy obligations are tightening beyond narrow sector rules.

The architecture question CTOs should ask

Don’t start with “Which certification should we pursue first?” Start with this: What system of controls can survive product change, international expansion, and new AI features without forcing us to rebuild our platform?

That question leads to better choices. You’ll standardize evidence collection earlier. You’ll define data boundaries before implementation. You’ll reduce the number of exceptions engineers need to memorize. Above all, you’ll stop treating compliance as a separate lane.

Integrating Compliance into the AI-Enabled SDLC

The strongest healthcare teams don’t add compliance after coding. They build release pipelines that refuse non-compliant changes before those changes reach production.

That’s the practical meaning of shift-left compliance. Checks run inside the delivery pipeline through SAST, SCA, and IaC scanning tools, so compliance becomes an embedded governance mechanism instead of a periodic review, as described by Censinet’s guidance on HIPAA compliance in clinical software development.

Discovery and design

Most compliance debt stems from decisions teams make about what data to ingest, where to store it, which vendors to trust, and how AI features will interact with patient or operational data. If those decisions happen informally, every downstream control becomes harder.

At discovery, the engineering lead, product owner, and compliance or security owner should answer a few hard questions:

- What regulated data enters the system? Define ePHI, personal data, derived data, model inputs, model outputs, and support telemetry separately. If you mix them conceptually, you’ll mix them operationally.

- Which product claims create validation obligations? A workflow assistant is different from a diagnostic recommendation engine. Marketing language can lead to expanded regulatory expectations.

- Where are your trust boundaries? Draw boundaries around cloud services, AI APIs, storage layers, admin consoles, analytics tools, and support access paths.

- What evidence must exist at release? Decide this early. Otherwise teams ship features and scramble afterward for approvals, screenshots, and incomplete test records.

Development and pipeline enforcement

“Compliance by policy” usually fails at this stage. Written standards don’t stop a misconfigured deployment. Pipeline gates do.

A workable CI/CD pattern includes:

- SAST for code analysis to catch insecure patterns before merge

- SCA for dependency review to identify risky open-source components and licensing issues

- IaC scanning to block cloud configurations that violate policy

- Secret detection to stop credentials or tokens from entering repositories

- Build artifact controls so releases can be traced to approved commits and environments

One practical example from shift-left programs is blocking infrastructure templates that attempt to use services without valid Business Associate Agreements before deployment. That’s the type of rule teams remember because the pipeline enforces it consistently.

Build gates should reject non-compliant changes automatically. Engineers shouldn’t need to remember every rule under deadline pressure.
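As a rough illustration of the BAA rule above, a gate can be a small script that reads a parsed infrastructure plan and fails the build when a PHI-handling resource uses a service with no agreement on file. This is a minimal sketch under stated assumptions: the `Resource` shape, the `BAA_COVERED_SERVICES` allowlist, and the service names are hypothetical, and a real pipeline would read them from its IaC tooling and vendor records.

```python
from dataclasses import dataclass

# Hypothetical allowlist: services covered by a signed Business Associate Agreement.
BAA_COVERED_SERVICES = {"object_storage", "managed_postgres", "kms"}


@dataclass(frozen=True)
class Resource:
    name: str          # resource name from the infrastructure template
    service: str       # service identifier (illustrative values)
    handles_phi: bool  # set during design review, not inferred here


def find_baa_violations(resources, covered=BAA_COVERED_SERVICES):
    """Return resources that handle PHI on a service without a BAA on file."""
    return [r for r in resources if r.handles_phi and r.service not in covered]


def gate(resources):
    """Return a CI exit code: 0 lets the pipeline continue, 1 blocks it."""
    violations = find_baa_violations(resources)
    for r in violations:
        print(f"BLOCKED: {r.name} uses '{r.service}' for PHI without a BAA on file")
    return 1 if violations else 0


plan = [
    Resource("patient-db", "managed_postgres", handles_phi=True),
    Resource("transcripts", "speech_to_text_beta", handles_phi=True),
    Resource("marketing-site", "static_hosting", handles_phi=False),
]
exit_code = gate(plan)  # a CI step would pass this to sys.exit()
```

The useful property is that the rule lives in one place and returns a hard failure, so the decision does not depend on a reviewer remembering the vendor list.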

AI-specific checkpoints

AI features need extra discipline because they create compliance exposure in places many teams don’t monitor well. Prompt inputs, output persistence, fallback paths, fine-tuning data, model evaluation sets, and human review queues all create records and decision surfaces.

For AI-enabled healthtech delivery, add these checkpoints:

- Model data review so training, tuning, and evaluation datasets are approved for the intended use

- Prompt and response logging rules that define what can be stored, redacted, or excluded

- Human override design for workflows where AI output influences decisions

- Output classification so downstream systems know whether an AI response is advisory, administrative, or clinically sensitive

- Change review for model updates because a model swap can be a compliance-relevant product change even if the UI looks identical
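The prompt and response logging checkpoint can be sketched as a policy object plus a redaction step applied before anything is written. This is a sketch only: the `LogPolicy` fields and the regular expressions are illustrative assumptions, and pattern matching of this kind is not a complete de-identification method.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; real redaction needs a reviewed, tested rule set.
REDACTION_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
}


@dataclass(frozen=True)
class LogPolicy:
    store_prompt: bool    # may the (redacted) prompt be persisted?
    store_response: bool  # may the (redacted) model output be persisted?


def redact(text):
    """Replace every match of each pattern with a labeled placeholder."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{label}]", text)
    return text


def build_log_record(prompt, response, model_version, policy):
    """Build the record to persist, honoring the policy for each field."""
    record = {"model_version": model_version}  # always kept for traceability
    if policy.store_prompt:
        record["prompt"] = redact(prompt)
    if policy.store_response:
        record["response"] = redact(response)
    return record


record = build_log_record(
    prompt="Patient MRN: 12345678, SSN 123-45-6789, contact jane@example.com",
    response="Summary drafted.",
    model_version="summarizer-v3",  # hypothetical version label
    policy=LogPolicy(store_prompt=True, store_response=False),
)
```

Keeping the model version in every record, even when prompt and response are excluded, is what lets a team later answer which model produced a given output.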

If your team is operationalizing AI features, our guide on responsible AI is relevant because it connects governance requirements to practical product decisions rather than abstract policy.

Testing and release readiness

By the time a feature reaches staging, most compliance questions should already be answered. Testing then becomes proof, not discovery.

Use a release checklist that combines engineering and governance evidence:

| Release area | Evidence to verify |
|---|---|
| Security controls | Scan results reviewed, exceptions approved, secrets handled correctly |
| Data handling | Data flow matches approved design, storage and transfer paths validated |
| Access model | Role permissions tested, privileged paths reviewed |
| Logging | Audit events generated for sensitive actions and admin activity |
| AI behavior | Evaluation documented, output constraints checked, fallback behavior verified |
| Vendor use | Third-party services match approved contracts and processing scope |
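One way to make this checklist enforceable is to treat each row as a required evidence key and refuse promotion when any key has no linked artifact. The sketch below assumes a hypothetical release record (a plain dictionary) and evidence key names invented for illustration; the mapping to the checklist rows is an assumption, not a standard.

```python
# Hypothetical evidence keys, one per row of the release checklist.
REQUIRED_EVIDENCE = {
    "security_scan_reviewed",
    "data_flow_validated",
    "role_permissions_tested",
    "audit_events_verified",
    "ai_evaluation_documented",
    "vendor_scope_confirmed",
}


def missing_evidence(release, required=REQUIRED_EVIDENCE):
    """Return sorted evidence keys that have no linked artifact."""
    evidence = release.get("evidence", {})
    return sorted(key for key in required if not evidence.get(key))


def completeness(release, required=REQUIRED_EVIDENCE):
    """Fraction of required evidence items that are present (0.0 to 1.0)."""
    return 1 - len(missing_evidence(release, required)) / len(required)


release = {
    "id": "rel-example",  # placeholder identifier
    "evidence": {
        "security_scan_reviewed": "ticket/scan-report",
        "data_flow_validated": "docs/data-flow-review",
        "role_permissions_tested": "ci/rbac-tests",
        "audit_events_verified": "ci/audit-log-tests",
        "ai_evaluation_documented": "",  # empty link counts as missing
    },
}
gaps = missing_evidence(release)
ready = not gaps  # promote only when nothing is missing
```

The same function can feed a release evidence completeness metric, since it already reports which items are absent.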

Post-release handoff

A release isn’t complete when the code is deployed. It’s complete when monitoring, alerting, ownership, and evidence retention are all active.

That means defining who reviews alerts, who signs exceptions, who tracks policy drift, and how release evidence is stored for later audits. Without that handoff, even a clean pre-release process degrades fast in production.

Practical Risk Management and Documentation Practices

Risk management in healthcare software works best when it’s painfully concrete. Broad statements like “unauthorized access” or “data issue” don’t help engineers build controls. A useful risk program names the asset, the failure mode, the likely trigger, the affected user, the safeguard, the owner, and the proof.

That’s also why documentation matters. Not because auditors like paperwork, but because regulated systems need evidence that design decisions were deliberate and traceable. For teams delivering custom software development in healthcare, undocumented decisions almost always become rework later.

Build a living risk register

A risk register should live alongside product delivery, not inside a forgotten compliance folder. It needs to change when architecture changes, vendors change, features change, or AI behavior changes.

A useful register usually includes:

- Risk statement with a specific failure mode

- Affected asset or workflow

- Potential impact on privacy, security, safety, or operations

- Current controls

- Residual risk judgment

- Owner and review date

- Linked evidence such as test results, design decisions, or approved exceptions

If the same risk appears repeatedly across teams, create a reusable control pattern. Don’t make every squad rediscover the same safeguards.
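The register fields listed above map naturally onto a typed record that lives in the same repository as the product. The following is a minimal sketch, assuming hypothetical field names and a simple staleness rule (review date passed, or no linked evidence); real programs will have their own scoring and review cadence.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RiskEntry:
    statement: str       # specific failure mode, not a broad category
    asset: str           # affected asset or workflow
    impact: str          # privacy, security, safety, or operations
    controls: list       # current controls
    residual_risk: str   # residual risk judgment, e.g. "low"
    owner: str
    review_date: date
    evidence_links: list = field(default_factory=list)


def needs_attention(entries, today):
    """Return entries that are past their review date or have no linked evidence."""
    return [e for e in entries if e.review_date < today or not e.evidence_links]


register = [
    RiskEntry(
        statement="Support role can export full patient records from admin console",
        asset="admin console export endpoint",
        impact="privacy",
        controls=["RBAC on export", "export audit event"],
        residual_risk="medium",
        owner="platform-team",
        review_date=date(2026, 1, 15),
        evidence_links=["tests/export-rbac"],
    ),
    RiskEntry(
        statement="Prompt text containing ePHI is sent to a third-party model API",
        asset="summarization service",
        impact="privacy",
        controls=["redaction before send"],
        residual_risk="high",
        owner="ai-team",
        review_date=date(2026, 6, 1),
    ),
]
stale = needs_attention(register, today=date(2026, 3, 1))
```

Here the first entry is flagged because its review date has passed, and the second because no evidence is linked yet.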

Defense in depth for ePHI

Healthcare software needs layered safeguards, not one strong control and a lot of assumptions. Effective technical safeguards require a defense-in-depth architecture covering encryption in transit and at rest, role-based access controls, and detailed audit trails. The Technource HIPAA compliance checklist also notes that the HITECH Act holds software vendors directly accountable for violations when they act as business associates, which is why coordinated provider-vendor risk management matters.

In practice, that means engineering teams should verify three control layers together:

| Control layer | What to implement | What to prove |
|---|---|---|
| Data protection | Encryption in transit and at rest | Keys, transport protections, storage handling |
| Access control | RBAC, privileged access restrictions, access reviews | Role definitions, approval records, access test results |
| Observability | Audit logs for reads, writes, exports, and admin actions | Log coverage, retention, alerting, review workflows |

Audit tip: “Minimum necessary” isn’t just an access policy statement. It has to show up in permission design, screen design, APIs, and log review.
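To show how “minimum necessary” can appear in an API and not only in policy text, the sketch below filters a record down to the fields a role is allowed to see and writes an audit event for the read. The role names, field names, and in-memory audit list are assumptions for illustration; a production system would use its own authorization service and a durable log.

```python
# Hypothetical role-to-field mapping agreed during access design.
ROLE_FIELDS = {
    "billing": {"patient_id", "insurance_id", "balance"},
    "nurse": {"patient_id", "name", "allergies", "medications"},
}


def minimum_necessary_view(record, role, audit_log):
    """Return only the fields the role may see, and record the read."""
    allowed = ROLE_FIELDS.get(role, set())  # unknown roles see nothing
    view = {k: v for k, v in record.items() if k in allowed}
    audit_log.append(
        {
            "action": "read",
            "role": role,
            "patient_id": record.get("patient_id"),
            "fields": sorted(view),  # which fields were actually returned
        }
    )
    return view


patient = {
    "patient_id": "p-001",
    "name": "Example Patient",
    "allergies": ["penicillin"],
    "medications": [],
    "insurance_id": "ins-42",
    "balance": 120.0,
}
audit_log = []
billing_view = minimum_necessary_view(patient, "billing", audit_log)
```

Logging the returned field names, not just the fact of access, is what makes later log review able to confirm that the permission design held.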

Documentation that survives an audit

A lot of teams create documents for milestones and then never maintain them. That’s worse than having fewer documents, because stale documents create contradictions.

The core set usually includes:

- Architecture decisions that show where regulated data flows and why

- Risk assessments tied to actual features and environments

- Requirements and traceability records for regulated functionality

- Validation evidence for security, critical workflows, and AI behavior where applicable

- Change records and exception approvals

- Design history evidence when the product falls under device-style scrutiny or stronger lifecycle expectations

The point isn’t to maximize paper. The point is to create a record that explains why the system looks the way it does and proves the controls still match reality.

Choosing Your Tooling and Automation Stack

Tooling only helps when it fits your control model. Buying five security platforms and one governance dashboard doesn’t produce healthcare software compliance engineering on its own. It often produces duplicate findings, noisy alerts, and engineers who stop paying attention.

A better stack is opinionated. It maps tools to delivery stages, evidence requirements, and ownership boundaries. If you’re adding AI to the mix through internal builds or AI development services, the stack also has to handle model-related governance, not just code scanning.

What belongs in the core stack

Five categories of tooling are typically required:

- Code security tools: SAST platforms catch insecure coding patterns before release. They’re useful, but only if teams tune rules and suppress noise responsibly.

- Dependency governance: SCA tools matter because regulated products rarely run on first-party code alone. Dependencies create security, licensing, and patching risk.

- Infrastructure policy enforcement: IaC scanning tools stop drift before deployment. In healthtech, these are often more valuable than late-stage environment reviews because cloud misconfiguration is a repeat offender.

- Cloud and runtime visibility: You need signals from real environments, not just repositories. Runtime monitoring, identity analytics, and configuration monitoring are what catch drift after release.

- Governance and evidence systems: Platforms like Drata and Vanta can help centralize evidence collection and audit workflows when integrated well. Teams also explore focused directories like Workwise Compliance when comparing operational compliance tools and workflows.

AI is useful and risky at the same time

Automation via compliance software can reduce manual errors by up to 40%, but AI integration can also create compliance debt if it isn’t designed in from discovery. Generative AI workflows can introduce unlogged data flows, and 80% of current compliance guides don’t address that gap, according to CodeBridge’s healthcare software engineering article.

That trade-off changes how I evaluate tools. I don’t ask only whether a tool automates review. I ask whether it preserves traceability. Can it show why a decision was made? Can it prove what data was processed? Can it separate suggestions from approvals?

What works and what doesn’t

What works

- Toolchains that feed findings into pull requests, release approvals, and ticketing systems

- Narrow, enforceable policies with named owners

- One source of truth for exceptions and evidence

- Cloud governance patterns aligned with production architecture

What doesn’t

- Standalone scanners no one reviews

- AI assistants plugged into regulated workflows without logging rules

- Security dashboards that don’t map to release decisions

- Governance programs with no runtime feedback

For teams modernizing cloud controls, our guide on governance in the cloud is worth reading because cloud posture and compliance posture are usually the same operational problem.

One practical option in this space is to combine established scanners and governance tools with implementation support from providers offering cyber compliance solutions, product engineering services, or a structured AI transformation framework. The value isn’t the label. It’s whether the stack is wired into delivery and evidence generation.

KPIs for Audit Readiness and Continuous Monitoring

Production drift breaks compliance faster than failed paperwork. In healthtech, the gap between an approved release and the system patients, clinicians, and support teams use can open within hours if access rules change, models are updated, or a regional deployment skips a control.

That is why audit readiness has to be measured in production, not inferred from pre-release reviews. For AI-enabled products built by global teams, static checklists are too slow. The control set has to keep watching after deployment, and the evidence has to be generated continuously across code, infrastructure, access, data handling, and model operations.

KPIs that actually predict audit pain

Useful compliance KPIs show whether controls are holding under real operating conditions. They should help an engineering leader answer three questions quickly. What drifted, who owns it, and how long has it been exposed?

I usually recommend a small set of operational metrics tied to release decisions and runtime behavior:

- Mean time to remediate compliance-relevant security findings

- Open policy exceptions, grouped by severity, owner, and age

- Coverage of audit logging for sensitive user and admin actions

- Privileged access review completion rate

- Production configuration drift events against approved baselines

- Release evidence completeness for regulated changes

- Time to triage and close compliance-relevant alerts

- Model or ruleset changes deployed without linked approval records

The last metric matters more now than it did two years ago. Teams are shipping AI-assisted features, prompt changes, model configuration updates, and automated decision support outside the older application release rhythm. If those changes are not tied back to approvals, validation records, and monitoring thresholds, the audit trail breaks even when the feature works as intended.
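Two of the metrics above are simple enough to compute directly from ticket and deployment exports. This is a sketch under assumptions: the dictionary shapes for findings and changes are hypothetical, and real data would come from the team's ticketing system and deployment log.

```python
from datetime import datetime, timedelta


def mean_time_to_remediate(findings):
    """Mean of (closed - opened) across closed findings; None if none are closed."""
    durations = [f["closed"] - f["opened"] for f in findings if f.get("closed")]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


def unapproved_changes(changes):
    """IDs of model or ruleset changes deployed without a linked approval record."""
    return [c["id"] for c in changes if not c.get("approval_id")]


findings = [
    {"opened": datetime(2026, 1, 1), "closed": datetime(2026, 1, 3)},
    {"opened": datetime(2026, 1, 1), "closed": datetime(2026, 1, 5)},
    {"opened": datetime(2026, 1, 2), "closed": None},  # still open, excluded
]
changes = [
    {"id": "prompt-update-a", "approval_id": "APPR-1"},
    {"id": "model-config-b", "approval_id": None},
    {"id": "ruleset-c"},  # no approval field at all
]
mttr = mean_time_to_remediate(findings)
orphans = unapproved_changes(changes)
```

Open findings are excluded from the mean here, so this number should be read alongside the count and age of open items to avoid a flattering average.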

Some common metrics are still useful for governance, but they are weak leading indicators. Training completion rates and policy acknowledgments do not tell a CTO whether production access has drifted in Singapore, whether an EU data flow changed, or whether an LLM-enabled workflow started processing data outside its approved scope.

What good monitoring looks like in practice

A workable pattern is a single operational view that pulls evidence from CI/CD, cloud posture tools, IAM, ticketing, logging, and incident response. The dashboard itself is not the control. The control is the combination of detection logic, ownership, escalation rules, and a record of what happened next.

For example, a support escalation grants temporary object storage access to resolve a customer issue. The ticket is valid. The engineer is authorized. The problem starts when the temporary role remains active after the incident closes, or when the access scope exceeds the approved support baseline in one region but not another. A runtime rule should catch that change, attach it to the ticket and identity record, alert the owner, and start the remediation clock automatically.
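The runtime rule in this example can be sketched as a pure function over an access grant, the approved scope for that region, and the current time. The `AccessGrant` fields and permission strings are hypothetical; alerting and starting the remediation clock are left to whatever system consumes the findings.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class AccessGrant:
    identity: str
    role: str
    scope: frozenset                       # permissions actually granted
    ticket_id: str                         # ticket that justified the grant
    ticket_closed_at: Optional[datetime]   # None while the ticket is open
    revoked_at: Optional[datetime]         # None while access is still active


def evaluate(grant, approved_scope, now):
    """Return human-readable findings for one temporary access grant."""
    findings = []
    ticket_closed = grant.ticket_closed_at is not None and grant.ticket_closed_at <= now
    if ticket_closed and grant.revoked_at is None:
        findings.append("access still active after ticket closed")
    extra = grant.scope - approved_scope
    if extra:
        findings.append("scope exceeds baseline: " + ", ".join(sorted(extra)))
    return findings


grant = AccessGrant(
    identity="support-engineer",
    role="support-temp",
    scope=frozenset({"bucket:read", "bucket:write"}),
    ticket_id="TICKET-EXAMPLE",
    ticket_closed_at=datetime(2026, 3, 9, 17, 0),
    revoked_at=None,
)
results = evaluate(
    grant,
    approved_scope=frozenset({"bucket:read"}),  # regional support baseline (assumed)
    now=datetime(2026, 3, 10, 9, 0),
)
```

Passing the approved scope in as an argument is a deliberate choice: the same rule can then run against a different baseline per region without changing the logic.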

That kind of workflow is what separates continuous compliance from dashboard theater.

For teams aligning healthtech controls with broader assurance work, the Bridge Global guide to SOC 2 compliance requirements is a useful reference for mapping evidence, monitoring, and control ownership across audits.

Leaders who want to benchmark these operating patterns against shipped programs can also review relevant client cases. The value is not the presentation. It is seeing how release evidence, runtime monitoring, and exception handling are wired into day-to-day delivery.

Building Your Compliance-First Engineering Culture

Compliance culture shows up in ordinary delivery decisions. It shows up in who can merge a pull request, how teams review AI features, who owns data retention rules, and whether production exceptions are treated as engineering work or paperwork for later.

CTOs usually see the culture problem when one team ships fast and another team has to clean up the evidence trail, privacy gaps, or regional control mismatch. That operating model fails in healthtech. Engineering, security, product, QA, and operations need one definition of done that includes control design, testability, and audit evidence. Otherwise, global teams create local shortcuts, and those shortcuts surface during customer security reviews, regulator questions, or incident response.

A good compliance-first culture reduces rework because teams make the hard calls earlier. They classify data before building AI features on top of it. They decide which prompts, outputs, and model interactions belong in logs, and which do not. They document intended use before a feature drifts into territory that changes its regulatory profile. Those choices slow one sprint and save months later.

For distributed organizations, the practical question is governance. A well-run dedicated development team can build regulated software effectively if the team works inside the same controls as everyone else. That means shared pull request standards, common threat and privacy review criteria, one exception process, and the same release evidence expectations across regions. If offshore engineers write code while a headquarters compliance group tries to add controls after the fact, the result is predictable. Missing context, weak traceability, and expensive remediation.

The AI piece changes the culture requirement. Static checklists are not enough once teams use copilots, third-party models, automated test generation, or production monitoring that triggers policy decisions. Teams need habits that support continuous compliance in the live system. Engineers should expect policy checks in CI, runtime alerts tied to owners, and periodic review of model behavior, data flows, and vendor changes as part of normal operations.

The goal is simple. Build a team that treats compliance as part of shipping quality software, not as a separate approval function. Those teams release with fewer surprises, handle audits with less disruption, and scale into new markets without rebuilding the delivery system each time.

Frequently Asked Questions

How early should compliance work start in a healthtech product?

It should start in discovery. Data classification, intended use, vendor choices, AI workflow design, and access models all create downstream obligations. If you wait until QA or pre-launch review, the team usually ends up redesigning storage, logging, permissions, or documentation under pressure.

How should offshore or distributed teams handle healthcare software compliance engineering?

Use one delivery system with shared controls. That means the same backlog definitions, pull request standards, evidence rules, release criteria, and risk register across locations. Distributed teams fail when one group writes code and another group tries to “add compliance” later. They succeed when compliance tasks are part of normal engineering work.

What’s the biggest compliance mistake with generative AI in healthcare software?

Untracked data flow. Teams add prompts, assistants, summarization, or copilots without deciding what gets logged, retained, redacted, reviewed, or sent to third-party services. That creates exposure fast. Treat prompt paths and model outputs as first-class data flows.

Do small and mid-market healthtech companies need the same controls as large enterprises?

Not always the same implementation depth, but the same core questions apply. You still need risk-based safeguards, access control, auditability, and evidence that your controls work. The difference is usually tooling complexity and process scale, not whether the controls matter.

What should a CTO ask a delivery partner before starting a regulated healthcare build?

Ask how they handle data flow mapping, CI/CD compliance gates, cloud configuration review, audit logging, validation evidence, AI model governance, and production monitoring. Ask to see how exceptions are approved and tracked. Ask how they work when requirements change mid-project. If the answers stay abstract, the delivery model probably isn’t ready for regulated healthtech.

If you’re building a regulated product and need a delivery model that treats compliance as part of engineering, not a post-launch cleanup exercise, Bridge Global can help you structure the work correctly from discovery through production. That can include platform design, AI governance, secure delivery workflows, and the practical controls needed for modern healthcare software compliance engineering.