Optimize Digital Health Software Engineering Services

Healthcare organizations are increasing digital spend fast, but buying engineering capacity is still where many teams make expensive mistakes. The problem is rarely a missing feature. It is poor operational fit between product architecture, compliance controls, integration work, and the engagement model used to deliver all of it.

That is the lens that matters when evaluating digital health software engineering services. AI features, cloud infrastructure, interoperability, cybersecurity, quality systems, and delivery staffing do not operate as separate workstreams in a real healthtech environment. They interact every week. A decision to add an AI triage layer can change PHI handling, audit logging, validation scope, model monitoring, and the type of engineers you need on the team. The same pattern is playing out across remote care, patient apps, clinical workflows, and even human trials for AI-powered drugs.

I have seen strong product teams lose months because they picked a vendor that could build screens and APIs, but could not handle HIPAA constraints, data mapping with EHRs, release documentation, or on-call accountability after launch.

A capable engineering partner ships software that works inside the operating reality of healthcare. That means clean integrations, traceable security controls, predictable delivery, and code a second team can maintain without a rescue project six months later.

The Digital Revolution in Healthcare Is Here

The growth numbers are large, but the more useful signal is what they represent. Digital health is no longer funded as an experiment at the edge of the business. It is being treated as operating infrastructure for care delivery, patient engagement, reimbursement, and internal efficiency, as noted earlier in the market data.

Healthcare organizations now expect software to carry production responsibility. Patient portals, remote monitoring, care coordination tools, workflow automation, digital therapeutics, and AI-assisted clinical systems increasingly sit inside daily operations. Once a product reaches that point, engineering quality starts affecting staffing pressure, compliance exposure, revenue capture, and clinical trust.

Why demand keeps accelerating

Demand is rising because healthcare buyers are trying to solve operating problems, not because digital has become fashionable again.

Patients expect responsive apps, remote access, and fewer breaks between channels. Clinical and administrative teams want less duplicate entry, fewer phone-based workarounds, and cleaner handoffs across systems. At the same time, cloud platforms, modern APIs, connected devices, and applied AI have made more of these workflows feasible to build and maintain.

Wearables are a good example. Smartwatches, fitness trackers, and medical-grade sensors can feed remote monitoring programs, but only if the engineering work covers device data quality, alert routing, identity management, consent, and documentation. Without that operational layer, a connected device is just another noisy input.

Why generic engineering isn’t enough

A vendor can be strong at web delivery and still be the wrong fit for healthtech. The difficult part in healthtech is not shipping a feature into production. It is shipping software that can handle PHI correctly, integrate with EHRs and partner systems, support audit trails, survive security review, and remain maintainable after the initial release.

That is why service scope matters as much as technical skill. AI work changes data classification, retention rules, monitoring duties, and validation scope. Interoperability work affects release planning and support ownership. A dedicated team model can improve velocity, but only if the team also includes the security, QA, DevOps, and compliance coordination needed to run a regulated product. In practice, these decisions rise or fall together.

The same pattern is visible across the frontier of the industry. If you are tracking what comes next, the progress in human trials for AI-powered drugs shows how quickly software, data, and regulatory discipline are becoming intertwined.

A credible partner brings those threads together in delivery. Teams offering custom software development for healthcare products should be able to explain how architecture, compliance controls, integration planning, and engagement structure will work as one operating model, not as separate promises in a sales deck.

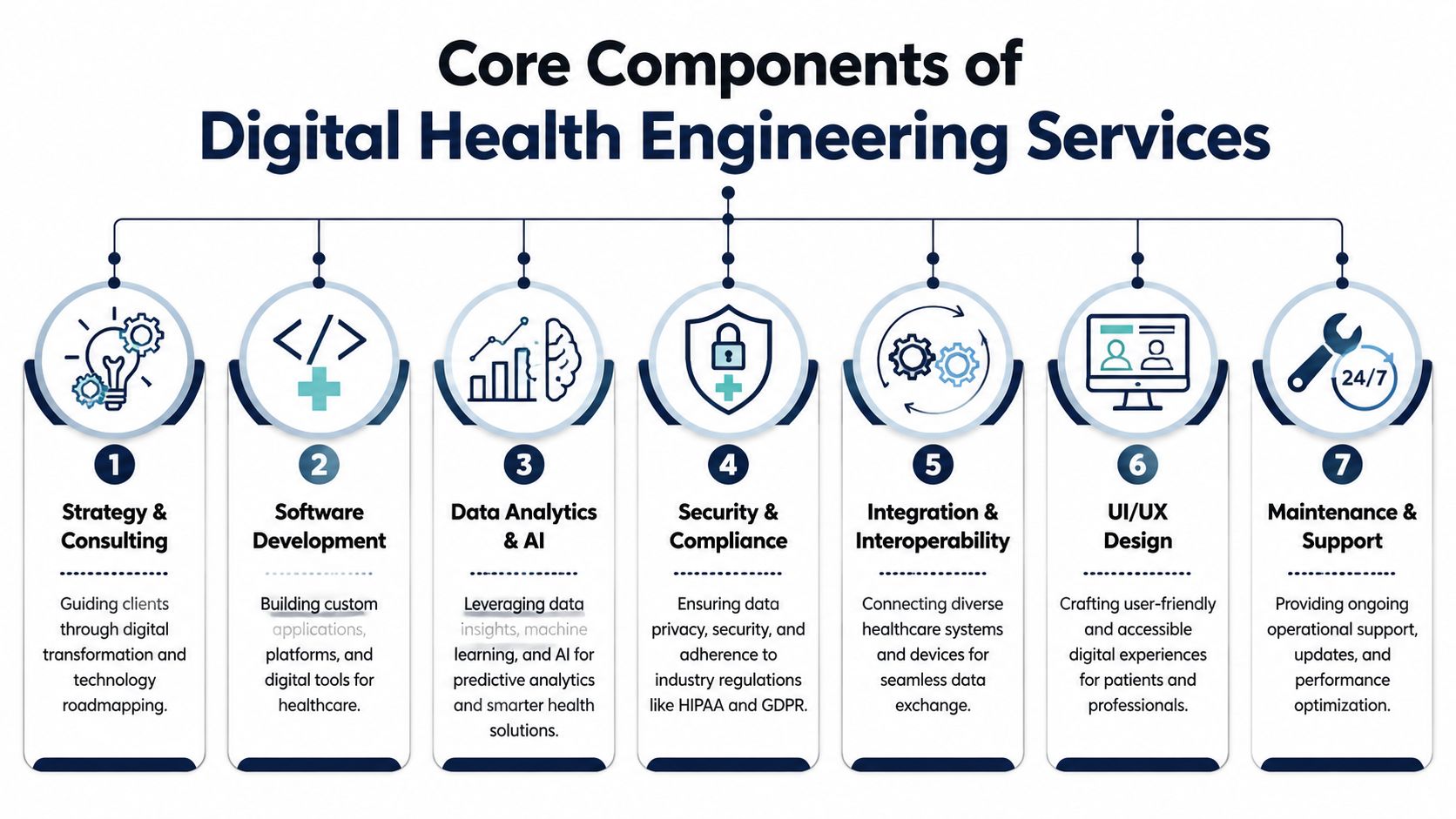

Core Components of Digital Health Engineering Services

Products that succeed in digital health usually win on operations, not feature count. The engineering partner matters because every core service (AI, interoperability, cloud, QA, UX, and support) has to fit the way a regulated product is built, released, monitored, and improved.

AI and data services tied to real workflows

AI work in healthcare earns its keep only when it removes friction from a defined workflow. That could mean clinical summarization that cuts chart review time, triage support that routes cases faster, anomaly detection that flags risk earlier, or patient engagement logic that improves follow-through. Teams that start with the model instead of the workflow usually create validation, monitoring, and liability problems that they did not price into the project.

This is also where engineering scope and compliance start to merge. Model training changes what data is collected, how long it is retained, which environments can access it, and what audit evidence the product team needs later. If a partner cannot explain model governance, human review points, fallback behavior, and data provenance in operational terms, the AI offering is still at a slide-deck stage.

Vendor due diligence extends beyond your direct development partner. If AI features rely on analytics providers, annotation firms, model APIs, or third-party data vendors, those dependencies need review for security, contractual exposure, and data handling fit.

Cloud and DevOps, built for controlled delivery

Health platforms need scalable infrastructure, but they also need disciplined releases. I look for teams that treat cloud architecture as part of quality management, not a hosting decision.

A capable partner should be able to show how they set up environment segregation, deployment approvals, access controls, backup and recovery, and infrastructure versioning. Those choices affect release predictability, incident response time, and the effort required to pass customer security review. They also affect cost. Heavy environment sprawl improves control, but it can raise hosting and maintenance overhead, so the right design depends on product risk and customer expectations.

Good DevOps in healthtech reduces surprises. It limits configuration drift, makes failures easier to trace, and gives product teams clearer evidence when they need to explain what changed, when, and why.

Interoperability that can survive production reality

Many vendors say they support healthcare integration. The useful question is whether they can keep integrations stable after go-live.

Interoperability work includes data mapping, terminology handling, patient identity resolution, consent boundaries, version drift, retry logic, and exception workflows for bad or incomplete records. FHIR helps, but FHIR by itself does not solve the operational mismatch between systems. I have seen projects stall because the partner understood the resource model yet had no plan for reconciling edge cases across scheduling, medication, and encounter data.

Strong teams explain the support model up front. Who owns failed message triage? Who updates mappings when a partner system changes? How is integration quality monitored in production? Those answers matter more than a generic claim about APIs.
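To make the retry and exception-workflow point concrete, here is a minimal Python sketch. It assumes a hypothetical minimum set of required fields, a hypothetical `send` callable for the downstream system, and an in-memory exception list standing in for a real triage queue. It is an illustration of the pattern, not a production integration engine.

```python
from dataclasses import dataclass, field

# Hypothetical minimum fields for an encounter-like record (assumption).
REQUIRED_FIELDS = ("patient_id", "encounter_id", "start")


class TransientError(Exception):
    """Raised by a sender when the downstream system is temporarily unavailable."""


@dataclass
class IntegrationResult:
    delivered: list = field(default_factory=list)
    # (record, reason) pairs that a named owner is expected to triage.
    exceptions: list = field(default_factory=list)


def missing_fields(record):
    """Return the required fields that are absent or empty in the record."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


def process(records, send, max_attempts=3):
    """Validate each record, retry transient failures, and park the rest."""
    result = IntegrationResult()
    for record in records:
        missing = missing_fields(record)
        if missing:
            # Bad or incomplete records go to the exception list, not downstream.
            result.exceptions.append((record, "missing: " + ", ".join(missing)))
            continue
        for attempt in range(1, max_attempts + 1):
            try:
                send(record)
                result.delivered.append(record)
                break
            except TransientError:
                if attempt == max_attempts:
                    result.exceptions.append((record, "delivery failed after retries"))
    return result
```

A real implementation would likely add backoff between attempts and persist the exception list, but even this small shape forces the ownership question: someone has to read that list.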

Security engineering inside the delivery model

Security work should be visible in architecture, backlog design, code review, testing, and support procedures. If it appears only as a pre-launch checklist, the partner is asking you to absorb avoidable risk later.

That is why the delivery process matters as much as the code itself. A disciplined approach to custom healthcare software development services should show how secure coding, auditability, access control, and release gates are built into day-to-day execution. In practice, this affects velocity in a good way. Teams with clear controls spend less time reopening preventable issues during enterprise procurement, penetration testing, or integration rollout.

UX that fits clinical and patient behavior

Adoption problems often look like product issues, but they start as workflow mismatches. A patient app that assumes high literacy, a clinician screen that hides context, or an operations console that buries exceptions will create workarounds fast.

Good healthtech UX work accounts for interruptions, shared devices, caregiver participation, accessibility needs, and inconsistent connectivity. It also recognizes that each user group measures success differently. Clinicians want fewer clicks and clearer context. Patients want trust, clarity, and simple next steps. Operations teams want visibility into failures before they become support tickets.

QA and validation matched to product risk

Testing depth should follow product risk, integration complexity, and data sensitivity. A symptom checker, remote monitoring platform, internal care ops tool, and patient billing workflow do not need the same validation plan.

This is the conversation worth having with a vendor:

| Area | Weak answer | Strong answer |
|---|---|---|
| Interoperability | "We can connect via API" | "We model with FHIR resources, validate mappings, and test terminology consistency" |
| Security | "We follow best practices" | "We embed security scanning, role controls, auditability, and release gates in delivery" |
| UX | "We make it intuitive" | "We test against clinician, patient, caregiver, and admin workflows" |
| Maintenance | "We offer support" | "We define ownership for monitoring, patching, integration drift, and roadmap updates" |

The through-line is operational integration. Core services are not separate boxes to buy one by one. AI affects compliance scope. Interoperability affects support ownership. DevOps affects audit readiness. QA affects release confidence and enterprise sales. The right partner can explain how those pieces work together before the contract is signed.

The Regulatory Gauntlet: Ensuring Compliance in Healthtech

Most healthtech delays don't happen because a team can't code. They happen because someone treated compliance as documentation instead of engineering. In digital health software engineering services, regulation isn't a side lane. It directly shapes architecture, release management, vendor selection, and support obligations.

What compliance changes in the codebase

Teams often talk about HIPAA, GDPR, HITRUST, or device standards as if they're labels you apply after launch. That isn't how this works. Compliance affects data minimization, storage design, role-based access, consent handling, encryption choices, logging, retention rules, and the way environments are segmented.

For software that may fall under medical device expectations, lifecycle discipline becomes even more important. According to Tata Elxsi on healthcare software engineering, integrating SAST and penetration testing within CI/CD pipelines can detect up to 92% of vulnerabilities before deployment, and breaches cost U.S. providers an average of $10.1M per incident. The point isn't the tooling alone. The point is that secure delivery has to be embedded in the pipeline, not deferred to a last-minute review.
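One way to picture security embedded in the pipeline is a release gate that reads scanner findings and blocks deployment above a severity threshold. The sketch below is a simplified illustration in Python. The finding format, the severity names, and the `release_gate` function are assumptions for the example, not the output of any specific SAST tool.

```python
# Hypothetical severity scale; real scanners use their own labels.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def release_gate(findings, block_at="high"):
    """Return (passed, blocking) for a list of scan findings.

    Each finding is assumed to be a dict with "id" and "severity" keys.
    """
    threshold = SEVERITY_RANK[block_at]
    blocking = [f for f in findings if SEVERITY_RANK[f["severity"]] >= threshold]
    return (not blocking, blocking)


# Usage: a pipeline step would fail the build when passed is False.
passed, blocking = release_gate(
    [{"id": "F-1", "severity": "medium"}, {"id": "F-2", "severity": "critical"}]
)
```

The threshold itself is a governance decision. Where it sits, and who can override it, belongs in the same conversation as release approvals.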

Where buyers underestimate risk

The weak spots are usually operational.

- Third-party dependencies create hidden exposure if libraries, APIs, and subprocessors aren't reviewed carefully.
- Data sharing assumptions break down when business teams promise integrations before engineers map the compliance implications.
- Support processes fail when nobody owns patching, audit evidence, access reviews, or incident handling after go-live.

If you want a practical example of how careful organizations document vendor exposure, Numeric's public view of third-party data vendors is worth reviewing. It's not a healthcare template, but it shows the level of clarity buyers should expect when vendors handle sensitive data.

Why generic vendors struggle here

A generalist dev shop may build competent software and still create unacceptable healthtech risk. Common warning signs include shallow answers on audit logging, no clear policy on business associate obligations, vague security testing language, or an inability to explain how release controls interact with regulated workflows.

Compliance problems usually start as architecture shortcuts. They only look like legal problems later.

A healthcare-aware engineering partner should be able to discuss threat modeling, role boundaries, encryption in transit and at rest, least-privilege access, evidence capture for audits, and how security controls affect delivery cadence. They should also be comfortable showing how those controls fit the SDLC instead of treating them as external blockers.
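As a rough illustration of role boundaries, least-privilege access, and evidence capture working together, the Python sketch below records every authorization decision, allowed or denied. The role map, the action names, and the in-memory `audit_log` list are hypothetical placeholders. A real system would load policy from configuration and write to an append-only store.

```python
import json
from datetime import datetime, timezone

# Hypothetical role-to-permission map (assumption for this example).
ROLE_PERMISSIONS = {
    "clinician": {"read_chart", "write_note"},
    "billing": {"read_invoice"},
}

# Stand-in for an append-only audit store.
audit_log = []


def authorize(user_id, role, action, resource_id):
    """Check the action against the role and record the decision either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        "role": role,
        "action": action,
        "resource": resource_id,
        "allowed": allowed,
    }))
    return allowed
```

Logging denials as well as grants is the design choice worth noticing. Audit questions are often about who tried to reach a record, not only who succeeded.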

For teams that need a more structured view of balancing speed with governance, this guide on digital health speed and compliance is relevant because it frames compliance as a delivery design problem, not just a legal review item.

What good looks like

You don't need a vendor with the longest certification list. You need one with disciplined habits.

Look for these signals in discovery conversations:

- They ask about data flows early, not after scoping is done.
- They separate risk by workflow because patient messaging, clinical support, billing, and analytics don't carry the same implications.
- They document security controls in operational terms, including who monitors, who approves, and who responds.
- They can explain trade-offs, such as where stricter controls add friction and where that friction is justified.

That's what reduces audit pain and lowers the odds of expensive surprises.

Structuring Your Partnership Engagement and Pricing Models

Commercial structure decides whether a capable engineering team produces momentum or procurement friction. In healthtech, the wrong model usually shows up first as delivery noise, then as missed releases, compliance rework, and budget arguments. The partner is not just supplying developers. They are joining an operating model that has to absorb clinical feedback, security review, integration work, and audit pressure without stalling.

I look at engagement models through one question: who carries delivery risk as the product changes?

Fixed price works for bounded work, not evolving products

A fixed price can work well when the work package is narrow and testable. A contained integration, a redesign of a patient intake flow, a defined remediation effort, or a short MVP with explicit acceptance criteria can fit this model.

The problem starts when uncertainty is real, and everyone pretends it is not.

If your team is still validating provider workflows, resolving what data can move across systems, or deciding how patient consent should affect product behavior, a fixed price creates the wrong incentives. The vendor protects the scope. Your team pushes for necessary changes. Every decision turns into a change request instead of a delivery decision. In regulated products, that tension gets worse because compliance and security findings often reshape implementation details after discovery begins.

Time and materials fit learning cycles, if governance is strong

Time and materials is usually the better fit for products that are still finding their shape. That includes care coordination platforms, remote monitoring products, internal clinical tools, and analytics products, where user behavior will change the roadmap quickly.

The trade-off is management overhead. T&M gives you flexibility, but it also exposes weak operating discipline. Without clear backlog ownership, release criteria, and escalation paths for clinical and legal questions, you are paying for activity rather than progress.

Teams that use T&M well usually have three things in place:

- A named product owner who can make priority calls quickly
- Weekly delivery visibility on burn, risks, and release confidence
- Clear decision rights across product, engineering, security, and compliance

That operating model matters as much as the rate card. A partner that can build AI-assisted workflows or interoperability layers still becomes expensive if they cannot show how scope changes are evaluated, approved, and documented.

Dedicated teams often make the most sense for long-lived platforms

For products expected to run and evolve over multiple release cycles, continuity usually beats transactional staffing. A dedicated team keeps the domain context in place. That matters in healthcare because product knowledge is tied to workflow exceptions, integration behavior, quality controls, and the history behind compliance decisions.

The commercial model has a direct connection to risk control. A stable team is more likely to understand why a triage rule was implemented a certain way, how PHI is handled across services, what evidence an audit trail needs to retain, and which integration failures create downstream operational issues for clinicians or support staff. Rebuilding that context repeatedly is expensive.

Digital transformation consulting can be useful at the start, but many organizations eventually need a standing team that owns ongoing delivery, platform hardening, support transitions, and selective modernization. That is often the point where dedicated capacity stops looking like a premium and starts looking like cost control.

Hybrid models match how healthtech products actually mature

A single engagement model rarely fits the full product lifecycle. Early work may begin with short discovery and architecture planning. A defined first release may be priced as a scoped project. Once the platform proves demand and integration complexity becomes clearer, the relationship often shifts to a retained team.

That progression is healthy. It reflects how risk changes over time.

The key is to assess whether the partner can operate across those phases without dropping quality. If they sell strategy separately from delivery, ask who owns the handoff. If they offer a dedicated team, ask how they preserve system knowledge, maintain release discipline, and keep compliance artifacts current as the roadmap shifts. If AI is on the roadmap, ask how model work, data governance, validation, and HIPAA obligations are handled inside the same delivery process rather than as a side stream.

A practical way to frame the choice:

| Situation | Better fit | What to watch |
|---|---|---|
| Well-defined work with stable requirements | Fixed price | Change control can overwhelm delivery if discovery is incomplete |
| Product development with active learning and stakeholder input | Time and materials | Weak governance turns flexibility into cost drift |
| Ongoing platform with releases, support, integrations, and compliance upkeep | Dedicated team | Team continuity only pays off if ownership and metrics are clear |

The best model is usually the one that matches your uncertainty, your internal decision speed, and the operational burden of the product after launch. Cheap on paper is often expensive in production.

Building a Future-Proof and Scalable Tech Stack

A future-proof healthtech stack isn't the one with the most modern logos. It's the one that supports your clinical and operational reality without making later changes prohibitively expensive. Teams get into trouble when they optimize for speed in one dimension and inherit fragility everywhere else.

Start with the product shape

A remote patient monitoring platform, an internal clinical workflow app, and a payer analytics tool shouldn't share the same stack by default. Each product has different latency needs, user profiles, integration patterns, and risk concerns.

For example, a clinician-facing dashboard often benefits from a frontend stack that handles dense state and complex component behavior well. A data-heavy backend may lean on Python where analytics and AI workflows matter. A mobile patient app may favor frameworks with solid offline support, so the app tolerates uneven connectivity and a wide range of devices. The stack should follow the workflow, not the other way around.

Design around interoperability early

In healthcare, architecture decisions tend to age badly when teams bolt interoperability on after core product development. If the platform will exchange records, lab results, medication data, encounters, or patient-generated health data, the data model and API design need to reflect that from day one.

That means asking early questions like:

- Will FHIR be the system contract or an adapter layer?
- Where does terminology normalization happen?
- How will identity, consent, and audit events move across services?
- What breaks if an external system changes the payload structure or timing?

A future-proof stack isn't just scalable under load. It's resilient under integration change.

If your architecture can't tolerate external system inconsistency, it isn't mature enough for healthcare.
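The adapter-layer option from the questions above can be sketched in a few lines of Python. The example maps a FHIR Patient resource (using the standard `resourceType`, `id`, `name`, and `birthDate` elements) into a hypothetical internal model, tolerating missing optional data and flagging fields it does not map instead of failing. `InternalPatient` and `from_fhir_patient` are illustrative names, not part of any FHIR library.

```python
from dataclasses import dataclass, field
from typing import Optional

# The subset of Patient elements this illustrative adapter knows how to map.
KNOWN_KEYS = {"resourceType", "id", "name", "birthDate"}


@dataclass
class InternalPatient:
    source_id: str
    family_name: Optional[str]
    given_names: list
    birth_date: Optional[str]
    warnings: list = field(default_factory=list)


def from_fhir_patient(resource):
    """Map a FHIR Patient dict to the internal model, collecting warnings."""
    if resource.get("resourceType") != "Patient":
        raise ValueError("expected a Patient resource")
    if not resource.get("id"):
        raise ValueError("Patient resource has no id")

    warnings = []
    names = resource.get("name") or []
    first = names[0] if names else {}
    if not names:
        warnings.append("no name supplied")

    # Surface payload drift instead of silently dropping new fields.
    unmapped = sorted(set(resource) - KNOWN_KEYS)
    if unmapped:
        warnings.append("unmapped fields: " + ", ".join(unmapped))

    return InternalPatient(
        source_id=resource["id"],
        family_name=first.get("family"),
        given_names=list(first.get("given") or []),
        birth_date=resource.get("birthDate"),
        warnings=warnings,
    )
```

Keeping the mapping in one place means that when a partner system changes its payload, the change shows up as a warning to review, not as a failure deep inside core services.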

Cloud decisions need legal and operational input

AWS, Azure, and GCP can all support serious healthcare workloads. The right choice often comes down to the specific services you need, your team's operating familiarity, regional data considerations, existing enterprise contracts, and how cleanly the platform can enforce security controls.

The mistake is delegating the cloud decision entirely to engineering or entirely to procurement. Product, legal, security, and platform owners all need a say. The choice affects not just hosting, but observability, IAM patterns, data services, CI/CD design, and disaster recovery processes.

AI adds another architecture layer

If AI is part of the roadmap, don't isolate it as a lab experiment. Treat it like a product capability with governance requirements. You need clarity on where inference runs, how prompts or model inputs are logged, how sensitive data is handled, what human review exists, and how drift or quality degradation will be monitored over time.
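A small Python sketch shows what "how prompts or model inputs are logged" can mean in practice: redact obvious identifiers before anything reaches a log. The patterns, the MRN format, and the `log_entry` shape below are assumptions for illustration only. Regex redaction of this kind catches a narrow set of cases and is not a substitute for a reviewed de-identification approach.

```python
import re

# Illustrative patterns only; real PHI detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    # Hypothetical medical record number format (assumption).
    "MRN": re.compile(r"\bMRN[:\s]*\d+\b", re.IGNORECASE),
}


def redact(text):
    """Replace each matched identifier with a bracketed label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def log_entry(prompt, model_version):
    """Build a log record that keeps the redacted prompt, never the raw one."""
    return {
        "model_version": model_version,
        "prompt_redacted": redact(prompt),
        "prompt_chars": len(prompt),
    }
```

Recording the model version alongside each entry is what later makes drift and quality reviews possible, because outputs can be tied back to the model that produced them.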

That's where an AI transformation framework can be useful. It forces the organization to connect model choices, business goals, security expectations, and delivery process before AI gets embedded across products.

One practical note on vendor evaluation. Bridge Global positions its work around AI-driven software development and digital transformation, including healthcare-relevant engineering capabilities such as compliant platform work, integrations, cloud enablement, QA, and full-stack delivery. That's one example of the kind of profile to compare against other partners. The point isn't brand selection. It's whether the partner can align stack decisions with regulated operations.

From Blueprints to Breakthroughs: Case Studies and Outcomes

A launch can hit every sprint goal and still fail the business six months later. In digital health, the outcome that matters is operational adoption under compliance constraints.

Case studies are useful only if they show how engineering, compliance, and day-to-day operations worked together after go-live. I look for three signals. Did the product fit an actual clinical or administrative workflow? Did the delivery model support auditability and change control? Did the technical choices reduce cost, delay, or support burden in a measurable way?

Outcome pattern one. Adoption comes from workflow fit

Teams often overrate feature count and underrate operational friction. A patient app, virtual care workflow, or provider dashboard gets traction when it respects how people already work and improves one painful step without creating two new ones.

A credible case in this category usually looks like this:

- Problem: Follow-up after appointments was inconsistent. Staff switched between phone calls, portal messages, and email, with no reliable view of status.
- Engineering response: The partner built a communication workflow tied to role-based access, consent rules, escalation paths, and clear ownership states for front-desk and care teams.
- Business outcome: Staff spent less time chasing patients manually. Managers could see bottlenecks. Patient outreach became easier to track and defend during reviews.

The value is operational. The software became part of the care coordination process instead of another tool staff had to remember to open.

Outcome pattern two. Efficiency usually comes from integration discipline

Healthcare efficiency gains rarely come from a new interface alone. They come from fewer handoffs, less duplicate entry, and cleaner system behavior across scheduling, billing, EHR, CRM, and patient engagement tools.

Here is the pattern I expect to see in a strong case study:

| Problem | Engineering response | Business effect |
|---|---|---|
| Staff re-enter the same data in multiple systems | Build tested integrations, normalize payloads, and define data ownership between systems | Lower admin effort and fewer downstream errors |
| Operations teams do not trust reports | Standardize event capture, validation rules, and reporting logic | Faster decisions and fewer reconciliation drills |
| Support tickets rise after launch | Add monitoring, alerting, runbooks, and named ownership for fixes | Lower support cost and more predictable service levels |

This is also where service scope and engagement model start to matter. A dedicated team that owns integration quality, release coordination, and post-launch defect patterns will usually produce better long-term results than a project team measured only on initial delivery.

Outcome pattern three. Compliance work can improve release speed

Some of the best healthtech outcomes start with cleanup, not net-new features. I have seen products stall because access controls were inconsistent, audit logs were incomplete, and release approvals lived in email threads. Every change created rework for legal, security, and operations.

A capable engineering partner treats that as a delivery problem, not just a security problem. They tighten identity and access patterns, make audit evidence easier to retrieve, and put release controls inside the development process. If AI features are in scope, they also define how protected data is handled in prompts, logs, model outputs, and human review steps. That connection matters. AI capability without compliance discipline creates risk, and compliance work without product alignment slows the roadmap.

The result is usually less dramatic than a flashy product launch, but more valuable. Releases move with fewer approval delays. Support escalations drop. Teams spend less time reconstructing what happened during an incident or audit.

For examples of this operating model in practice, review these healthcare-focused client cases and delivery outcomes. The point is not polished screenshots. It is evidence that the partner can connect engineering services, regulated workflows, and engagement structure to results that the business can sustain.

Your Vendor Evaluation Checklist

A good vendor call should feel a little uncomfortable for the vendor. If every answer sounds smooth and broad, you probably aren't asking questions that expose delivery risk. The right checklist forces specificity.

Technical and domain questions

Ask these early, before pricing discussions get too far along.

- How have you handled FHIR-based integrations in production-like environments? Listen for concrete discussion of resource modeling, mapping, terminology, and version handling.
- What healthcare workflows have you built for clinicians, patients, caregivers, or operations teams? You want domain familiarity, not just a generic app experience.
- How do you approach AI features in regulated contexts? Strong teams discuss boundaries, human oversight, and data handling, not just model selection.

Security and compliance questions

Weak vendors often become vague at this point.

- Will you sign the appropriate healthcare data agreements and document responsibilities clearly?
- How do you embed security testing in delivery, not just before launch?
- What logs, evidence, and approval records do you maintain for audit support?

Ask who owns patching, access reviews, vulnerability response, and incident communication after go-live. If ownership is fuzzy, the risk is real.

Process and delivery questions

The point here is to test whether the team can operate under real constraints.

- How do you run discovery when product, legal, security, and clinical stakeholders disagree?
- What does your release process look like for a healthcare product with sensitive data?
- How do you manage backlog changes without losing control of the budget or validation effort?

Team and communication questions

Don't just ask for resumes. Ask how the team works.

| Area | Question to ask | What a solid answer sounds like |
|---|---|---|
| Team structure | Who is on the team, and who stays through later phases? | Named roles, continuity plan, clear ownership |
| Escalation | What happens when blockers affect timeline or compliance? | Defined path, not improvised heroics |
| Product collaboration | Who translates between business and engineering? | Someone explicitly owns that bridge |

Portfolio and proof questions

Finish with the evidence.

- Can we speak with a relevant healthcare client or review comparable work?
- Can you show examples of maintenance, not just launch stories?
- What did you learn from a healthcare project that went off track, and what changed afterward?

That last question is underrated. Mature partners can describe mistakes without hiding behind abstractions.

Frequently Asked Questions About Digital Health Engineering

How long does it take to build a digital health product?

Healthcare teams usually underestimate timeline risk in integrations, validation, and operational handoffs, not in feature coding. A focused MVP may ship in months. A product that includes EHR connections, role-based access, audit logs, patient messaging, analytics, and production support readiness takes longer because each layer affects security review and release control.

The useful question for vendor selection is not “How fast can you build it?” Ask, “What can you ship safely in release one without creating rework in compliance, data architecture, or clinical workflow later?”

What hidden costs should buyers expect?

The overruns usually come from work around the product, not the interface itself. Common examples include data cleanup before migration, API inconsistency across partner systems, security hardening, test evidence for regulated releases, cloud cost tuning, and internal review time from legal, privacy, compliance, and clinical leaders.

I look for proposals that make these costs visible early. If an engineering partner treats AI, integrations, and QA as separate workstreams with no shared plan for HIPAA controls, auditability, and support ownership, budget drift is likely.

Should we augment our team or outsource the whole product?

Choose the model that matches your operating gap. Team augmentation fits when internal product and technical leadership are strong, but the company needs added capacity in interoperability, mobile, data engineering, AI implementation, or test automation. A fuller delivery model is safer when architecture ownership, release governance, vendor coordination, and post-launch support are still undefined.

This is an operating decision as much as a staffing decision. In digital health, the wrong model creates delays in security reviews, unclear accountability in incidents, and expensive handoffs between build and maintenance teams.

When does a dedicated team make sense?

A dedicated team makes sense when the product roadmap extends well past launch and the primary challenge is continuity. That is common in healthcare. Requirements shift with payer rules, clinical workflows change, integrations need maintenance, and every release has a downstream compliance impact.

In that setting, stable team context matters. Engineers who already understand your PHI boundaries, audit requirements, architecture decisions, and release process make fewer risky assumptions than a rotating project squad.

How do we know a partner understands healthcare and not just software?

Ask them to connect engineering choices to operational consequences. A credible healthtech partner should be able to explain how architecture affects HIPAA safeguards, how AI features change validation and monitoring requirements, how integration design affects support load, and how the engagement model supports incident response after go-live.

Generic firms usually answer those topics one by one. Experienced healthcare teams connect them because they have lived the trade-offs.

Bridge Global works with organizations that need AI-driven software delivery, compliant healthtech engineering, integration support, and long-term product execution. Review the fit against your product stage, risk profile, and team model, then proceed only if the delivery approach is strong enough to support the product after launch.